To unlock their full intelligence, AI agents require broad access to compute resources, such as a sandboxed computer, not just a single tool or database. Providing only limited access wastes their cognitive capacity. The challenge is enabling this power securely, which may require innovations such as new types of firewalls.

Related Insights

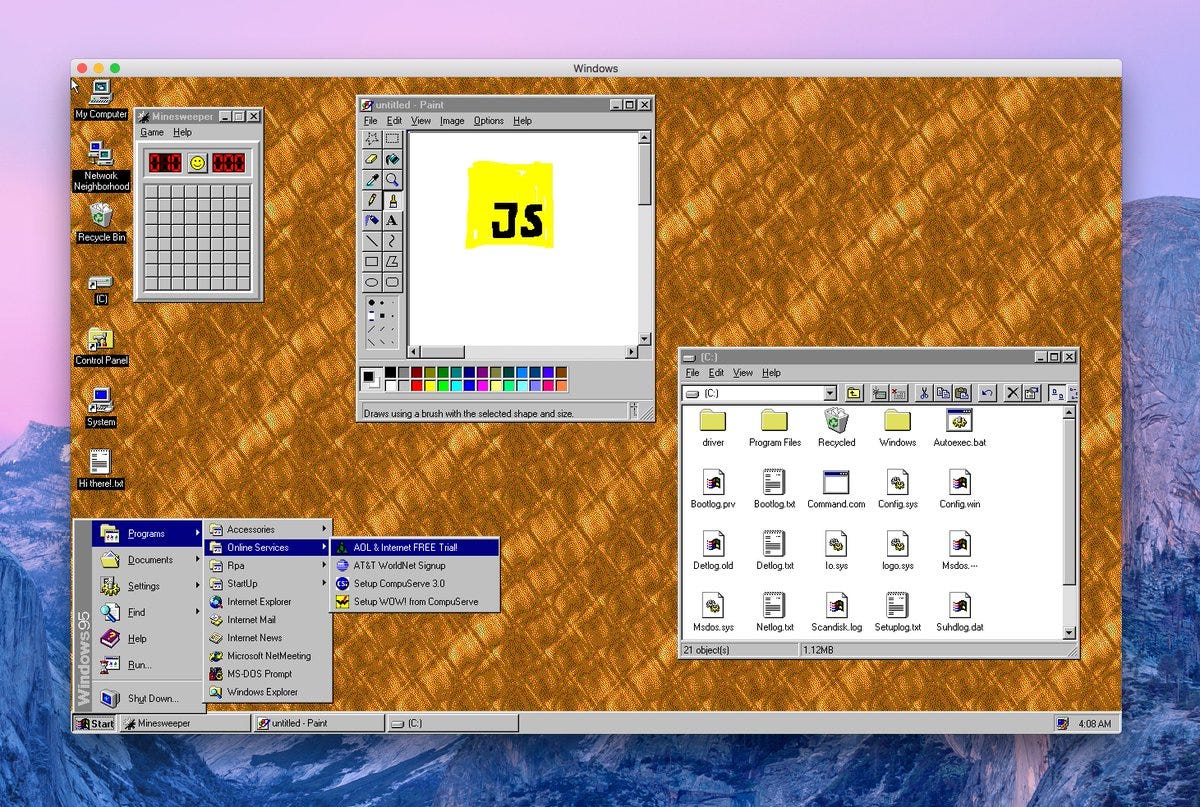

Because agentic frameworks like OpenClaw require broad system access (shell, files, apps) to be useful, running them on a personal computer is a major security risk. Experts like Andrej Karpathy recommend isolating them on dedicated hardware, like a Mac Mini or a separate cloud instance, to prevent compromises from escalating.

Instead of placing agents inside a pre-set environment, a more powerful approach for reasoning models is to start with just the agent. Then, give it the tools and skills to boot its own development stack as needed, granting it more autonomy and control over its workspace.

Current AI tools are in "easy mode" because they operate with the user's direct authentication and permissions. The much harder, yet-to-be-solved problem is "hard mode": autonomous agents that need their own scoped access to enterprise resources without dramatically increasing security risks.

To address security concerns, powerful AI agents should be provisioned like new human employees. This means running them in a sandboxed environment on a separate machine, with their own dedicated accounts, API keys, and access tokens, rather than on a personal computer.
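The "provision it like a new employee" idea can be sketched in a few lines. This is only an illustration under stated assumptions: `AgentIdentity`, `provision_agent`, the `svc-` account naming, and the scope strings are all hypothetical and do not come from any particular platform.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical record for an agent's own, dedicated identity."""

    agent_id: str
    service_account: str  # dedicated account, never the human user's login
    scopes: frozenset[str] = field(default_factory=frozenset)

    def can(self, scope: str) -> bool:
        # Deny by default: only explicitly granted scopes are allowed.
        return scope in self.scopes


def provision_agent(agent_id: str, scopes: set[str]) -> AgentIdentity:
    """Create a separate identity for the agent with an explicit scope list."""
    return AgentIdentity(
        agent_id=agent_id,
        service_account=f"svc-{agent_id}",  # assumed naming convention
        scopes=frozenset(scopes),
    )


agent = provision_agent("reports-bot", {"tickets:read"})
print(agent.service_account, agent.can("tickets:read"), agent.can("tickets:delete"))
```

Because the agent holds its own account and an explicit scope list, revoking or narrowing its access does not touch any human user's credentials.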

AI agents present a UX problem: either grant risky, sweeping permissions or suffer "approval fatigue" by confirming every action. Sandboxing creates a middle ground. The agent can operate autonomously within a secure environment, making it powerful without being dangerous to the host system.
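One way to picture that middle ground is a policy that auto-approves actions inside the sandbox and asks for confirmation only outside it. The sketch below is a minimal illustration, not a real framework's API: `SANDBOX_ROOT`, `Decision`, and `decide` are hypothetical names, and the check is purely lexical, so actual isolation would still have to come from the operating system or container.

```python
import posixpath
from enum import Enum

# Hypothetical sandbox root; a real deployment would choose its own.
SANDBOX_ROOT = "/sandbox/agent-1"


class Decision(Enum):
    ALLOW = "allow"        # inside the sandbox: no prompt needed
    ASK_USER = "ask_user"  # outside the sandbox: require confirmation


def decide(target_path: str) -> Decision:
    """Auto-approve file actions inside the sandbox, ask for anything else."""
    # Relative paths are interpreted relative to the sandbox root;
    # an absolute path replaces the root entirely in posixpath.join.
    joined = posixpath.join(SANDBOX_ROOT, target_path)
    # normpath collapses "." and ".." lexically (it does not resolve symlinks).
    normalized = posixpath.normpath(joined)
    inside = normalized == SANDBOX_ROOT or normalized.startswith(SANDBOX_ROOT + "/")
    return Decision.ALLOW if inside else Decision.ASK_USER


print(decide("notes/todo.txt"))
print(decide("/sandbox/agent-1/../../etc/passwd"))
```

With a policy like this, the user is prompted only for the rare action that leaves the sandbox, rather than for every step the agent takes.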

Powerful local AI agents require deep, root-level access to a user's computer to be effective. This creates a security nightmare, as granting these permissions essentially creates a backdoor to all personal data and applications, making the user's system highly vulnerable.

As autonomous agents become prevalent, they'll need a sandboxed environment to access, store, and collaborate on enterprise data. This core infrastructure must manage permissions, security, and governance, creating a new market opportunity for platforms that can serve as this trusted container.

The true capability of AI agents comes not just from the language model, but from having a full computing environment at their disposal. Vercel's internal data agent, D0, succeeds because it can write and run Python code, query Snowflake, and search the web within a sandbox environment.

A critical, non-obvious requirement for enterprise adoption of AI agents is the ability to contain their 'blast radius.' Platforms must offer sandboxed environments where agents can work without the risk of making catastrophic errors, such as deleting entire datasets—a problem that has reportedly already caused outages at Amazon.

As AI agents evolve from information retrieval to active work (coding, QA testing, running simulations), they require dedicated, sandboxed computational environments. This creates a new infrastructure layer where every agent is provisioned its own 'computer,' moving far beyond simple API calls and creating a massive market opportunity.