
Google's Embedding 2 model is a significant infrastructure upgrade because it is 'natively multimodal.' The model can directly understand and retrieve images, diagrams, and text without first converting non-text data into lossy captions. For enterprises, that makes internal knowledge bases and co-pilots more effective and accurate, because retrieval works on the original content rather than on a text approximation of it.

Related Insights

While companies readily use models that process images, audio, and text inputs, the practical application of generating multimodal outputs (like video or complex graphics) remains rare in business. The primary output is still text or structured data, with synthesized speech being the main exception.

To move beyond keyword search in their media archive, Tim McLear's system generates two vector embeddings for each asset: one from the image thumbnail and another from its AI-generated text description. Fusing these enables a powerful semantic search that understands visual similarity and conceptual relationships, not just exact text matches.
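
The summary above does not say how the two embeddings are fused, so the following is only a minimal sketch of one common option: normalize each vector, scale it by a weight, and concatenate. The function names, the `image_weight` parameter, and the toy vectors are all hypothetical; a real system would obtain its vectors from embedding models rather than hand-written lists.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        return list(vec)
    return [x / norm for x in vec]

def fuse(image_vec, text_vec, image_weight=0.5):
    """Fuse two embeddings by weighted concatenation (an assumed technique).

    Using square-root weights keeps the fused vector at unit length, so the
    dot product of two fused vectors equals
    image_weight * cos(images) + (1 - image_weight) * cos(texts).
    """
    w_img = math.sqrt(image_weight)
    w_txt = math.sqrt(1.0 - image_weight)
    img = [w_img * x for x in l2_normalize(image_vec)]
    txt = [w_txt * x for x in l2_normalize(text_vec)]
    return img + txt

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def search(query_fused, index, top_k=2):
    """Return the ids of the top_k assets most similar to the query."""
    scored = [(cosine(query_fused, vec), asset_id) for asset_id, vec in index.items()]
    scored.sort(reverse=True)
    return [asset_id for _, asset_id in scored[:top_k]]

# Toy index: (thumbnail embedding, description embedding) per asset.
index = {
    "asset_a": fuse([1.0, 0.0, 0.0], [1.0, 0.0]),
    "asset_b": fuse([0.9, 0.1, 0.0], [0.0, 1.0]),  # looks similar, described differently
    "asset_c": fuse([0.0, 0.0, 1.0], [0.0, 1.0]),  # different on both counts
}

query = fuse([1.0, 0.0, 0.0], [1.0, 0.0])
results = search(query, index, top_k=2)
print(results)
```

In this toy data, `asset_b` ranks second purely on visual similarity even though its description does not match, which is the behavior a text-only index would miss.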

Google's NotebookLM now generates "cinematic video overviews," a leap beyond simple slideshows. By orchestrating its Gemini models to act as a "creative director" for narrative and style, Google is strategically demonstrating its leadership in multimodal AI with a practical, high-value application that differentiates it from competitors.

The vast majority of enterprise information has been trapped in formats like PDFs and documents, leaving it largely unusable. AI, through techniques like RAG and automated structure extraction, is unlocking this data for the first time, making it queryable and enabling new large-scale analysis.

Advanced multimodal AI can analyze a photo of a messy, handwritten whiteboard session and produce a structured, coherent summary. It can even identify missing points and provide new insights, transforming unstructured creative output into actionable plans.

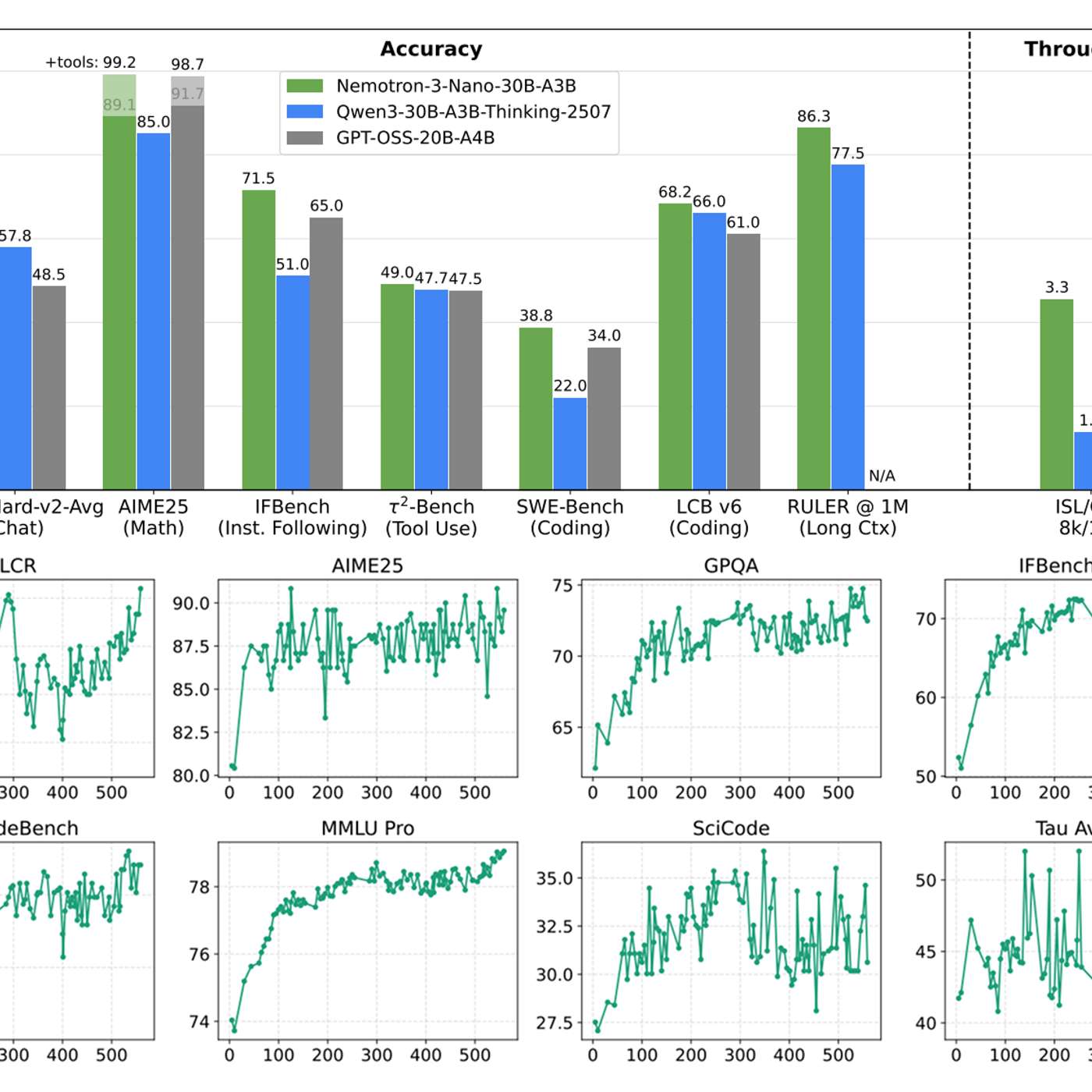

Standard Retrieval-Augmented Generation (RAG) systems often fail because they treat complex documents as pure text, missing crucial context within charts, tables, and layouts. The solution is to use vision language models for embedding and re-ranking, making visual and structural elements directly retrievable and improving accuracy.
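
The two-stage pattern described above (embed, then re-rank) can be sketched as follows. This is an illustration under stated assumptions, not a real pipeline: the `Page` type, the precomputed `embedding` values, and the `toy_rerank_score` function are hypothetical stand-ins for what a vision language model would produce from page images.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Page:
    id: str
    embedding: List[float]  # assumed to come from a vision-capable embedding model
    elements: List[str]     # hypothetical labels for what the page visually contains

def dot(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))

def retrieve_then_rerank(
    query_vec: List[float],
    query_text: str,
    pages: List[Page],
    rerank_score: Callable[[str, Page], float],
    first_stage_k: int = 3,
    final_k: int = 2,
) -> List[str]:
    """Stage 1: cheap vector similarity. Stage 2: a costlier re-ranker on the shortlist."""
    candidates = sorted(
        pages, key=lambda p: dot(query_vec, p.embedding), reverse=True
    )[:first_stage_k]
    reranked = sorted(candidates, key=lambda p: rerank_score(query_text, p), reverse=True)
    return [p.id for p in reranked[:final_k]]

def toy_rerank_score(query_text: str, page: Page) -> float:
    """Placeholder for a VLM re-ranker: counts query words found among page elements."""
    words = set(query_text.lower().split())
    return float(len(words & set(page.elements)))

pages = [
    Page("p1", [0.9, 0.1], ["text"]),
    Page("p2", [0.8, 0.2], ["chart", "revenue"]),
    Page("p3", [0.7, 0.3], ["table", "revenue"]),
    Page("p4", [0.1, 0.9], ["chart", "revenue"]),  # relevant labels, but far in vector space
]

ranked = retrieve_then_rerank([1.0, 0.0], "revenue chart", pages, toy_rerank_score)
print(ranked)
```

The first stage keeps the search cheap across the whole corpus; the re-ranker only sees the shortlist, so it can afford to inspect charts, tables, and layout in detail. Note that `p4` never reaches the re-ranker, which shows why the first-stage embedding also needs to capture visual content.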

For decades, the goal was a 'semantic web' with structured data for machines. Modern AI models achieve the same outcome by being so effective at understanding human-centric, unstructured web pages that they can extract meaning without needing special formatting. This is a major unlock for web automation.

Current multimodal models shoehorn visual data into a 1D text-based sequence. True spatial intelligence is different. It requires a native 3D/4D representation to understand a world governed by physics, not just human-generated language. This is a foundational architectural shift, not an extension of LLMs.

New image models like Google's Nano Banana Pro can transform lengthy articles and research papers into detailed whiteboard diagrams. This represents a powerful new form of information compression, moving beyond simple text summarization to a complete modality shift for easier comprehension and knowledge transfer.

Google's strategy involves building specialized models (e.g., Veo for video) to push the frontier in a single modality. The learnings and breakthroughs from these focused efforts are then integrated back into the core, multimodal Gemini model, accelerating its overall capabilities.