An AI pilot study defined success as "marked and sustained" profit impact within six months. This impossibly high bar automatically classified projects that broke even, were on track for future profit, or provided non-financial benefits as "failures," thus obscuring the real, incremental value of new technology deployments.

Related Insights

Technical metrics like "accuracy" are often the wrong measure for ML projects and can set the wrong expectations. To succeed, projects must be evaluated using business KPIs like profit, savings, or ROI. This aligns data science with business goals and reveals the true value of imperfect predictions.
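To illustrate how accuracy and a business KPI can disagree, here is a minimal sketch assuming a hypothetical marketing campaign. The customer counts, the value per responder, and the cost per contact are all made-up numbers chosen for illustration; none of them come from the source.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    """Confusion-matrix counts for a 'will this customer respond?' model."""
    true_pos: int   # contacted and responded
    false_pos: int  # contacted, did not respond
    false_neg: int  # not contacted, would have responded
    true_neg: int   # not contacted, would not have responded


def accuracy(o: Outcome) -> float:
    total = o.true_pos + o.false_pos + o.false_neg + o.true_neg
    return (o.true_pos + o.true_neg) / total


def profit(o: Outcome, value_per_hit: int, cost_per_contact: int) -> int:
    # Every predicted positive is contacted; only true positives earn revenue.
    contacted = o.true_pos + o.false_pos
    return o.true_pos * value_per_hit - contacted * cost_per_contact


# Hypothetical population: 10,000 customers, 500 of whom would respond.
# Model A predicts "no response" for everyone, so nobody is contacted.
model_a = Outcome(true_pos=0, false_pos=0, false_neg=500, true_neg=9500)
# Model B flags 2,000 customers and catches 300 of the 500 responders.
model_b = Outcome(true_pos=300, false_pos=1700, false_neg=200, true_neg=7800)

for name, model in [("A", model_a), ("B", model_b)]:
    print(name, accuracy(model), profit(model, value_per_hit=100, cost_per_contact=2))
```

Under these assumed numbers, model A is more accurate (0.95 versus 0.81) yet earns nothing, while the less accurate model B yields a profit of 26,000.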

The main obstacle to deploying enterprise AI isn't just technical; it's achieving organizational alignment on a quantifiable definition of success. Creating a comprehensive evaluation suite is crucial before building, as no single person typically knows all the right answers.

Standardized benchmarks for AI models are largely irrelevant for business applications. Companies need to create their own evaluation systems tailored to their specific industry, workflows, and use cases to accurately assess which new model provides a tangible benefit and ROI.

Demanding a direct, line-item ROI for foundational AI initiatives is like asking for the ROI on Wi-Fi: it's the wrong question. Instead of getting bogged down in impossible calculations, leaders should focus on measuring the business outcomes enabled by the technology, such as innovation speed or new product creation. Obsess over outcomes, not direct financial return.

In a new technological wave like AI, a high project failure rate is desirable. It indicates that a company is aggressively experimenting and pushing boundaries to discover what provides real value, rather than being too conservative.

The 85% AI project failure rate isn't a technology problem. It stems from four business and process issues: failing to identify a narrow use case, using data that isn't clean or ready, not defining success and risk, and applying deterministic Agile methods to probabilistic AI development.

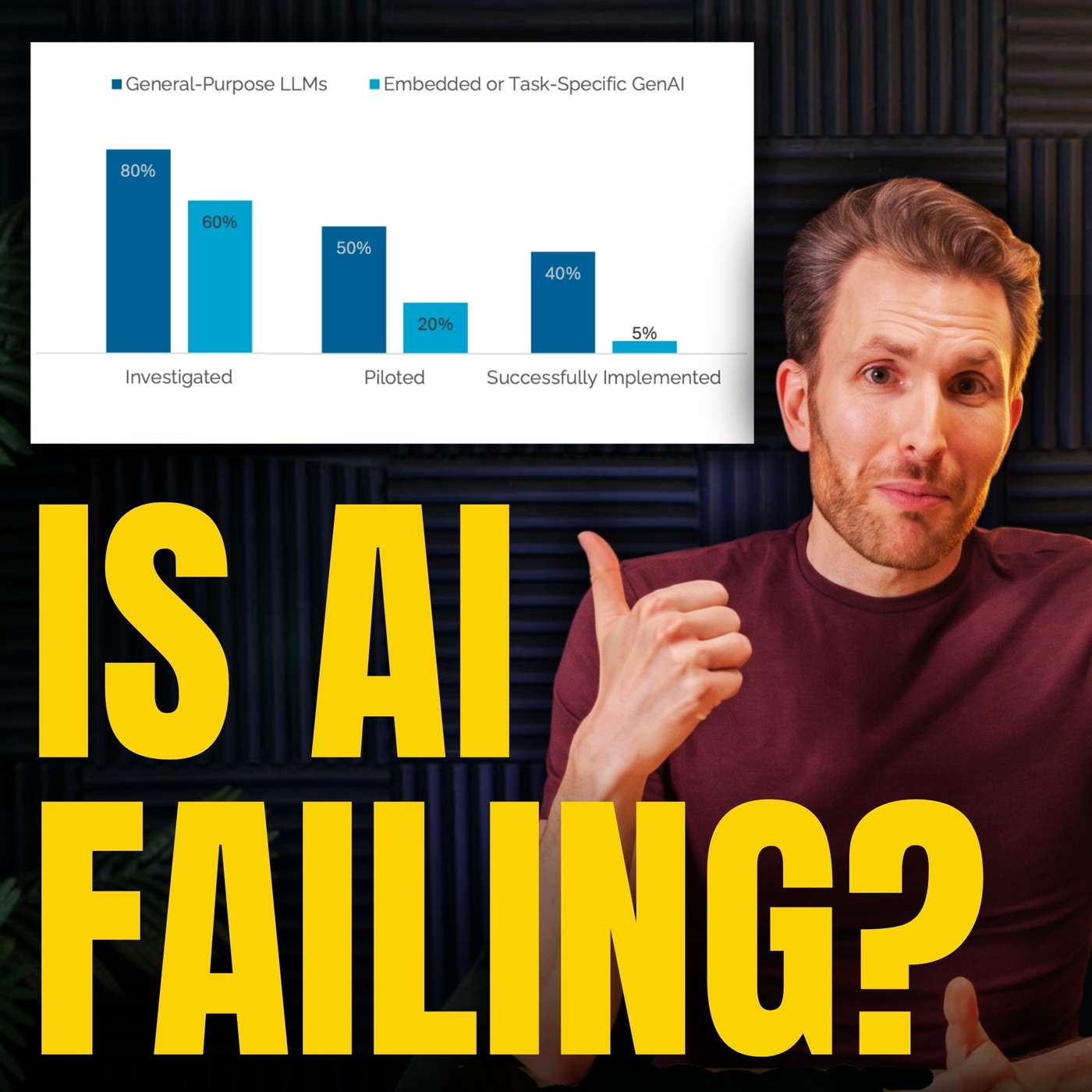

Headlines about high AI pilot failure rates are misleading because it's incredibly easy to start a project, inflating the denominator of attempts. Robust, successful AI implementations are happening, but they require 6-12 months of serious effort, not the quick wins promised by hype cycles.

Don't rely on traditional project milestones to gauge AI progress. Instead, measure success through granular unit economics and operational metrics. Metrics like 'cost per release' or 'cycle time per feature' provide immediate feedback on whether your strategic hypothesis is valid, enabling rapid iteration.
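As a rough sketch of what tracking such metrics could look like, the snippet below computes an average cycle time per feature and a cost per release for two periods. The dates, costs, and release counts are hypothetical placeholders, and the function names are illustrative rather than drawn from any particular tool.

```python
from datetime import date
from statistics import mean


def cycle_time_days(features: list[tuple[date, date]]) -> float:
    """Average days from start to completion across (start, done) pairs."""
    return mean((done - start).days for start, done in features)


def cost_per_release(total_cost: float, releases: int) -> float:
    """Total spend in a period divided by the number of releases shipped."""
    return total_cost / releases


# Hypothetical data: features shipped before and after adopting an AI tool.
before = [(date(2024, 1, 2), date(2024, 1, 16)), (date(2024, 1, 5), date(2024, 1, 15))]
after = [(date(2024, 3, 1), date(2024, 3, 6)), (date(2024, 3, 4), date(2024, 3, 11))]

print("cycle time before:", cycle_time_days(before), "days")
print("cycle time after: ", cycle_time_days(after), "days")
print("cost per release before:", cost_per_release(120_000, 4))
print("cost per release after: ", cost_per_release(120_000, 6))
```

Comparing the same metric across short periods is what gives the quick feedback described above: if the numbers do not move, the hypothesis behind the deployment can be revisited early.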

The primary reason most pharmaceutical AI projects fail to deliver value is not technical limitation but strategic failure. Organizations become obsessed with optimizing algorithms while neglecting the foundational blueprint that connects AI investment to measurable business outcomes and operational readiness.

Businesses mistakenly believe that a functioning ML model is intrinsically valuable. However, value is only realized when a model is deployed to change organizational operations. This fixation on the technology itself, rather than its practical implementation, is a primary cause of project failure.
