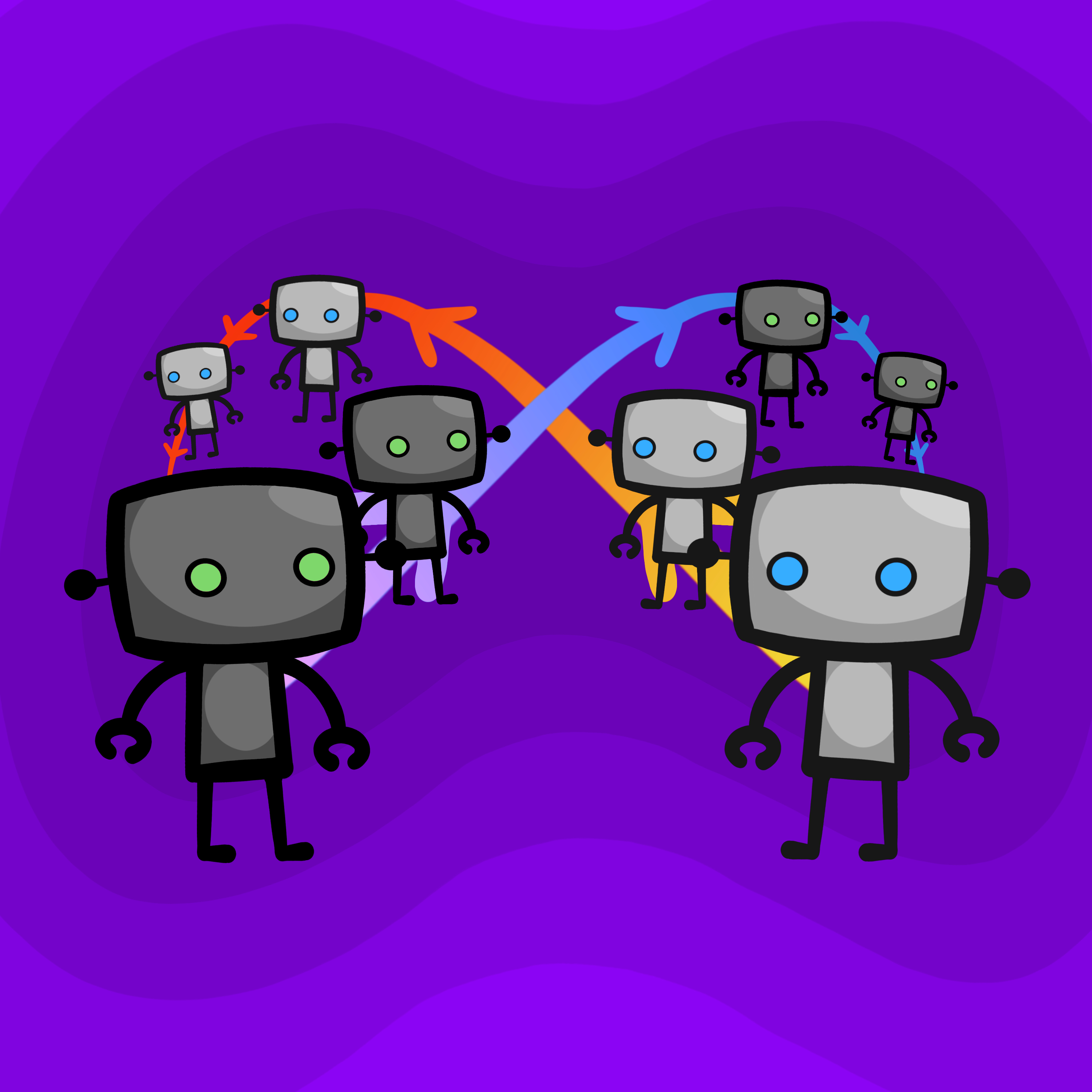

A simple way for AIs to cooperate is to simulate each other and copy the opponent's action. However, if both agents do this, each simulation launches another simulation of the other, creating an infinite loop. The fix is to introduce a small probability (epsilon) of cooperating unconditionally instead of simulating. This guarantees that the chain of nested simulations terminates with probability 1.
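Below is a minimal Python sketch of the epsilon-grounded idea. It assumes a one-shot Prisoner's Dilemma in which a program is a function that receives its opponent (as a callable it can simulate) and returns "C" or "D". The names `epsilon_grounded_bot`, `cooperate_bot` and `defect_bot`, and the value `EPSILON = 0.1`, are hypothetical choices made for illustration.

```python
import random

EPSILON = 0.1  # hypothetical grounding probability
_rng = random.Random(0)  # seeded so runs are reproducible


def epsilon_grounded_bot(opponent):
    """With probability EPSILON cooperate outright; otherwise simulate the
    opponent playing against this bot and copy the simulated action."""
    if _rng.random() < EPSILON:
        return "C"  # grounding step: ends the chain of nested simulations
    return opponent(epsilon_grounded_bot)


def cooperate_bot(opponent):
    return "C"


def defect_bot(opponent):
    return "D"


if __name__ == "__main__":
    # Self-play: the innermost simulation grounds out with "C" and every
    # enclosing level copies it, so the result is always "C".
    print(epsilon_grounded_bot(epsilon_grounded_bot))  # C
    # Against an unconditional defector it cooperates only about EPSILON of the time.
    trials = 10_000
    coop = sum(epsilon_grounded_bot(defect_bot) == "C" for _ in range(trials))
    print(coop / trials)
```

The same bot also cooperates with `cooperate_bot`, because every simulation of that opponent returns "C".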

The decision to cooperate hinges on whether an AI perceives an object as a strategic agent or a non-strategic part of the environment (e.g., a water bottle). This classification is fundamental but difficult, as misinterpreting the environment could lead to being exploited or failing to cooperate when beneficial.

Early program equilibrium strategies cooperated only if the opponent's source code was identical to their own. This approach is extremely fragile: trivial changes like an extra space or a different variable name break cooperation, making it impractical for real-world applications.
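The fragility is easy to reproduce. The sketch below is a hypothetical Python stand-in for a source-matching bot (sometimes called a CliqueBot). It stores each program as text, runs it with `exec`, and passes it both its own source and the opponent's. The helper names `run_program` and `play` and the exact program text are assumptions made for illustration.

```python
# Source text of a bot that cooperates only with an exact copy of itself.
CLIQUE_SRC = (
    "def bot(my_src, opp_src):\n"
    "    return 'C' if opp_src == my_src else 'D'\n"
)

# Two functionally identical variants that differ only in their text.
RENAMED_SRC = CLIQUE_SRC.replace("opp_src", "other_src")
EXTRA_SPACE_SRC = CLIQUE_SRC.replace("else 'D'", "else  'D'")


def run_program(src: str, my_src: str, opp_src: str) -> str:
    """Execute a program's source text and call the `bot` function it defines."""
    namespace: dict = {}
    exec(src, namespace)
    return namespace["bot"](my_src, opp_src)


def play(src_a: str, src_b: str) -> tuple[str, str]:
    """Each program sees its own source and its opponent's source."""
    return run_program(src_a, src_a, src_b), run_program(src_b, src_b, src_a)


print(play(CLIQUE_SRC, CLIQUE_SRC))       # ('C', 'C')
print(play(CLIQUE_SRC, RENAMED_SRC))      # ('D', 'D')
print(play(CLIQUE_SRC, EXTRA_SPACE_SRC))  # ('D', 'D')
```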

Despite their different mechanisms, advanced cooperative strategies such as proof-based (Löbian) and simulation-based (epsilon-grounded) bots can successfully cooperate with each other. This suggests that independently designed rational agents could interoperate robustly, a positive sign for AI safety.

A robust cooperative AI will cooperate with a simple "always cooperate" bot, even though that bot could be exploited by defecting against it. However, choosing to defect is risky: a sophisticated adversary could present a simple bot to test for predatory behavior. The decision therefore depends on beliefs about the opponent's strategic depth.

In program equilibrium, players submit computer programs instead of actions. These programs can read each other's source code, which lets them verify cooperative intent. They can then reach mutual cooperation in dilemmas like the one-shot Prisoner's Dilemma, an outcome that is not an equilibrium in standard game theory.
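Here is a small sketch of the contrast. It assumes the conventional Prisoner's Dilemma payoffs 3/0/5/1; these numbers are hypothetical, and any values with the same ordering behave alike. In the standard game, defecting is the best response to either action, so mutual cooperation is not an equilibrium. In the program game, a bot that cooperates only with an identical source makes deviation unprofitable. `Program`, `play` and the placeholder source strings are illustrative assumptions. The sketch also assumes that a program's source text fully determines its behavior.

```python
from dataclasses import dataclass
from typing import Callable

# (row action, column action) -> (row payoff, column payoff)
PAYOFF = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def best_response(opp_action: str) -> str:
    """Row player's best action in the ordinary one-shot game."""
    return max("CD", key=lambda a: PAYOFF[(a, opp_action)][0])


@dataclass(frozen=True)
class Program:
    source: str                        # text the opponent is allowed to read
    decide: Callable[[str, str], str]  # (own source, opponent source) -> action


CLIQUE = Program("cooperate iff opponent source == my source",
                 lambda me, opp: "C" if opp == me else "D")
DEFECT = Program("always defect", lambda me, opp: "D")


def play(p: Program, q: Program) -> tuple[int, int]:
    a = p.decide(p.source, q.source)
    b = q.decide(q.source, p.source)
    return PAYOFF[(a, b)]


print(best_response("C"), best_response("D"))  # D D
print(play(CLIQUE, CLIQUE))  # (3, 3)
print(play(DEFECT, CLIQUE))  # (1, 1): deviating lowers the deviator's payoff
```

Under these assumptions, any program whose source differs from `CLIQUE`'s faces defection and so earns at most 1 instead of 3. That is why (`CLIQUE`, `CLIQUE`) is an equilibrium of the program game.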

Program equilibrium isn't just an abstract concept; it serves as a direct model for how autonomous AI systems could interact. It also provides a powerful analogy for human institutions like governments, where laws and constitutions act as a transparent "source code" governing their behavior.

The "epsilon-grounded" simulation approach has a hidden cost: its expected runtime is inversely proportional to epsilon, because the number of nested simulations before one grounds out averages 1/epsilon. Keeping the bot's behavior closely tied to its opponent's requires a small epsilon, so that it rarely cooperates unconditionally. That means accepting potentially very long computation times, a direct trade-off between speed and reliability.
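The cost follows from a short calculation. Suppose each level of nested simulation grounds out independently with probability $\varepsilon$. Then the number of levels $K$ until grounding is geometric, so $\mathbb{E}[K] = \sum_{k \ge 1} k(1-\varepsilon)^{k-1}\varepsilon = 1/\varepsilon$. The Python sketch below checks this empirically. The helpers `grounding_depth` and `mean_depth` and the fixed seed are hypothetical.

```python
import random


def grounding_depth(epsilon: float, rng: random.Random) -> int:
    """Number of nested simulation levels until one grounds out.

    Each level independently grounds with probability epsilon, so the
    depth follows a geometric distribution with mean 1 / epsilon.
    """
    depth = 1
    while rng.random() >= epsilon:
        depth += 1
    return depth


def mean_depth(epsilon: float, trials: int = 20_000, seed: int = 0) -> float:
    """Average grounding depth over many independent runs."""
    rng = random.Random(seed)
    return sum(grounding_depth(epsilon, rng) for _ in range(trials)) / trials


for eps in (0.5, 0.1, 0.01):
    print(f"epsilon={eps}: mean depth {mean_depth(eps):.1f} (theory {1 / eps:.0f})")
```

Halving epsilon roughly doubles the expected work.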

To overcome brittle code-matching, AIs can use formal logic to prove cooperative intent. This is enabled by Löb's theorem, a result from mathematical logic that lets a program conclude "my opponent cooperates" without falling into an infinite loop of reasoning, creating a robust cooperative equilibrium.
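For reference, here is the standard statement of Löb's theorem and a sketch of the idealized argument for why two proof-based bots cooperate. The argument assumes the idealized bot often called FairBot. That bot cooperates if and only if it can prove, in a theory $T$ such as Peano Arithmetic, that its opponent cooperates with it. Here $\Box$ means "provable in $T$", and $A$ and $B$ abbreviate "bot 1 cooperates" and "bot 2 cooperates". Bots with bounded proof search need a more careful variant of the theorem, which this sketch does not cover.

$$
\text{Löb's theorem:}\quad \text{if } T \vdash \Box P \rightarrow P, \text{ then } T \vdash P.
$$

$$
\begin{aligned}
&T \vdash A \leftrightarrow \Box B, \quad T \vdash B \leftrightarrow \Box A && \text{(each bot cooperates iff it can prove the other does)}\\
&T \vdash \Box(A \land B) \rightarrow \Box A \land \Box B && (\Box \text{ distributes over } \land)\\
&T \vdash \Box(A \land B) \rightarrow A \land B && \text{(substitute the first line)}\\
&T \vdash A \land B && (\text{Löb's theorem with } P = A \land B)
\end{aligned}
$$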

A key finding is that almost any outcome giving every player more than they would get under mutual punishment can be sustained as an equilibrium (a "folk theorem"). While this enables cooperation, it creates a massive coordination problem: with so many possible "good" outcomes, agents may fail to converge on the same one, leading to suboptimal results.
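Here is a rough Python illustration of how many outcomes can qualify. It uses hypothetical Prisoner's Dilemma payoffs 3/0/5/1 and takes each player's punishment level to be the minimax value: the most that player can guarantee when the opponent acts to minimize it. Outcomes are probability mixtures over the four action pairs, sampled on a coarse grid. It is an assumption that randomized outcomes are covered; the exact class of outcomes depends on the version of the folk theorem. The helpers `minimax_value`, `expected_payoffs` and `sustainable_outcomes`, and the grid resolution, are likewise illustrative. The minimax uses pure actions only; because defection is dominant here, allowing mixed strategies gives the same value.

```python
from fractions import Fraction
from itertools import product

ACTIONS = ("C", "D")
# Hypothetical Prisoner's Dilemma payoffs: (row player, column player).
PAYOFF = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}
PAIRS = list(PAYOFF)


def minimax_value(player: int) -> int:
    """Best payoff `player` can guarantee when the opponent tries to minimize it."""

    def payoff(own: str, other: str) -> int:
        pair = (own, other) if player == 0 else (other, own)
        return PAYOFF[pair][player]

    return min(max(payoff(own, other) for own in ACTIONS) for other in ACTIONS)


def expected_payoffs(weights):
    """Expected (row, column) payoffs when action pairs occur with these probabilities."""
    return tuple(
        sum(w * PAYOFF[pair][i] for w, pair in zip(weights, PAIRS)) for i in (0, 1)
    )


def sustainable_outcomes(steps: int = 10):
    """Distinct payoff profiles on a grid of mixtures that give both players
    at least their minimax value (exact arithmetic via Fraction)."""
    floor = (minimax_value(0), minimax_value(1))
    found = set()
    for counts in product(range(steps + 1), repeat=len(PAIRS)):
        if sum(counts) != steps:
            continue
        u = expected_payoffs([Fraction(c, steps) for c in counts])
        if u[0] >= floor[0] and u[1] >= floor[1]:
            found.add(u)
    return found


print(minimax_value(0), minimax_value(1))  # 1 1
print(len(sustainable_outcomes()), "distinct payoff profiles pass on a 10-step grid")
```

Even in this tiny game, many distinct profiles pass the test. Examples include mutual cooperation, giving (3, 3), and an even mix of (C, C) and (C, D), giving (1.5, 4). The players still have to agree on which one to aim for.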

In multi-agent simulations, if agents use a shared source of randomness, they can achieve stable equilibria. If they use private randomness, coordinating punishment becomes nearly impossible because one agent cannot verify if another's defection was malicious or a justified response to a third party's actions.

Simulating strategies with memory (like "grim trigger") or with multiple players causes an exponential explosion of simulation branches. This can be solved by having all simulated agents draw from the same shared sequence of random numbers, which forces all simulation branches to halt at the same conceptual "time step."
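Here is a minimal sketch of the shared-sequence fix. It assumes three hypothetical epsilon-grounded bots, each of which cooperates only if simulations of both other players cooperate. Every simulation at nesting depth `d` reads the same number `shared.at(d)`, so once that number falls below `EPSILON`, all branches at that depth ground out together. `SharedRandomness`, `simulate` and the parameter values are illustrative assumptions, not a specific published construction.

```python
import random

EPSILON = 0.5  # hypothetical grounding probability
N_AGENTS = 3


class SharedRandomness:
    """A lazily extended random sequence that every simulated agent reads by depth."""

    def __init__(self, seed: int):
        self._rng = random.Random(seed)
        self._values: list[float] = []

    def at(self, depth: int) -> float:
        while len(self._values) <= depth:
            self._values.append(self._rng.random())
        return self._values[depth]


def simulate(agent: int, depth: int, shared: SharedRandomness, halts: set) -> str:
    """Action of `agent` in a simulation nested `depth` levels deep."""
    if shared.at(depth) < EPSILON:
        halts.add(depth)  # record the depth at which this branch grounded out
        return "C"
    others = [j for j in range(N_AGENTS) if j != agent]
    actions = [simulate(j, depth + 1, shared, halts) for j in others]
    return "C" if all(a == "C" for a in actions) else "D"


shared = SharedRandomness(seed=0)
halts: set = set()
top_level = [simulate(i, 0, shared, halts) for i in range(N_AGENTS)]
print(top_level)      # ['C', 'C', 'C']
print(sorted(halts))  # a single depth: every branch halted at the same step
```

Because every branch at a given depth sees the same number, the tree is cut off uniformly rather than at independently drawn depths. All sub-simulations at the same depth then compute the same result, so caching them by (agent, depth) is one possible optimization that this sketch does not include.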