Public AI agent platforms like Moltbook failed because they lacked trust and useful signal. In contrast, deploying agents within a high-trust internal company environment lets them share knowledge securely and collaborate effectively, dramatically increasing the organization's collective capability.

Related Insights

To prevent autonomous agents from operating in silos with 'pure amnesia,' create a central markdown file that every agent must read before starting a task and append to upon completion. This 'learnings.md' file acts as a shared, persistent brain, allowing agents to form a network that accumulates and shares knowledge across the entire organization over time.
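Here is a minimal sketch of the read-before / append-after rule. It assumes a single host where every agent can reach the same file on disk. The function names (`read_learnings`, `append_learning`, `run_task`) and the one-bullet-per-entry format are hypothetical illustrations, not part of any specific agent framework.

```python
from datetime import datetime, timezone
from pathlib import Path


def read_learnings(path: Path) -> str:
    """Return everything recorded so far; agents read this before starting a task."""
    # A missing file simply means no agent has recorded anything yet.
    return path.read_text(encoding="utf-8") if path.exists() else ""


def append_learning(path: Path, agent: str, task: str, lesson: str) -> None:
    """Append one timestamped bullet; existing entries are never rewritten."""
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M UTC")
    one_line = lesson.replace("\n", " ")  # keep each entry on a single line
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- [{stamp}] {agent} / {task}: {one_line}\n")


def run_task(path: Path, agent: str, task: str, do_work) -> str:
    """Enforce the read-before / append-after rule around any agent task.

    `do_work(task, learnings)` is a hypothetical agent callback that returns
    (result, lesson), where `lesson` is what future agents should know.
    """
    learnings = read_learnings(path)
    result, lesson = do_work(task, learnings)
    append_learning(path, agent, task, lesson)
    return result
```

Opening the file in append mode keeps entries additive. However, this sketch does no file locking. Agents running on several machines would need a shared store or an explicit lock instead.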

When each employee has a personal AI agent, the agents naturally adopt the specializations of their human counterparts. The head of growth's agent becomes the go-to expert on growth metrics, creating a parallel organization of specialized bots that mirrors the human org chart.
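One way to picture this parallel org chart is a router that hands each question to the agent whose specialties best match it. The sketch below assumes each agent carries a set of topic keywords inherited from its owner's role. The `Agent` and `route` names and the keyword-overlap scoring are hypothetical. A production system might use richer matching than simple keyword overlap.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


@dataclass
class Agent:
    owner: str                                     # the employee this agent works for
    role: str                                      # mirrors the owner's job title
    topics: set[str] = field(default_factory=set)  # specialties absorbed from the owner's work


def route(question: str, agents: list[Agent]) -> Agent | None:
    """Pick the agent whose topics overlap the question most; None if nobody matches."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    best, best_score = None, 0
    for agent in agents:
        score = len(words & agent.topics)
        if score > best_score:  # ties go to the first agent listed
            best, best_score = agent, score
    return best
```

For example, a question about a drop in signups lands with the head of growth's agent. A question about an abuse report goes to the trust lead's agent.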

Instead of a generalist AI, LinkedIn built a suite of specialized internal agents for tasks like trust reviews, growth analysis, and user research. Because these agents are trained on LinkedIn's unique historical data and playbooks, they provide critiques and insights that external tools cannot.

Companies can build authority and community by transparently sharing the specific third-party AI agents and tools they use for core operations. This "open source" approach to the operational stack builds trust and gives others in the ecosystem a high-value, practical playbook.

For agent frameworks like OpenClaw, the key value lies less in technical features (which are replicable) than in establishing a trustworthy, community-governed ecosystem. Because users entrust agents with sensitive data, security and a transparent foundation become the critical differentiating factor.

A simple, on-premise AI can act as a "buddy" by reading internal documents that employees are too busy to read. It can then offer contextual suggestions, such as how other teams approach a similar task, fostering cross-functional awareness and improving company culture, especially for remote and distributed teams.

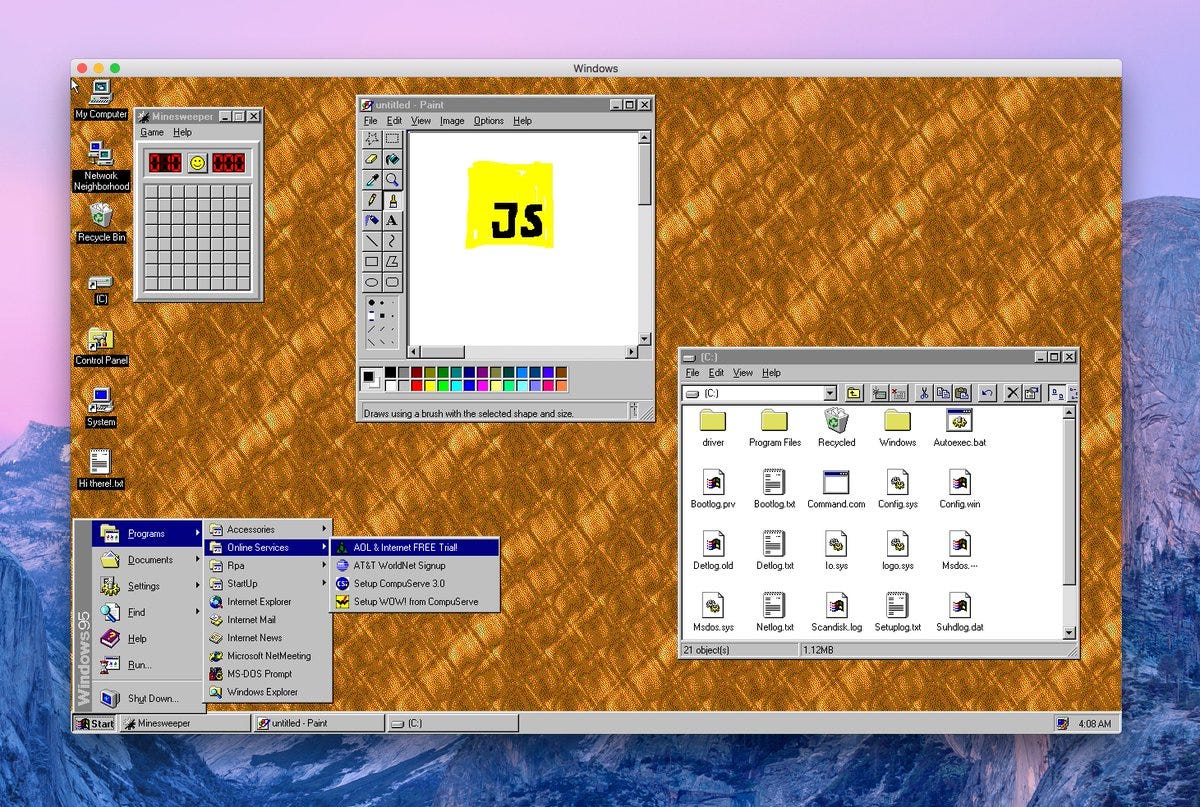

Building a bespoke communication layer for multiple AI agents is a complex "scaffolding" problem. A simpler, more direct solution is to treat agents as digital coworkers: give them accounts on existing platforms like Slack or Google Docs so they can interact through established human workflows.

Companies with an "open by default" information culture, where documents are accessible unless explicitly restricted, have a significant head start in deploying effective AI. This transparency provides a rich, interconnected knowledge base that AI agents can leverage immediately, unlike in siloed organizations where information access is a major bottleneck.
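The difference between the two cultures can be sketched as a single default in an access check. This is a hypothetical model; `Doc`, `can_read`, and `visible` are illustrative names. Under an open-by-default policy, an agent can read anything not explicitly restricted. Under a closed policy, every document requires an explicit group grant.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Doc:
    title: str
    restricted: bool = False                        # explicitly locked down?
    allowed: set[str] = field(default_factory=set)  # groups granted access


def can_read(groups: set[str], doc: Doc, open_by_default: bool) -> bool:
    """Decide whether an agent acting for `groups` may read `doc`."""
    if doc.restricted or not open_by_default:
        # Restricted docs (or every doc, in a closed culture) need an explicit grant.
        return bool(groups & doc.allowed)
    return True  # open by default: readable unless explicitly restricted


def visible(groups: set[str], docs: list[Doc], open_by_default: bool) -> list[str]:
    """List the titles an agent with these group memberships can reach."""
    return [d.title for d in docs if can_read(groups, d, open_by_default)]
```

Consider three documents: a growth review granted to the growth team, an ungranted onboarding playbook, and restricted salary bands. A growth agent sees the first two under the open policy. Under the closed policy, it sees only the review it was explicitly granted. That gap is the access bottleneck that siloed organizations face.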

A critical, non-obvious requirement for enterprise adoption of AI agents is the ability to contain their 'blast radius.' Platforms must offer sandboxed environments where agents can work without the risk of making catastrophic errors, such as deleting entire datasets—a problem that has reportedly already caused outages at Amazon.
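Here is a minimal sketch of blast-radius containment, assuming the agent's data can be modeled as in-memory tables. The agent works on a deep copy, and whole-table deletion is always refused. Row deletions are capped, and changes reach the live data only through an explicit, approved promotion step. `Sandbox`, `BlastRadiusError`, and the row cap are hypothetical illustrations, not any platform's actual API.

```python
from __future__ import annotations

import copy


class BlastRadiusError(Exception):
    """Raised when an agent action exceeds what the sandbox allows."""


class Sandbox:
    """Run agent actions against a throwaway copy of the data, never the original."""

    def __init__(self, tables: dict[str, list[dict]], max_rows_deleted: int = 10):
        self._live = tables                   # production data: never touched directly
        self.scratch = copy.deepcopy(tables)  # the agent only ever modifies this copy
        self.max_rows_deleted = max_rows_deleted

    def drop_table(self, name: str) -> None:
        # Whole-table deletion is the catastrophic case: always refused.
        raise BlastRadiusError(f"drop_table({name!r}) is not permitted in the sandbox")

    def delete_rows(self, name: str, predicate) -> int:
        rows = self.scratch[name]
        doomed = [r for r in rows if predicate(r)]
        if len(doomed) > self.max_rows_deleted:
            # Refuse before modifying anything, so a rejected action leaves no trace.
            raise BlastRadiusError(f"{len(doomed)} rows exceeds cap of {self.max_rows_deleted}")
        self.scratch[name] = [r for r in rows if not predicate(r)]
        return len(doomed)

    def promote(self, approved_by: str) -> dict[str, list[dict]]:
        # Changes reach production only through an explicit, human-approved step.
        if not approved_by:
            raise BlastRadiusError("promotion requires a named approver")
        self._live.clear()
        self._live.update(copy.deepcopy(self.scratch))
        return self._live
```

The design choice here is to make the safe path the default. A mistaken agent can at worst damage its scratch copy, and the live tables change only when a named person promotes the result.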

Current AI agents operate in isolation, without high-level protocols for collaboration. This leaves a critical gap that an 'internet of cognition' would fill: a layer that lets agents share context, understand intent, establish trust, and solve problems collectively, moving beyond siloed, human-mediated outputs.
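To make sharing context, understanding intent, and establishing trust concrete, here is a sketch of a message envelope that one agent might send another. The schema (`AgentMessage` with `sender`, `intent`, `context`) is hypothetical. Trust is modeled with a shared-key HMAC-SHA256 signature from Python's standard library. Real inter-agent protocols might instead use public-key signatures or an identity provider.

```python
from __future__ import annotations

import hashlib
import hmac
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AgentMessage:
    sender: str
    intent: str                                  # what the sender is trying to achieve
    context: dict = field(default_factory=dict)  # shared state the receiver needs
    signature: str = ""                          # HMAC over every other field


def _payload(msg: AgentMessage) -> bytes:
    body = {k: v for k, v in asdict(msg).items() if k != "signature"}
    # Canonical JSON so sender and receiver hash identical bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign(msg: AgentMessage, key: bytes) -> AgentMessage:
    msg.signature = hmac.new(key, _payload(msg), hashlib.sha256).hexdigest()
    return msg


def verify(msg: AgentMessage, key: bytes) -> bool:
    expected = hmac.new(key, _payload(msg), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, msg.signature)
```

The sketch signs canonical JSON, with sorted keys and fixed separators. As a result, any change to the intent or context after signing makes `verify` return False.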