Traditional regulation is ill-equipped for AI's complexity and opacity. The podcast proposes a new model inspired by the Federal Reserve's oversight of banks: embedding technically expert supervisors full-time inside major AI labs. These supervisors could proactively monitor internal risk models and decisions, rather than only reacting to disasters after they occur.

Related Insights

The Fed's most critical future task is not traditional monetary policy but prudential supervision of AI in finance. The Fed chair must lead the effort to understand and create oversight for novel systemic risks emerging from AI adoption by financial institutions, rather than getting distracted by unrelated political issues like green energy.

Instead of trying to anticipate every potential harm, AI regulation should mandate open, internationally consistent audit trails, similar to financial transaction logs. This shifts the focus from pre-approval to post-hoc accountability, allowing regulators and the public to address harms as they emerge.

Dean Ball proposes that AI regulation should be modeled on financial services, not pharmaceuticals. Instead of approving each individual model (like a drug), regulators should focus on the institutional soundness and governance of the labs themselves (like banks), because generalist AIs lack clear 'endpoints' for product-specific testing.

Despite lagging in AI deployment, finance departments lead in governance. Decades of experience with SOX compliance, audit trails, and fiduciary duty created pre-existing frameworks for managing risky tools, which they now apply to AI. This governance-first approach could become a long-term competitive advantage.

When addressing AI's 'black box' problem, lawmaker Alex Bores suggests regulators should bypass the philosophical debate over a model's 'intent' and focus on its observable impact. By setting up tests in controlled environments, such as telling an AI it will be shut down, regulators can discover and mitigate dangerous emergent behaviors before release.

Mark Cuban advocates for a specific regulatory approach to maintain AI leadership. He suggests the government should avoid stifling innovation by over-regulating the creation of AI models. Instead, it should focus intensely on monitoring the outputs to prevent misuse or harmful applications.

Instead of relying solely on human oversight, AI governance will evolve into a system where higher-level "governor" agents audit and regulate other AIs. These specialized agents will manage the core programming, permissions, and ethical guidelines of their subordinates.

Instead of relying solely on human oversight, Bret Taylor advocates a layered "defense in depth" approach for AI safety. This involves using specialized "supervisor" AI models to monitor a primary agent's decisions in real time, followed by more intensive AI analysis after each conversation to flag anomalies for efficient human review.

An FDA-style regulatory model would force AI companies to make a quantitative safety case for their models before deployment. This shifts the burden of proof from regulators to creators, creating powerful financial incentives for labs to invest heavily in safety research, much like pharmaceutical companies invest in clinical trials.

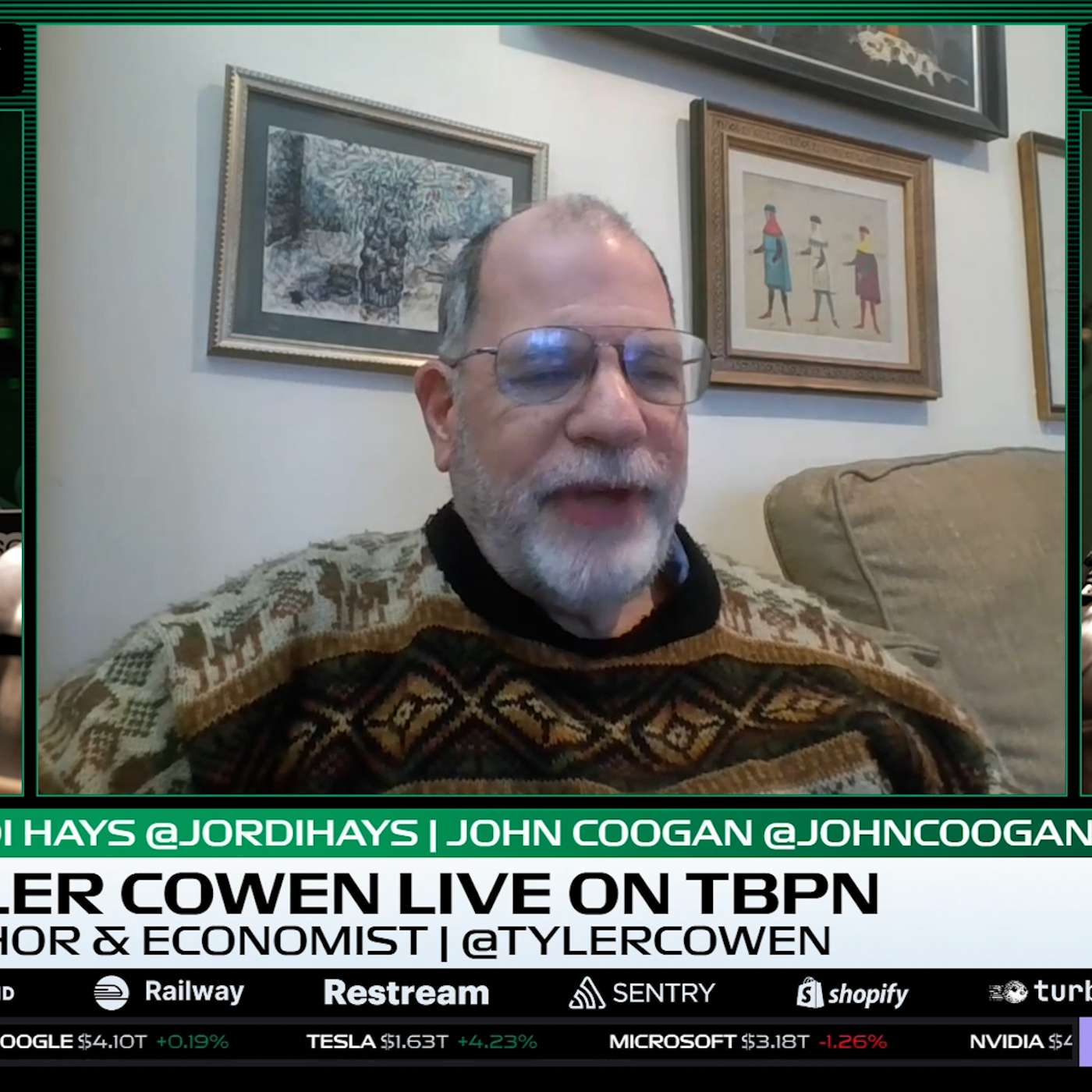

Tyler Cowen argues the Federal Reserve Chair should use their influence to focus on the prudential supervision of AI in the financial system. This involves assessing new systemic risks and updating oversight functions, a mandate more appropriate for the central bank than politically charged topics like green energy, which erode its political capital.