Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

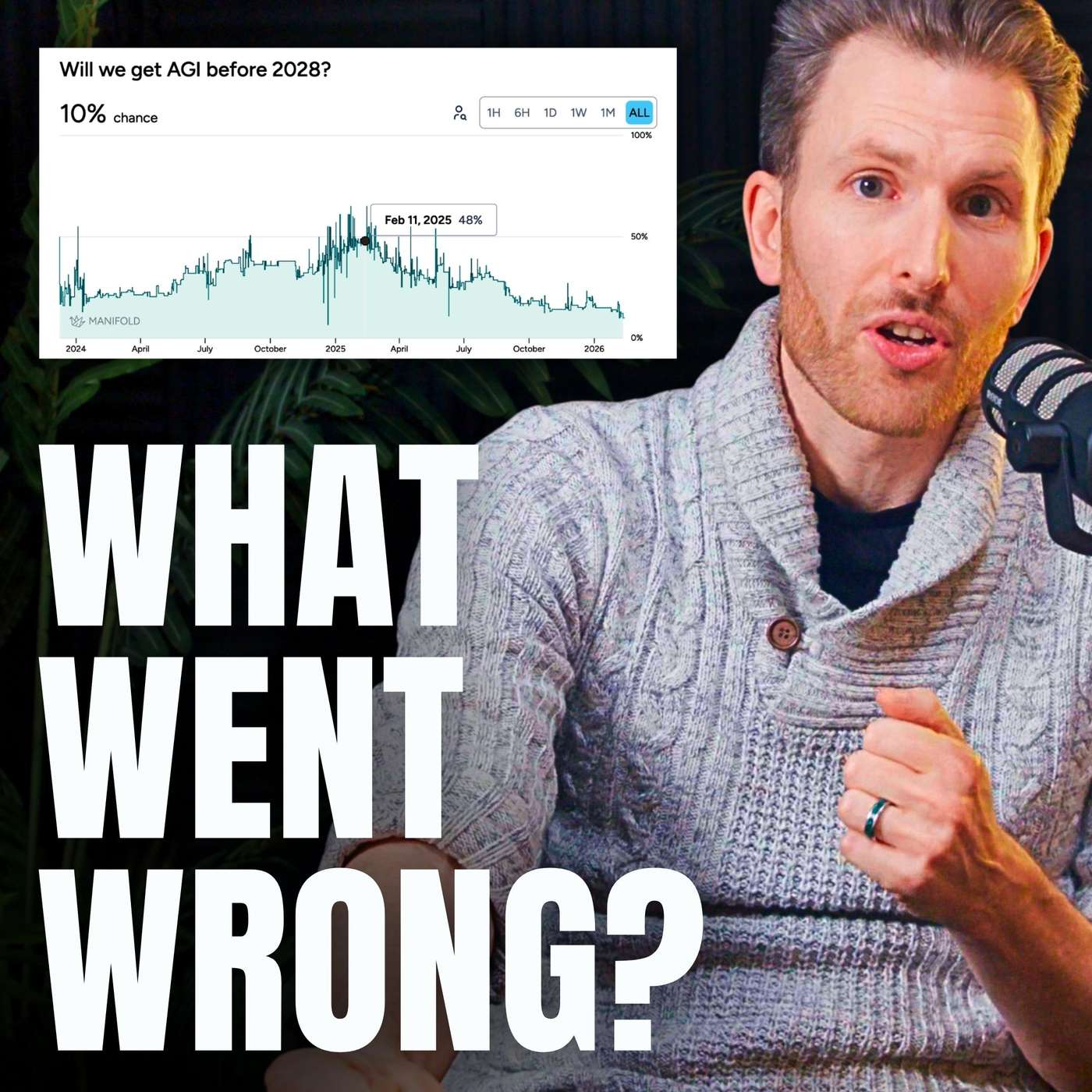

The belief that AI progress will be slow often stems from a strong prior that 'things are just always hard and slow.' This 'bottleneck objection' leads skeptics to assume unforeseen drag factors will always emerge, causing them to dismiss detailed scenarios for rapid acceleration without engaging with the specifics.

Related Insights

While AI's technical capabilities advance exponentially, widespread organizational adoption is slowed by human factors such as resistance to change, a lack of urgency, and a merely abstract grasp of what the tools can do. The result is a significant gap between AI's potential and its actual use.

While discourse often focuses on exponential growth, the AI Safety Report presents "progress stalls" as a serious scenario, comparing it to passenger aircraft speed, which plateaued after 1960. Continued rapid advancement is therefore not guaranteed: technical or resource bottlenecks could halt it.

Today's AI boom is fueled by scaling computation, which is a well-understood engineering challenge. The alternative, embedding nuanced, human-like inductive biases, is far harder because it requires a deep understanding of the problem space. This difficulty gap explains why massive models dominate AI development over more targeted, efficient ones: scaling is simply the more straightforward path.

The reason smart AI experts continue to disagree on outcomes, despite new evidence, is that they operate from fundamentally different paradigms. One camp sees "always another bottleneck," while the other sees a pattern of overcoming past limitations. New data is simply used to reinforce these pre-existing worldviews.

A growing gap exists between AI's performance in demos and its actual impact on productivity. As podcaster Dwarkesh Patel noted, AI models improve at the rapid rate short-term optimists predict but become useful only at the slower rate long-term skeptics predict, which explains the widespread disillusionment.

AI can produce scientific claims and codebases thousands of times faster than humans. However, the meticulous work of validating these outputs remains a human task. This growing gap between generation and verification could create a backlog of unproven ideas, slowing true scientific advancement.

Criticizing AI developers for being a few months off on predictions is a distraction. The underlying trend is one of exponential growth. Like criticizing Elon Musk's Mars timeline while ignoring his historic rocket launches, it's a failure to grasp the scale and direction of the technological shift that is already happening.

The true exponential acceleration toward AGI is currently limited by a human bottleneck: how fast we can prompt AI and, more importantly, how much of its work we can validate manually. Hockey-stick growth will begin only when AI can reliably validate its own output, closing the productivity loop.

Convergence is difficult because both camps in the AI speed debate have a narrative for why the other is wrong. Skeptics believe fast-takeoff proponents are naive storytellers who always underestimate real-world bottlenecks. Proponents believe skeptics generically invoke 'bottlenecks' without providing specific, insurmountable examples, thus failing to engage with the core argument.

Even if AI accelerates parts of a workflow like coding, overall progress might stall because of Amdahl's Law: the overall speedup is capped by the share of work that is not accelerated. Human-dependent tasks like strategic thinking could therefore become the new rate-limiting step.
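To make the Amdahl's Law argument concrete, here is a minimal sketch of the standard formula, speedup = 1 / ((1 - p) + p / s), where p is the fraction of work that gets faster and s is how much faster it gets. The function name `amdahl_speedup` and the assumption that coding makes up 50% of a workflow are hypothetical and chosen only for illustration.

```python
def amdahl_speedup(accelerated_fraction: float, factor: float) -> float:
    """Overall speedup when only `accelerated_fraction` of the work becomes `factor` times faster.

    Standard Amdahl's Law: 1 / ((1 - p) + p / s).
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be in [0, 1]")
    if factor <= 0:
        raise ValueError("factor must be positive")
    # The part that is NOT sped up, e.g. human-dependent tasks like strategic thinking.
    unaccelerated = 1.0 - accelerated_fraction
    return 1.0 / (unaccelerated + accelerated_fraction / factor)


if __name__ == "__main__":
    # Hypothetical workflow: assume coding is 50% of total time and AI speeds up only coding.
    for factor in (2, 10, 100, 1_000_000):
        print(f"coding {factor}x faster -> overall {amdahl_speedup(0.5, factor):.3f}x")
```

Under this assumed 50/50 split, the overall speedup approaches but never exceeds 2x, however fast the coding step becomes. The untouched half of the work sets the ceiling.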