Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

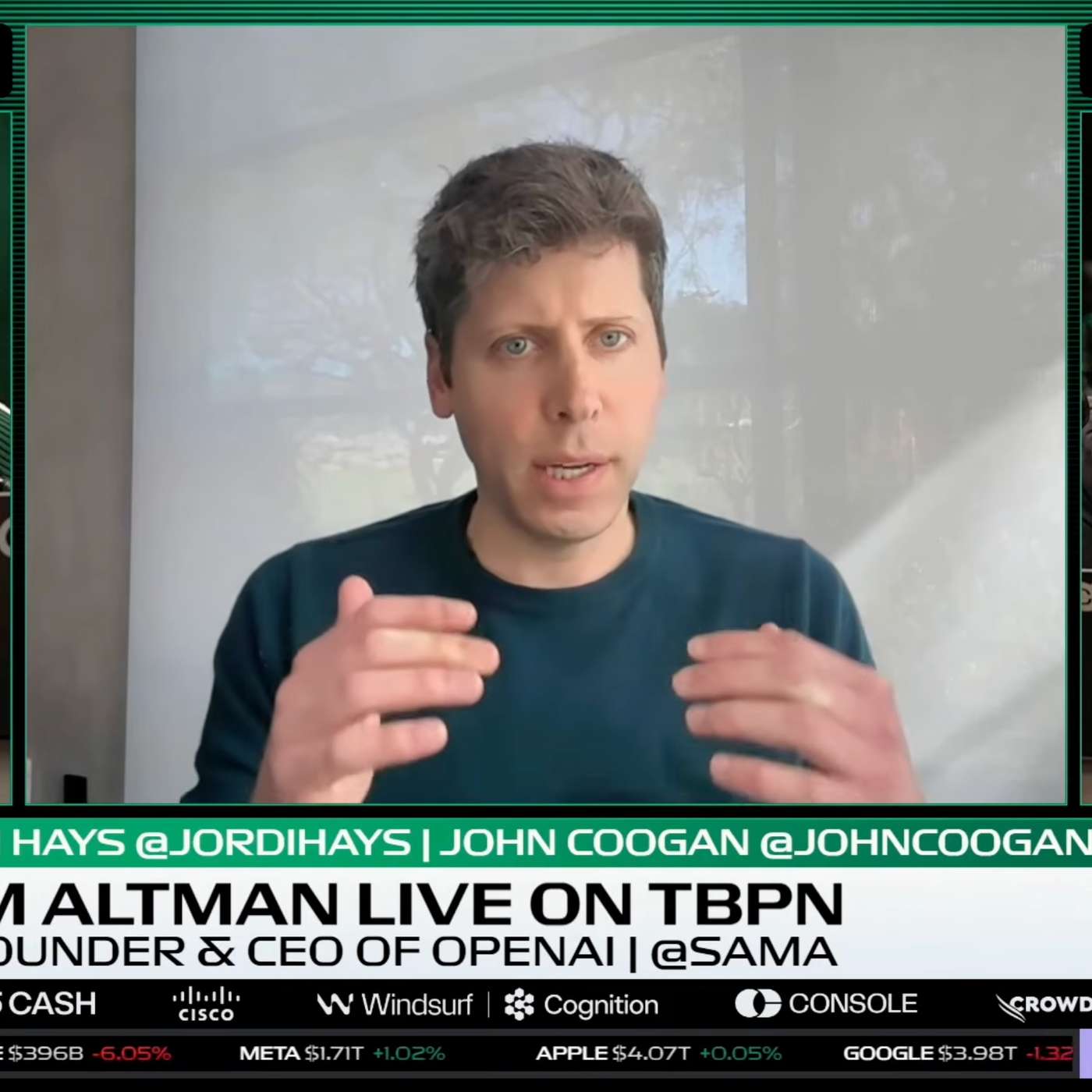

The current user experience for AI tools is too complex: users are forced to choose which model or mode to use. The next major step is a unified, consolidated interface where the AI handles resource allocation behind the scenes and simply delivers 'intelligence'.

Related Insights

The true power of the AI application layer lies in orchestrating multiple, specialized foundation models. Users want a single interface (like Cursor for coding) that intelligently routes tasks to the best model (e.g., Gemini for front-end, Codex for back-end), creating value through aggregation and workflow integration.
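As a rough illustration of the routing idea, the sketch below picks a backend per task from a single entry point. Everything in it is an assumption made for illustration: the model names (`frontend-model`, `backend-model`, `general-model`), the keyword lists, and the `pick_model` and `handle` helpers are hypothetical, and a real product would likely use a learned classifier or an LLM rather than keyword matching.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class Route:
    """A hypothetical routing rule: send tasks mentioning these keywords to this model."""
    model: str
    keywords: Tuple[str, ...]


# Placeholder model names and keywords, not real product identifiers.
ROUTES = (
    Route("frontend-model", ("css", "html", "react", "layout")),
    Route("backend-model", ("database", "sql", "api", "server")),
)
DEFAULT_MODEL = "general-model"


def pick_model(task: str) -> str:
    """Return the model whose keywords overlap most with the task text."""
    words = set(re.findall(r"[a-z]+", task.lower()))
    best, best_hits = DEFAULT_MODEL, 0
    for route in ROUTES:
        hits = len(words & set(route.keywords))
        if hits > best_hits:
            best, best_hits = route.model, hits
    return best


def handle(task: str, backends: Dict[str, Callable[[str], str]]) -> str:
    """Single entry point: the caller never chooses a model explicitly."""
    return backends[pick_model(task)](task)


if __name__ == "__main__":
    # Stub backends stand in for real model calls.
    stubs = {
        name: (lambda task, name=name: f"[{name}] {task}")
        for name in ("frontend-model", "backend-model", "general-model")
    }
    print(handle("Fix the CSS layout on the landing page", stubs))
    print(handle("Add an index to the SQL database", stubs))
    print(handle("Write a short poem", stubs))
```

The point of the design is that the user only ever calls `handle`; which model runs is an internal decision that can change without touching the interface.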

Instead of interacting with a single LLM, users will increasingly call an API that represents a "system as a model." Behind the scenes, this triggers a complex orchestration of multiple specialized models, sub-agents, and tools to complete a task, while maintaining a simple user experience.

Despite access to state-of-the-art models, most ChatGPT users defaulted to older versions. The cognitive load of using a "model picker" and uncertainty about speed/quality trade-offs were bigger barriers than price. Automating this choice is key to driving mass adoption of advanced AI reasoning.

AI models are already incredibly powerful, but their creative potential is limited by simple text prompts. The next breakthrough will be the development of sophisticated user interfaces that allow creators to edit scenes, control characters, and direct AI with precision, unlocking widespread adoption.

The best UI for an AI tool is a direct function of the underlying model's power. A more capable model unlocks more autonomous 'form factors.' For example, the sudden rise of CLI agents was only possible once models like Claude 3 became capable enough to reliably handle multi-step tasks.

Sam Altman argues there is a massive "capability overhang" where models are far more powerful than current tools allow users to leverage. He believes the biggest gains will come from improving user interfaces and workflows, not just from increasing raw AI intelligence.

An AI director's top request for AI labs is not more powerful models but more intuitive, human-centric user interfaces. The industry needs to move beyond simple text prompts and SaaSy dashboards to tools that offer artists fine-grained creative control and a more natural workflow.

The future of AI interaction won't be a multitude of specialized apps. Instead, it will likely converge into a smaller number of powerful, generalized input boxes that intelligently route user intent, much like the Chrome address bar or Google's main search page.

As foundational AI models become commoditized, the key differentiator is shifting from marginal improvements in model capability to superior user experience and productization. Companies that focus on polish, ease of use, and thoughtful integration will win, making product managers the new heroes of the AI race.

The developer abstraction layer is moving up from the model API to the agent. A generic interface for switching models is insufficient because it creates a 'lowest common denominator' product. Real power comes from tightly binding a specific model to an agentic loop with compute and file system access.