Earlier speech recognition tools failed at coding because they required precisely dictated syntax. Modern AI assistants interpret natural, conversational language instead: they infer the user's intent and translate it into code, with no need to dictate angle brackets or semicolons.

Related Insights

To fully express intent, AI applications cannot rely on a single modality. They need structured code for control flow, natural language for defining fuzzy tasks (like in DSPy's signatures), and example data for optimization and capturing long-tail behavior.
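A minimal sketch of how the three modalities might fit together, assuming a toy ticket-routing task. `TaskSpec`, `route_ticket`, and `fake_model` are hypothetical names invented for illustration; this is not DSPy's API, and the stub stands in for a real model call.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class TaskSpec:
    """Hypothetical container (not DSPy's API): a natural-language task
    description plus example input/output pairs."""
    instruction: str
    examples: List[Tuple[str, str]] = field(default_factory=list)

    def render_prompt(self, text: str) -> str:
        # Natural language defines the fuzzy task; examples pin down edge cases.
        shots = "\n".join(f"Input: {i}\nOutput: {o}" for i, o in self.examples)
        return f"{self.instruction}\n{shots}\nInput: {text}\nOutput:"


def route_ticket(text: str, spec: TaskSpec, model: Callable[[str], str]) -> str:
    """Structured code owns the control flow around the model call."""
    if not text.strip():
        return "ignored"  # deterministic rule, no model needed
    label = model(spec.render_prompt(text)).strip().lower()
    # Anything outside the known labels falls back to a safe default.
    return label if label in {"billing", "technical"} else "other"


def fake_model(prompt: str) -> str:
    # Stand-in for a real language model; inspects only the final input.
    last_input = prompt.rsplit("Input:", 1)[1]
    return "Billing" if "refund" in last_input.lower() else "Unsure"


spec = TaskSpec(
    instruction="Classify the support ticket as billing or technical.",
    examples=[("My card was charged twice", "billing")],
)
print(route_ticket("I want a refund", spec, fake_model))
```

In this sketch the branching and fallback live in ordinary code, the task itself is stated in plain English, and the example pairs are the part an optimizer could add to or swap out.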

As models become more powerful, the primary challenge shifts from improving capabilities to creating better ways for humans to specify what they want. Natural language is too ambiguous and code too rigid, creating a need for a new abstraction layer for intent.

The interface for AI agents is becoming nearly frictionless. By setting up a voice-to-voice loop via an app like Telegram, users can issue complex commands by simply holding down a button and speaking. This model removes the cognitive load of typing and makes interaction more natural and immediate.

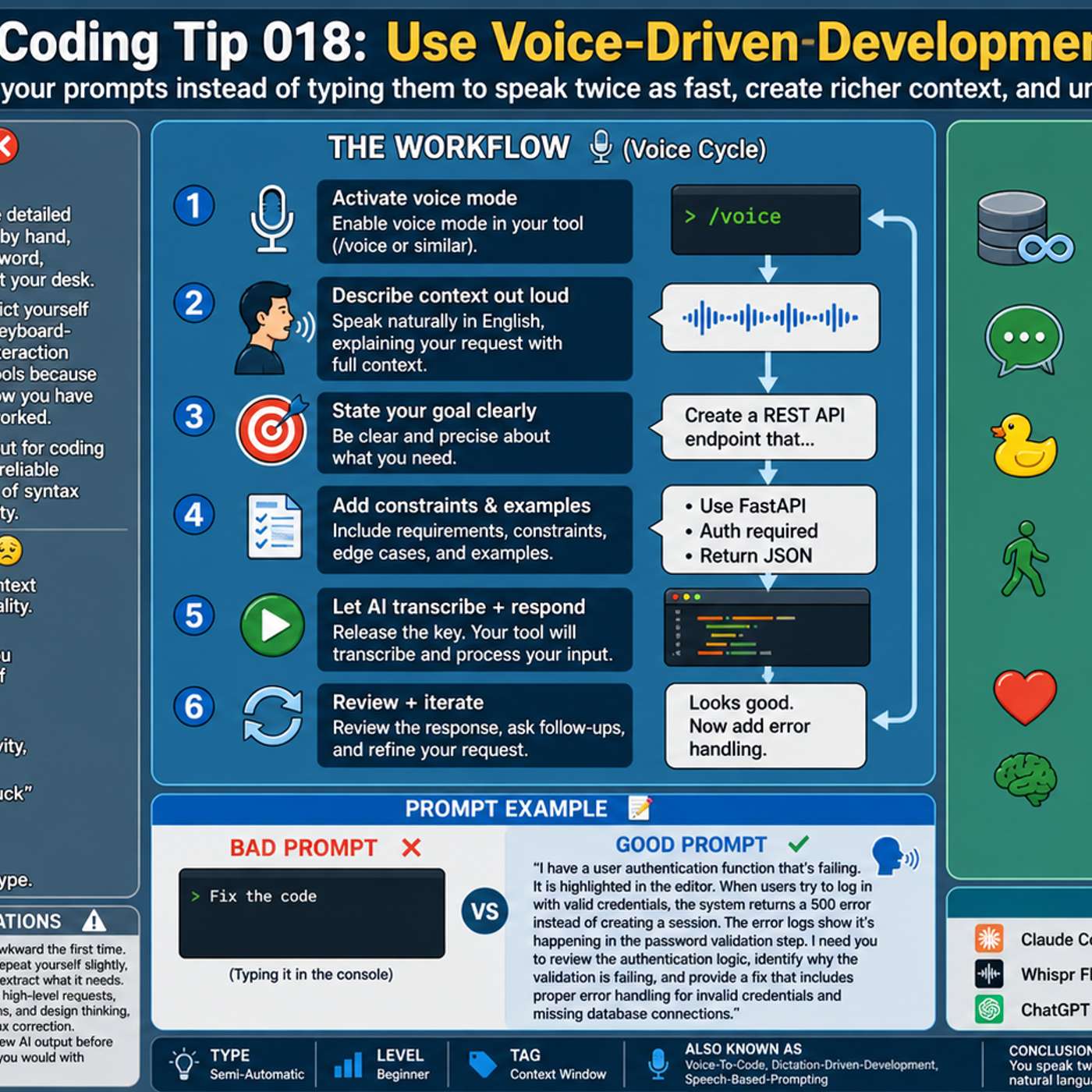

Dictating prompts for AI coding tools, rather than typing them, allows for faster and more detailed instructions. Speaking your thought process aloud naturally includes more context and nuance, which leads to better results from the AI. Tools like Wispr Flow are optimized with developer terminology for higher accuracy.

Unlike traditional programming, which demands extreme precision, modern AI agents operate from business-oriented prompts. Given a high-level goal and minimal context (like a single class name), an AI can infer intent and generate a complete, multi-file solution.

The initial magic of GitHub Copilot wasn't its accuracy but its profound understanding of natural language. Early versions had a code completion acceptance rate of only 20%, yet the moments when it correctly interpreted human intent were so powerful that they signaled a fundamental technology shift.

Jack Dorsey champions "vibe coding": using AI to generate code so that developers can operate at a higher level of abstraction. This shifts the focus from syntax (like semicolons) to orchestration, making software creation more accessible and freeing developers to be more creative.

AI development has evolved to the point where models can be directed using human-like language. Instead of relying on complex prompt engineering or fine-tuning, developers can provide instructions, documentation, and context in plain English to guide the AI's behavior, democratizing access to sophisticated outcomes.

In the near future, developers may no longer write code in languages like Python or JavaScript. AI, with its deep understanding of hardware, could translate human intent directly into binary, making infrastructure decisions like database selection entirely obsolete.

The paradigm for creating software has shifted from writing code to writing natural language. Founders report a new workflow: speaking English to an AI, which then writes English prompts for other programs to generate the final code. This fundamentally changes the nature of software engineering and productivity.