
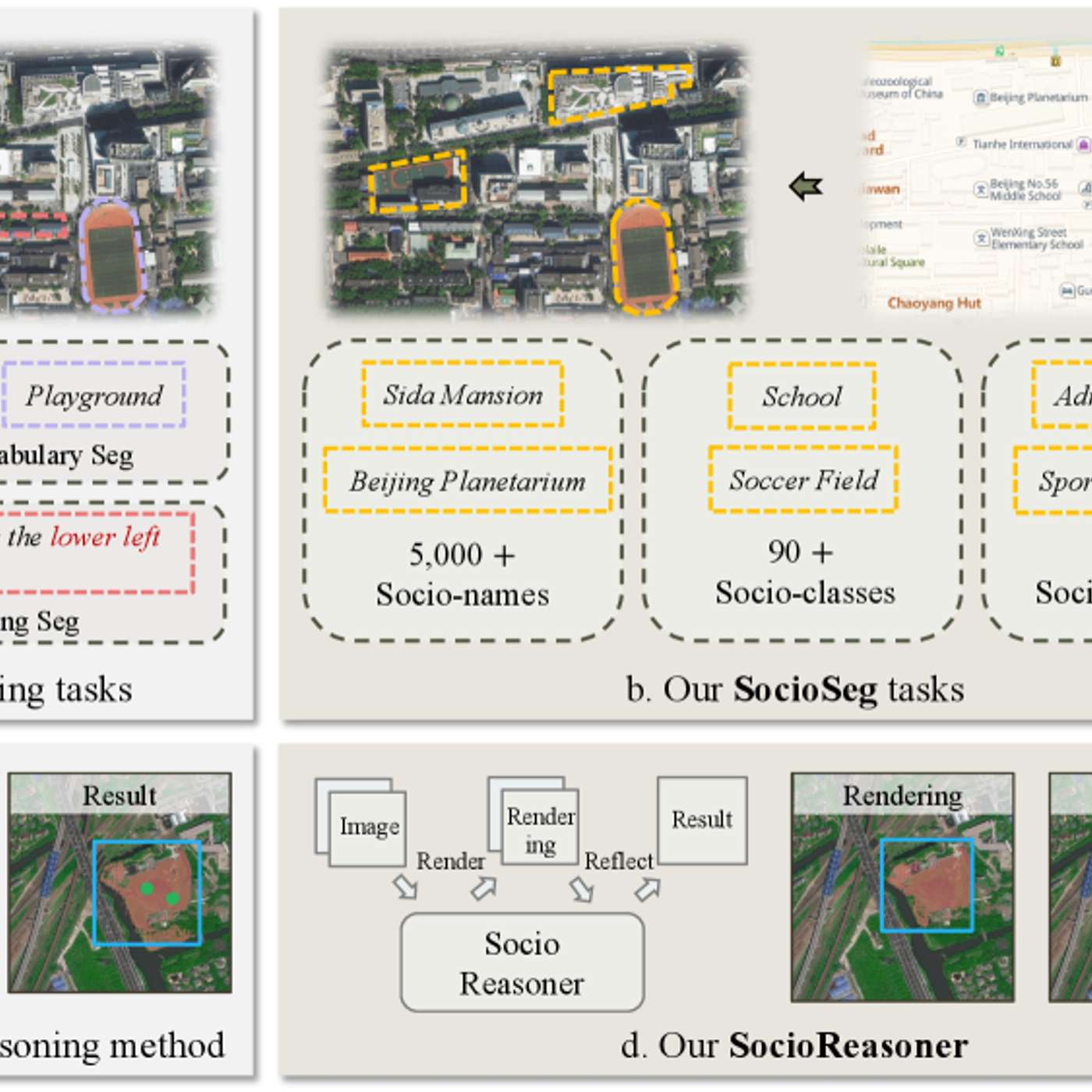

The researchers' failure case analysis is highlighted as a key contribution. Understanding why the model fails, whether from ambiguous data or unusual inputs, gives practitioners a realistic scope of application and a clear roadmap for improvement, which is more useful than high scores alone.

Related Insights

An AI agent's failure on a complex task like tax preparation isn't due to a lack of intelligence. Instead, it's often blocked by a single, unpredictable "tiny thing," such as misinterpreting two boxes on a W-4 form. This highlights that reliability challenges are granular and not always intuitive.

There's a significant gap between AI performance in simulated benchmarks and in the real world. Despite scoring highly on evaluations, AIs in real deployments make "silly mistakes that no human would ever dream of doing," suggesting that current benchmarks don't capture the messiness and unpredictability of reality.

The benchmark for AI performance shouldn't be perfection, but the existing human alternative. In many contexts, like medical reporting or driving, imperfect AI can still be vastly superior to error-prone humans. The choice is often between a flawed AI and an even more flawed human system, or no system at all.

Just as standardized tests fail to capture a student's full potential, AI benchmarks often don't reflect real-world performance. The true value comes from the 'last mile' ingenuity of productization and workflow integration, not just raw model scores, which can be misleading.

To check that their AI model wasn't finding effective drug delivery peptides by luck, researchers intentionally tested sequences the model predicted would perform poorly (negative controls). When experiments confirmed these predictions, it indicated that the model had genuinely learned the underlying chemical principles rather than overfitting.

The critical challenge in AI development isn't just improving a model's raw accuracy but building a system that reliably learns from its mistakes. The gap between an 85% accurate prototype and a 99% production-ready system is bridged by an infrastructure that systematically captures and recycles errors into high-quality training data.

When models achieve suspiciously high scores, it raises questions about benchmark integrity. Intentionally including impossible problems in benchmarks can serve as a flag to test an AI's ability to recognize unsolvable requests and refuse them, a crucial skill for real-world reliability and safety.
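One way to picture this idea is a scorer that reports refusals on impossible items separately from accuracy on solvable ones. The sketch below is illustrative only: the `Item` class, the `REFUSAL` sentinel, and the `score` function are hypothetical names, not taken from any particular benchmark, and it assumes answers can be compared by exact string match.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical sentinel a model is assumed to return when it declines to answer.
REFUSAL = "UNSOLVABLE"


@dataclass(frozen=True)
class Item:
    prompt: str
    expected: Optional[str]  # None marks a deliberately impossible problem


def score(items, answers):
    """Return accuracy on solvable items and refusal rate on impossible ones.

    A rate is None when the benchmark contains no items of that kind.
    """
    solvable_total = solvable_hits = 0
    impossible_total = refusals = 0
    for item, answer in zip(items, answers):
        if item.expected is None:
            impossible_total += 1
            if answer == REFUSAL:
                refusals += 1
        else:
            solvable_total += 1
            if answer == item.expected:
                solvable_hits += 1
    return {
        "solvable_accuracy": solvable_hits / solvable_total if solvable_total else None,
        "refusal_rate": refusals / impossible_total if impossible_total else None,
    }


items = [
    Item("What is 2 + 2?", "4"),
    Item("Name the largest prime number.", None),  # no correct answer exists
    Item("What is 3 * 3?", "9"),
]
answers = ["4", REFUSAL, "8"]
report = score(items, answers)
```

Keeping the two numbers separate means a model that confidently "solves" an impossible item shows up as a low refusal rate instead of being hidden inside a single headline score.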

When selecting foundational models, engineering teams often prioritize "taste" and predictable failure patterns over raw performance. A model that fails slightly more often but in a consistent, understandable way is more valuable and easier to build robust systems around than a top-performer with erratic, hard-to-debug errors.

For AI systems to be adopted in scientific labs, they must be interpretable. Researchers need to understand the 'why' behind an AI's experimental plan to validate and trust the process, making interpretability a more critical feature than raw predictive power.

Fine-tuning an AI model is most effective when you use high-signal data. The best source for this is the set of difficult examples where your system consistently fails. The processes of error analysis and evaluation naturally curate this valuable dataset, making fine-tuning a logical and powerful next step after prompt engineering.
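As a rough sketch of how evaluation results might be turned into such a dataset, the function below keeps only the examples that fail in most evaluation runs. The function name, the `(example_id, passed)` record shape, and the thresholds are assumptions made for illustration, not a description of any specific tool.

```python
from collections import defaultdict


def consistently_failing(results, min_fail_rate=0.8, min_runs=3):
    """Select example IDs that fail in at least `min_fail_rate` of their runs.

    `results` is an iterable of (example_id, passed) pairs collected across
    repeated evaluation runs. Examples seen fewer than `min_runs` times are
    skipped, since a single failure says little about consistency.
    """
    runs = defaultdict(int)
    fails = defaultdict(int)
    for example_id, passed in results:
        runs[example_id] += 1
        if not passed:
            fails[example_id] += 1
    return sorted(
        example_id
        for example_id in runs
        if runs[example_id] >= min_runs
        and fails[example_id] / runs[example_id] >= min_fail_rate
    )


eval_log = [
    ("a", False), ("a", False), ("a", False),  # fails every time
    ("b", True), ("b", False), ("b", True),    # flaky, mostly passes
    ("c", False),                              # too few runs to judge
]
hard_examples = consistently_failing(eval_log)
```

The selected IDs would then be paired with corrected outputs before being used as fine-tuning data; that labeling step is outside the scope of this sketch.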

![[State of Code Evals] After SWE-bench, Code Clash & SOTA Coding Benchmarks recap — John Yang thumbnail](https://assets.flightcast.com/V2Uploads/nvaja2542wefzb8rjg5f519m/01K4D8FB4MNA071BM5ZDSMH34N/square.jpg)