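For high-volume tasks like processing thousands of emails, standard function calling breaks down: every tool result has to pass through the model's context window, which quickly hits its limit. The solution is a sandboxed environment where the agent writes and executes code that calls tools programmatically, so large volumes of data are processed inside the sandbox rather than inside the context window.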
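
A minimal sketch of the data flow, under stated assumptions: `list_emails` is a hypothetical tool stub that fabricates messages, and a separate Python subprocess stands in for the sandbox. A subprocess is not a security boundary; the point is only that thousands of records stay inside the executing code while a small JSON summary returns to the model.

```python
import json
import subprocess
import sys
import textwrap

# Hypothetical tool stub exposed inside the sandbox (a real one would call an email API).
TOOL_PRELUDE = textwrap.dedent("""
    def list_emails(n):
        # Fake data: every third message is an invoice.
        return [{"id": i, "subject": "invoice" if i % 3 == 0 else "hello"} for i in range(n)]
""")

# Code the agent might write: iterate over thousands of emails in code
# and print only a compact summary instead of every record.
AGENT_CODE = textwrap.dedent("""
    import json
    emails = list_emails(5000)
    invoices = [e for e in emails if e["subject"] == "invoice"]
    print(json.dumps({"total": len(emails), "invoices": len(invoices)}))
""")


def run_in_sandbox(code: str, timeout_s: float = 10.0) -> dict:
    """Run code in a separate, isolated-mode interpreter and parse its JSON stdout.

    Stand-in only: production systems use a container or VM, not a bare subprocess.
    """
    result = subprocess.run(
        [sys.executable, "-I", "-c", TOOL_PRELUDE + code],
        capture_output=True, text=True, timeout=timeout_s, check=True,
    )
    return json.loads(result.stdout)


summary = run_in_sandbox(AGENT_CODE)
print(summary)  # only this small dict re-enters the model's context
```

Here 5,000 records are filtered in the sandbox and only `{'total': 5000, 'invoices': 1667}` comes back, instead of 5,000 tool results passing through the context window.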

Related Insights

As AI generates more code than humans can review, validation becomes the bottleneck. The solution is to give agents dedicated, sandboxed environments where they can run tests and verify functionality before a human sees the code, shifting review from process to outcome.
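
The validation step can be sketched as a gate: run the agent's code and its tests in a throwaway directory, and only surface code whose tests pass. The helper `validate_in_sandbox` and the file names are hypothetical, and a temporary directory plus a subprocess is only a stand-in for a properly isolated environment.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def validate_in_sandbox(source: str, tests: str, timeout_s: float = 30.0) -> bool:
    """Write agent code and tests to a fresh directory, run the tests, report pass/fail.

    Returns True only if the test process exits with status 0.
    """
    with tempfile.TemporaryDirectory() as workdir:
        Path(workdir, "solution.py").write_text(source)
        Path(workdir, "test_solution.py").write_text(tests)
        proc = subprocess.run(
            [sys.executable, "test_solution.py"],
            cwd=workdir, capture_output=True, text=True, timeout=timeout_s,
        )
        return proc.returncode == 0


good = "def add(a, b):\n    return a + b\n"
bad = "def add(a, b):\n    return a - b\n"
tests = "from solution import add\nassert add(2, 3) == 5\n"

for name, src in [("good", good), ("bad", bad)]:
    # Only passing code reaches a human; failures go back to the agent with the test output.
    verdict = "ready for human review" if validate_in_sandbox(src, tests) else "sent back to agent"
    print(name, "->", verdict)
```

The human then reviews an outcome (code that already passes its tests) rather than supervising each step the agent took to get there.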

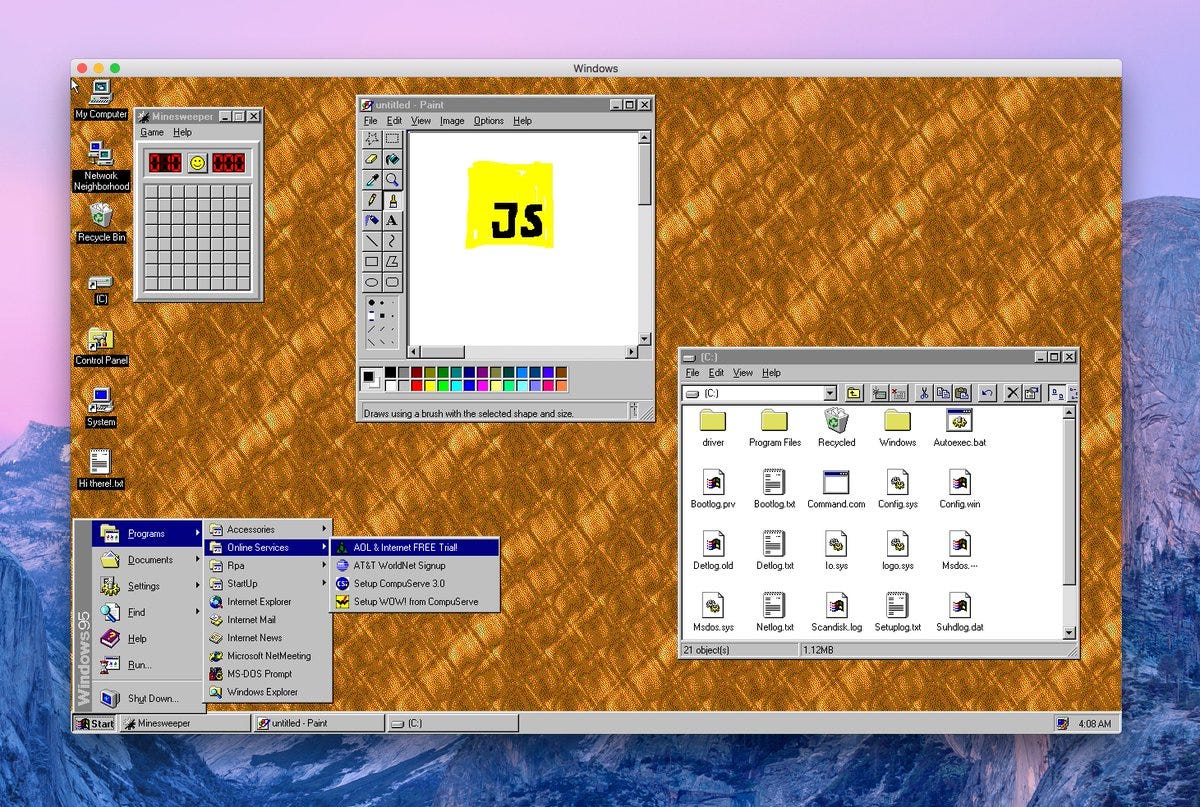

Cursor discovered that agents need more than just code access. Providing a full VM environment—a "brain in a box" where they can see pixels, run code, and use dev tools like a human—was the step-change needed to tackle entire features, not just minor edits.

To address security concerns, powerful AI agents should be provisioned like new human employees. This means running them in a sandboxed environment on a separate machine, with their own dedicated accounts, API keys, and access tokens, rather than on a personal computer.

AI agents present a UX problem: users must either grant risky, sweeping permissions or suffer "approval fatigue" from confirming every action. Sandboxing creates a middle ground: the agent can operate autonomously inside a secure environment, which makes it powerful without endangering the host system.
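
One way to express that middle ground is an approval policy that auto-approves anything confined to the sandbox and asks a human only about actions that reach outside it. This is a minimal sketch: the action kinds, the `/sandbox` mount point, and the `decide` function are all hypothetical, and a real sandbox enforces confinement at the OS or VM level rather than by string checks.

```python
import posixpath
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"  # proceed without interrupting the user
    ASK = "ask"      # pause and request human approval


@dataclass(frozen=True)
class Action:
    kind: str    # hypothetical action types, e.g. "write_file", "run_command", "http_request"
    target: str  # filesystem path or URL the action touches


SANDBOX_ROOT = "/sandbox"  # assumption: the agent's isolated filesystem is mounted here
LOCAL_KINDS = {"read_file", "write_file", "run_command"}


def decide(action: Action) -> Decision:
    """Auto-approve local actions confined to the sandbox; escalate everything else."""
    if action.kind in LOCAL_KINDS:
        # Normalize first so "/sandbox/../home" cannot escape via a naive prefix match.
        path = posixpath.normpath(action.target)
        if path == SANDBOX_ROOT or path.startswith(SANDBOX_ROOT + "/"):
            return Decision.ALLOW
    return Decision.ASK


for a in [
    Action("write_file", "/sandbox/app/main.py"),
    Action("run_command", "/sandbox/app"),
    Action("write_file", "/sandbox/../home/user/.ssh/config"),
    Action("http_request", "https://api.example.com/v1/charge"),
]:
    print(a.kind, a.target, "->", decide(a).value)
```

Routine work inside the sandbox never prompts the user, which avoids approval fatigue, while the two actions that would touch the host or an external service still require sign-off.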

Instead of giving an LLM hundreds of specific tools, a more scalable "cyborg" approach is to provide one tool: a sandboxed code execution environment. The LLM writes code against a company's SDK, which is more context-efficient, faster, and more flexible than multiple API round-trips.
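
A minimal sketch of the single-tool approach, assuming a hypothetical `ShopSDK` class as the company SDK. The tool definition uses a generic JSON-schema shape whose field names are illustrative rather than any specific provider's format, and `exec()` is used only to keep the example short: it is not a sandbox.

```python
import contextlib
import io


class ShopSDK:
    """Hypothetical company SDK the model writes code against."""

    def __init__(self) -> None:
        self._orders = {1: {"status": "shipped", "total": 40.0},
                        2: {"status": "pending", "total": 25.5},
                        3: {"status": "pending", "total": 10.0}}

    def list_orders(self) -> list:
        return list(self._orders)

    def get_order(self, order_id: int) -> dict:
        return self._orders[order_id]


# The only tool advertised to the model (illustrative schema, not a provider API).
EXECUTE_CODE_TOOL = {
    "name": "execute_code",
    "description": "Run Python with an `sdk` object in scope; print the result.",
    "input_schema": {"type": "object",
                     "properties": {"code": {"type": "string"}},
                     "required": ["code"]},
}


def execute_code(code: str) -> str:
    """Run model-written code with only `sdk` in scope and return its captured stdout.

    exec() is NOT isolation; a real deployment runs this inside a VM or container.
    """
    buf = io.StringIO()
    with contextlib.redirect_stdout(buf):
        exec(code, {"sdk": ShopSDK()})
    return buf.getvalue()


# One tool call replaces a list_orders call plus one get_order round-trip per order.
model_code = """
pending = [o for o in (sdk.get_order(i) for i in sdk.list_orders()) if o["status"] == "pending"]
print(len(pending), sum(o["total"] for o in pending))
"""
print(execute_code(model_code))
```

Only one schema occupies the context window regardless of how many SDK methods exist, and the loop over orders runs in code instead of as separate model turns.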

Simply giving an AI agent thousands of tools is counterproductive. The real value lies in an 'agentic tool execution layer' that provides just-in-time discovery and managed execution to prevent the agent from getting overwhelmed by its options.
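
Just-in-time discovery can be sketched as a search step that loads only the few tool definitions relevant to the current task. The catalog entries and `search_tools` are hypothetical, and word overlap is a deliberately simple stand-in for the embedding-based retrieval a production layer would more likely use.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str


# Hypothetical catalog; in practice this could hold thousands of entries.
CATALOG = [
    Tool("gmail_search", "search email messages in a mailbox"),
    Tool("gmail_send", "send an email message"),
    Tool("calendar_create_event", "create a calendar event or meeting"),
    Tool("crm_lookup_contact", "look up a customer contact in the CRM"),
    Tool("github_open_pr", "open a pull request on a repository"),
]


def _words(text: str) -> set:
    return set(re.findall(r"[a-z]+", text.lower()))


def search_tools(query: str, k: int = 2) -> list:
    """Return up to k tools whose name and description share the most words with the query."""
    q = _words(query)
    scored = [(len(q & _words(t.name + " " + t.description)), t) for t in CATALOG]
    scored = [(score, t) for score, t in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [t for _, t in scored[:k]]


# Only these few definitions are loaded into the model's context for this step.
print([t.name for t in search_tools("send an email to the customer")])
```

The agent sees two candidate tools instead of the whole catalog, which is the "prevent the agent from getting overwhelmed" part of the idea; managed execution would sit behind whichever tool it then picks.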

"Code Mode" is not an alternative to MCP but a more efficient way to use it. Instead of multiple sequential tool calls, the model generates a single script that executes multiple actions in a sandbox. MCP still provides the core benefits of authentication, discoverability, and a standardized, LLM-friendly API.
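
To illustrate how Code Mode can sit on top of MCP rather than replace it, here is a minimal sketch that turns tool listings (in the `name` / `description` / `inputSchema` shape MCP servers advertise) into callable functions. `fake_call_tool` is a hypothetical stand-in for an authenticated MCP client, and `exec()` again stands in for a real sandbox.

```python
from types import SimpleNamespace
from typing import Any, Callable

# Tool listings in the shape an MCP server advertises them.
TOOLS = [
    {"name": "get_weather", "description": "Current temperature in Celsius for a city",
     "inputSchema": {"type": "object", "properties": {"city": {"type": "string"}},
                     "required": ["city"]}},
    {"name": "convert_c_to_f", "description": "Convert Celsius to Fahrenheit",
     "inputSchema": {"type": "object", "properties": {"celsius": {"type": "number"}},
                     "required": ["celsius"]}},
]


def fake_call_tool(name: str, args: dict) -> Any:
    """Hypothetical stand-in for an authenticated MCP client sending a tools/call request."""
    if name == "get_weather":
        return {"Paris": 20, "Oslo": 5}[args["city"]]
    if name == "convert_c_to_f":
        return args["celsius"] * 9 / 5 + 32
    raise KeyError(name)


def build_api(tools: list, call_tool: Callable[[str, dict], Any]) -> SimpleNamespace:
    """Expose each tool as a keyword-argument function that still routes through call_tool."""
    def make(tool: dict) -> Callable[..., Any]:
        required = set(tool["inputSchema"].get("required", []))

        def fn(**kwargs: Any) -> Any:
            missing = required - set(kwargs)
            if missing:
                raise TypeError(f"{tool['name']} missing arguments: {sorted(missing)}")
            return call_tool(tool["name"], kwargs)

        fn.__doc__ = tool["description"]
        return fn
    return SimpleNamespace(**{t["name"]: make(t) for t in tools})


api = build_api(TOOLS, fake_call_tool)

# One model-written script chains four tool calls with no model turn in between.
script = "result = {c: api.convert_c_to_f(celsius=api.get_weather(city=c)) for c in ['Paris', 'Oslo']}"
scope = {"api": api}
exec(script, scope)  # exec() is not a sandbox; real systems isolate this step
print(scope["result"])  # {'Paris': 68.0, 'Oslo': 41.0}
```

Every call still goes through the MCP client, so authentication and discovery are unchanged; what changes is that the model emits one script instead of four sequential tool calls.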

The "tool calling in a loop" model for agents is deceptively simple. Without context management, token-heavy tool results accumulate quickly, leading to high costs ($1-2 per run), context-limit overflows, and the performance degradation known as "context rot."
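
The accumulation is easy to quantify with a simplified model: if every step re-sends the prompt plus all earlier tool results, total input tokens over N steps are N·P + T·N(N−1)/2 for prompt size P and tool-result size T, so they grow quadratically. The sizes below (20 steps, a 2,000-token prompt, 5,000-token tool results) are hypothetical, and the model ignores the agent's own generated text; `keep_last` illustrates one simple form of context management.

```python
from typing import Optional


def cumulative_input_tokens(steps: int, prompt_tokens: int, result_tokens: int,
                            keep_last: Optional[int] = None) -> int:
    """Total input tokens processed across a tool-calling loop (simplified model).

    Assumptions: each step re-sends the prompt plus earlier tool results, every
    tool result has the same size, and model-written text is ignored.
    keep_last, if set, keeps only the newest N tool results in context.
    """
    total = 0
    for step in range(steps):
        kept = step if keep_last is None else min(step, keep_last)
        total += prompt_tokens + kept * result_tokens
    return total


naive = cumulative_input_tokens(steps=20, prompt_tokens=2_000, result_tokens=5_000)
trimmed = cumulative_input_tokens(steps=20, prompt_tokens=2_000, result_tokens=5_000, keep_last=3)
print(naive, trimmed)  # 990000 310000
```

Multiplying these totals by a provider's per-token input price gives the per-run cost; in this toy setting, dropping all but the last three tool results cuts input tokens by roughly two thirds.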

The true capability of AI agents comes not just from the language model, but from having a full computing environment at their disposal. Vercel's internal data agent, D0, succeeds because it can write and run Python code, query Snowflake, and search the web within a sandbox environment.

A critical, non-obvious requirement for enterprise adoption of AI agents is the ability to contain their 'blast radius.' Platforms must offer sandboxed environments where agents can work without the risk of making catastrophic errors, such as deleting entire datasets—a problem that has reportedly already caused outages at Amazon.
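
One simple containment mechanism (swapped in here for illustration; it is not a full sandbox) is to run each agent-generated write inside a transaction and roll it back if it touches more rows than a set limit. The sketch uses Python's built-in `sqlite3` module; the `customers` table, its contents, and `run_with_blast_limit` are hypothetical.

```python
import sqlite3


def run_with_blast_limit(conn: sqlite3.Connection, sql: str, max_rows: int) -> int:
    """Run one agent-generated write in a transaction; roll back if it touches too many rows."""
    conn.execute("BEGIN")
    try:
        affected = conn.execute(sql).rowcount
        if affected > max_rows:
            raise RuntimeError(f"blocked: {affected} rows affected, limit is {max_rows}")
    except Exception:
        conn.execute("ROLLBACK")  # undo the oversized (or failed) write entirely
        raise
    conn.execute("COMMIT")
    return affected


# isolation_level=None puts the connection in autocommit mode, so the
# BEGIN/COMMIT/ROLLBACK statements above are issued explicitly.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT)")
conn.executemany("INSERT INTO customers (region) VALUES (?)", [("EU",), ("US",)] * 50)

print(run_with_blast_limit(conn, "DELETE FROM customers WHERE id = 7", max_rows=5))  # 1
try:
    run_with_blast_limit(conn, "DELETE FROM customers", max_rows=5)
except RuntimeError as err:
    print(err)  # blocked: 99 rows affected, limit is 5
print(conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0])  # 99
```

The targeted delete goes through, while the table-wide delete is rolled back before it can cause damage; a production system would combine a guard like this with isolated copies of the data.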