A simple method to detect a common type of real-time deepfake is to ask the person to place their fingers in front of their face. While the AI can generate realistic hands held separately, the complexity of overlaying them on the face often causes the model to glitch and break the illusion, providing a practical, low-tech verification test.

Related Insights

The development of advanced surveillance in China required training models to distinguish between real humans and synthetic media. This technological push inadvertently propelled deepfake and face detection advancements globally, which were then repurposed for consumer applications like AI-generated face filters.

Building reliable AI detectors is an endless arms race against ever-improving generative models, some of which (GANs, for example) already train against a built-in detector. A better approach is to use algorithmic feeds that filter out low-quality "slop" content based on user behavior, regardless of its origin.

The proliferation of deepfakes is a positive development because it democratizes media manipulation, which was previously exclusive to well-resourced entities. This widespread availability of synthetic media will force the public to become more skeptical of video evidence and less likely to form opinions based on short, decontextualized clips.

The 'aha' moment for Google's team was when the AI model accurately rendered their own faces. Judging consistency on unfamiliar faces is unreliable; the most stringent and meaningful evaluation comes from a person judging an AI-generated image of themselves.

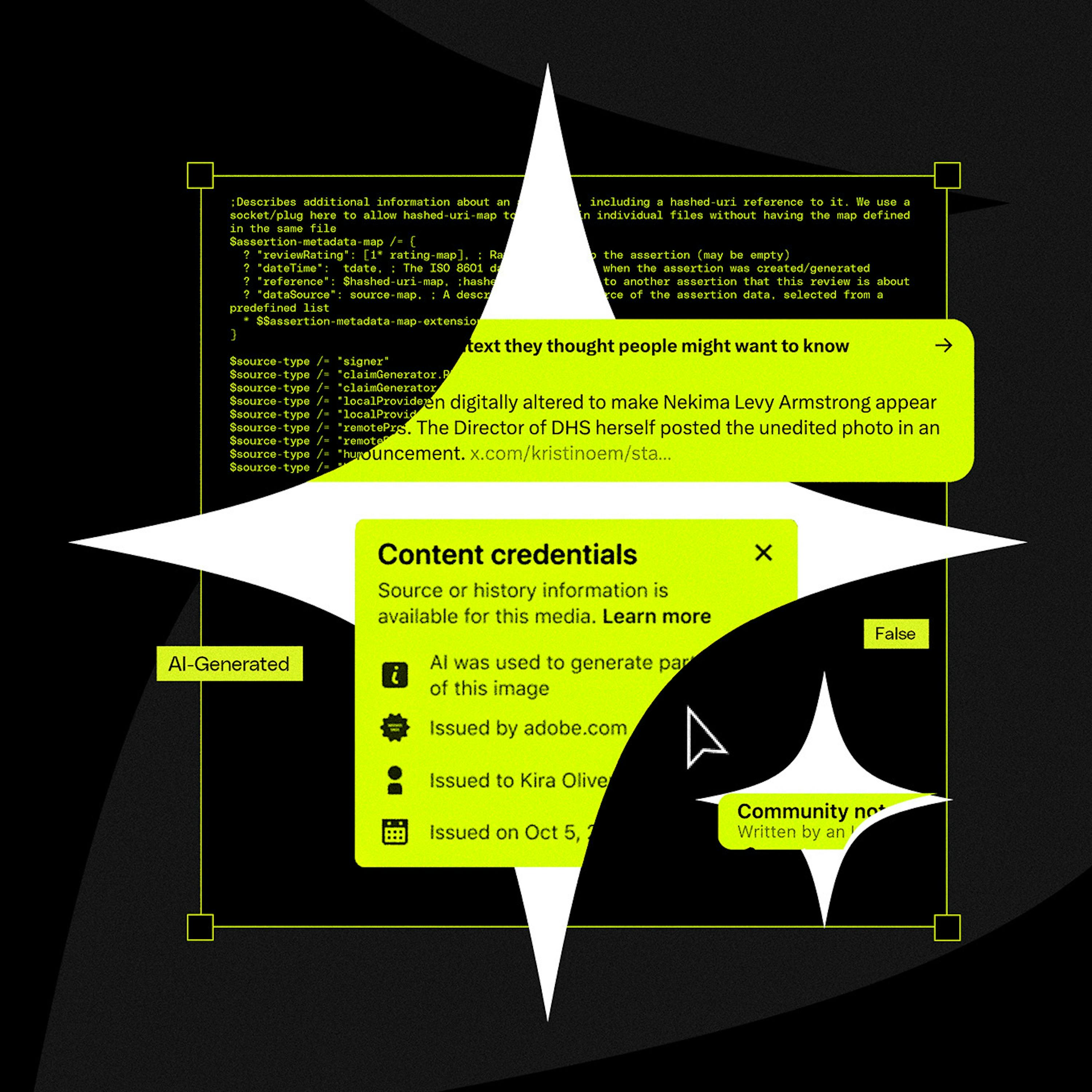

Politician Alex Bores argues that expecting humans to spot increasingly sophisticated deepfakes is a losing battle. The real solution is a universal metadata standard (like C2PA) that cryptographically proves whether content is real or AI-generated, making unverified content inherently suspect, much like an insecure HTTP website today.

The rapid advancement of AI-generated video will soon make it impossible to distinguish real footage from deepfakes. This will cause a societal shift, eroding the concept of 'video proof', which has been a cornerstone of trust for the past century.

As AI makes creating complex visuals trivial, audiences will become skeptical of content like surrealist photos or polished B-roll. They will increasingly assume it is AI-generated rather than the result of human skill, leading to lower trust and engagement.

Cryptographically signing media doesn't solve deepfakes because the vulnerability shifts to the user. Attackers use phishing tactics with nearly identical public keys or domains (a "Sybil problem") to trick human perception. The core issue is human error, not a lack of a technical solution.

C2PA was designed to track a file's provenance (creation, edits), not specifically to detect AI. This fundamental mismatch in purpose is why it's an ineffective solution for the current deepfake crisis, as it wasn't built to be a simple binary validator of reality.
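
To make the distinction between provenance and detection concrete, here is a minimal sketch of a hash-chained edit history. It is not the C2PA format: real C2PA manifests are cryptographically signed, and this toy omits signatures entirely. The function names (`append_entry`, `chain_is_intact`) and the tool names are hypothetical, chosen only for illustration.

```python
import hashlib
import json


def entry_digest(entry: dict) -> str:
    """Stable SHA-256 digest of one provenance entry."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


def append_entry(chain: list, action: str, tool: str, content: bytes) -> list:
    """Return a new chain with one more entry linked to the previous one."""
    entry = {
        "action": action,
        "tool": tool,  # hypothetical tool name, self-reported
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "prev": entry_digest(chain[-1]) if chain else None,
    }
    return chain + [entry]


def chain_is_intact(chain: list, final_content: bytes) -> bool:
    """Check the links between entries and that the last entry matches the file.

    This says the recorded history is consistent. It says nothing about
    whether the content depicts reality.
    """
    if not chain:
        return False
    for i, entry in enumerate(chain):
        expected_prev = entry_digest(chain[i - 1]) if i > 0 else None
        if entry["prev"] != expected_prev:
            return False
    return chain[-1]["content_sha256"] == hashlib.sha256(final_content).hexdigest()


# A photo that was captured and then cropped.
camera = append_entry([], "created", "hypothetical-camera", b"raw pixels")
camera = append_entry(camera, "cropped", "hypothetical-editor", b"cropped pixels")

# A fully synthetic image with an equally consistent history.
generated = append_entry([], "created", "hypothetical-image-generator", b"synthetic pixels")

print(chain_is_intact(camera, b"cropped pixels"))       # consistent history
print(chain_is_intact(generated, b"synthetic pixels"))  # also consistent
print(chain_is_intact(camera, b"tampered pixels"))      # file no longer matches
```

Both the camera photo and the synthetic image pass the check, because the check only confirms that the recorded history is internally consistent and matches the file. That is the mismatch described above: a provenance record answers "where did this file come from and how was it edited," not "is this real."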

A significant societal risk is the public's inability to distinguish sophisticated AI-generated videos from reality. This creates fertile ground for political deepfakes to influence elections, a problem made worse by social media platforms that don't enforce clear "Made with AI" labeling.