The proliferation of deepfakes is a positive development because it democratizes media manipulation, which was previously exclusive to well-resourced entities. This widespread availability of synthetic media will force the public to become more skeptical of video evidence and less likely to form opinions based on short, decontextualized clips.

Related Insights

Instagram head Adam Mosseri’s public statement that we can no longer assume photos or videos are real marks a pivotal shift. He suggests moving from a default of trust to a default of skepticism, effectively admitting that platforms have lost the war on deepfakes and shifting the burden of verification onto users.

The modern information landscape is saturated with AI-generated propaganda from all sides. It is no longer sufficient to be skeptical of foreign adversaries; one must actively question and verify information from domestic governments as well, as all parties use these tools to shape narratives.

As AI makes real and fake online content impossible to tell apart (a fear captured by the 'dead internet theory'), society will be forced to question reality itself. This skepticism is ultimately beneficial, as it will lead people to place a higher value on tangible, verifiable experiences like physical touch, nature, and in-person connection, which cannot be digitally replicated.

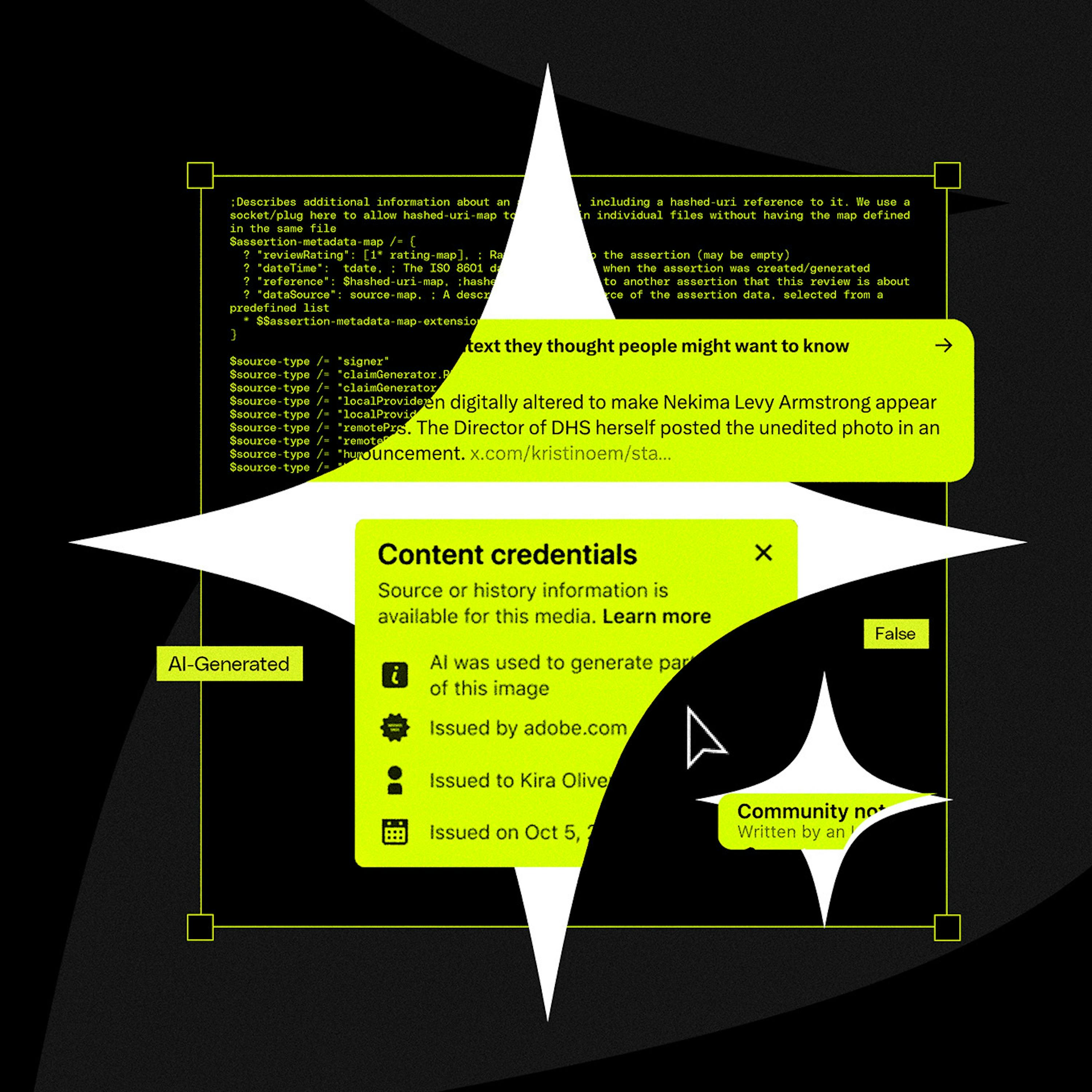

As AI begins to create simulations indistinguishable from reality, technological solutions for verification will fail. Survival in this new era depends on developing critical literacy: the human ability to evaluate sources, understand bias, and question all narratives.

The modern media ecosystem is defined by the decomposition of truth. From AI-generated fake images to conspiracy theories blending real and fake documents on X, people are becoming accustomed to an environment where discerning absolute reality is difficult and are willing to live with that ambiguity.

The rise of convincing AI-generated deepfakes will soon make video and audio evidence unreliable. The solution will be the blockchain, a decentralized, unalterable ledger. Content will be "minted" on-chain to provide a verifiable, timestamped record of authenticity that no single entity can control or manipulate.

Counterintuitively, as AI makes it easy to fake any video or audio, the power of "gotcha" recordings will diminish. The plausible deniability of "it could be a deepfake" may free people from the social surveillance state created by smartphone cameras.

The rapid advancement of AI-generated video will soon make it impossible to distinguish real footage from deepfakes. This will cause a societal shift, eroding the concept of 'video proof' which has been a cornerstone of trust for the past century.

As AI makes creating complex visuals trivial, audiences will become skeptical of content like surrealist photos or polished B-roll. They will increasingly assume it is AI-generated rather than the result of human skill, leading to lower trust and engagement.

As social media and search results become saturated with low-quality, AI-generated content (dubbed "slop"), users may develop a stronger preference for reliable information. This "sloptimism" suggests the degradation of the online ecosystem could inadvertently drive a rebound in trust for established, human-curated news organizations as a defense against misinformation.