The rise of photorealistic, real-time deepfakes will make it impossible to trust who you're speaking with on video calls. Platforms like Zoom will need a "proof of human" layer, especially for high-value conversations such as financial transactions, where impersonation poses a significant threat.

Related Insights

As AI makes it easy to fake video and audio, blockchain's immutable and decentralized ledger offers a solution. Creators can 'mint' their original content, creating a verifiable record of authenticity that nobody, not even governments or corporations, can alter.
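The tamper-evidence behind "minting" can be illustrated with a hash chain. This is a minimal, in-memory sketch and not a real blockchain. It has no network, no consensus, and no decentralization, and the names `Ledger`, `mint`, `verify_chain`, and `is_registered` are hypothetical. Each record stores the SHA-256 fingerprint of a piece of content, a timestamp, and the previous record's hash. Editing any earlier record is therefore detected unless every later hash is also rewritten. In a real blockchain, the copies held by other nodes are what make that rewrite impractical.

```python
import hashlib
import json
import time
from typing import List, Optional

GENESIS_PREV_HASH = "0" * 64  # placeholder "previous hash" for the first block


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _block_hash(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical fields always hash identically.
    return sha256_hex(json.dumps(body, sort_keys=True).encode())


class Ledger:
    """Hypothetical append-only, hash-chained log of content fingerprints."""

    def __init__(self) -> None:
        self.blocks: List[dict] = []

    def mint(self, content: bytes, timestamp: Optional[float] = None) -> dict:
        body = {
            "index": len(self.blocks),
            "content_hash": sha256_hex(content),  # fingerprint only, not the media
            "timestamp": time.time() if timestamp is None else timestamp,
            "prev_hash": self.blocks[-1]["block_hash"] if self.blocks else GENESIS_PREV_HASH,
        }
        block = {**body, "block_hash": _block_hash(body)}
        self.blocks.append(block)
        return block

    def verify_chain(self) -> bool:
        # Recompute every link; an edited block fails here unless every
        # later hash was rewritten too.
        prev = GENESIS_PREV_HASH
        for i, block in enumerate(self.blocks):
            body = {k: v for k, v in block.items() if k != "block_hash"}
            if block["index"] != i or block["prev_hash"] != prev:
                return False
            if _block_hash(body) != block["block_hash"]:
                return False
            prev = block["block_hash"]
        return True

    def is_registered(self, content: bytes) -> bool:
        digest = sha256_hex(content)
        return any(b["content_hash"] == digest for b in self.blocks)


if __name__ == "__main__":
    ledger = Ledger()
    ledger.mint(b"original interview footage", timestamp=1.0)
    ledger.mint(b"follow-up clip", timestamp=2.0)
    print(ledger.verify_chain())                                # True
    print(ledger.is_registered(b"original interview footage"))  # True
    print(ledger.is_registered(b"deepfaked footage"))           # False
    ledger.blocks[0]["content_hash"] = sha256_hex(b"deepfaked footage")
    print(ledger.verify_chain())                                # False
```

A hash match only shows that these exact bytes were registered at a given time. Any re-encode or crop produces a different hash. Registration alone also says nothing about whether the footage was genuine when it was minted.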

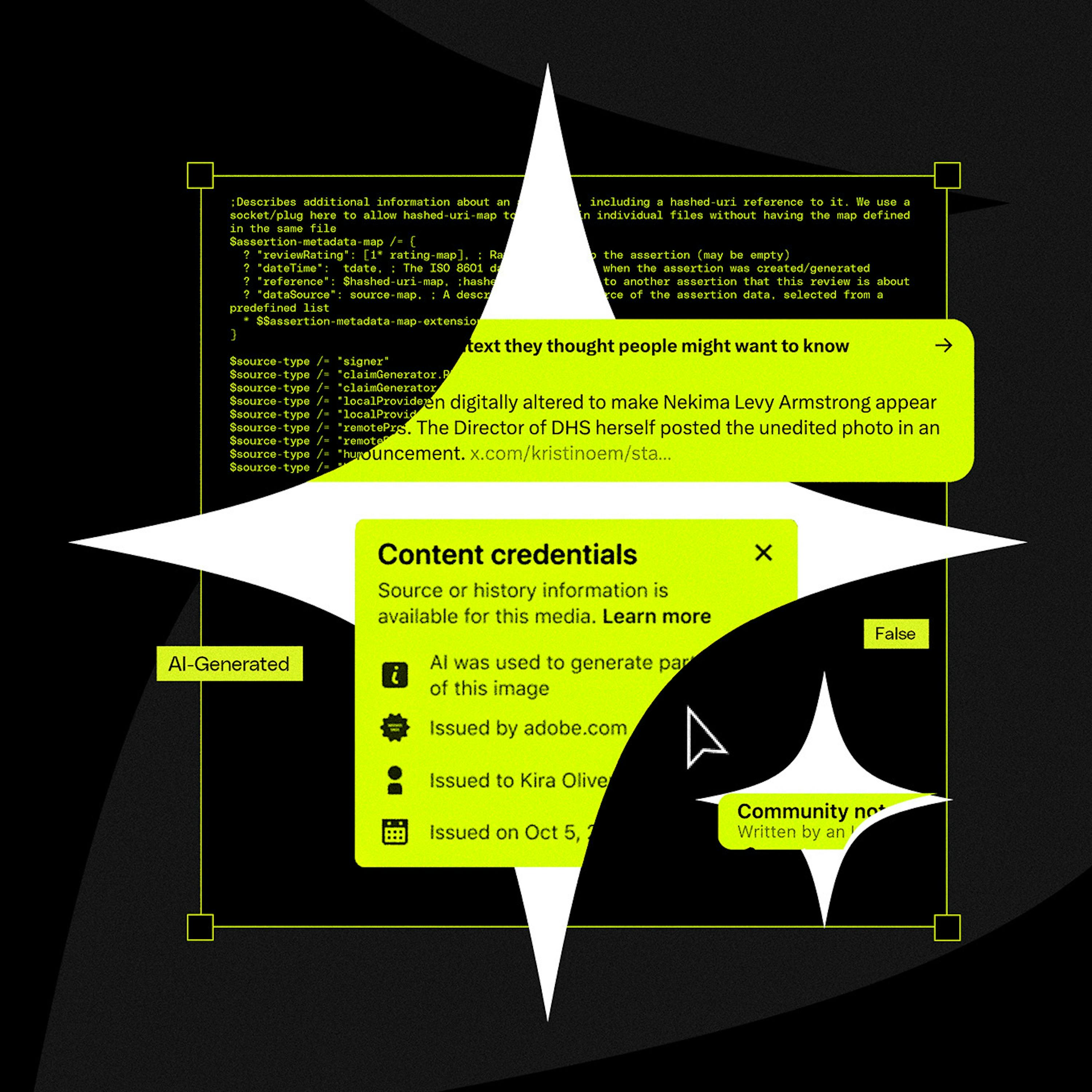

Instagram head Adam Mosseri’s public statement that we can no longer assume photos or videos are real marks a pivotal shift. He suggests moving from a default of trust to a default of skepticism. That stance effectively admits that platforms have lost the war on deepfakes, and it shifts the burden of verification onto users.

For AI agents, impersonation is the key vulnerability, paralleling hallucinations in LLMs. Malicious agents could pose as legitimate entities to take unauthorized actions, such as infiltrating banking systems. This is a critical, emerging attack vector that security teams must anticipate.

A simple way to detect a common type of real-time deepfake is to ask the person to place their fingers in front of their face. The model can generate realistic hands held away from the face. Overlaying those hands on the face, however, often makes it glitch and break the illusion, which makes this a practical, low-tech verification test.

The rise of AI, which can generate endless fake content, creates strong demand for crypto's core function: providing verifiable truth. Crypto wallets, digital signatures, and proof-of-human systems become critical infrastructure for proving authenticity in an AI-saturated world. In effect, AI subsidizes the demand for crypto.

Politician Alex Bores argues that expecting humans to spot increasingly sophisticated deepfakes is a losing battle. The real solution, he says, is a universal metadata standard (such as C2PA) that cryptographically proves whether content is real or AI-generated. Under such a standard, unverified content becomes inherently suspect, much like an insecure HTTP website today.
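The idea of a signed provenance record can be sketched in a few lines. This is not the C2PA format or any C2PA library API. Real C2PA manifests are bound to the file and signed with certificate-backed public-key signatures. This sketch assumes only the Python standard library, so it uses an HMAC-SHA256 tag with a shared key as a stand-in. The key, the manifest field names, and the `classify` labels are all hypothetical.

What the sketch does show is the three-way outcome the paragraph describes:

- content whose manifest checks out;
- content whose manifest no longer matches;
- content with no manifest at all, which is treated as suspect by default.

```python
import hashlib
import hmac
import json
from typing import Optional

# ASSUMPTION: a shared HMAC key stands in for the private signing key a real
# camera or AI generator would hold. Never hard-code keys in practice.
DEMO_KEY = b"hypothetical-device-key"


def _canonical(fields: dict) -> bytes:
    return json.dumps(fields, sort_keys=True).encode()


def make_manifest(content: bytes, generator: str, ai_generated: bool, key: bytes) -> dict:
    """Bind a content hash and an origin claim together under one tag."""
    fields = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "generator": generator,        # hypothetical field: which tool produced it
        "ai_generated": ai_generated,  # hypothetical field: the origin claim
    }
    tag = hmac.new(key, _canonical(fields), hashlib.sha256).hexdigest()
    return {**fields, "signature": tag}


def classify(content: bytes, manifest: Optional[dict], key: bytes) -> str:
    if manifest is None:
        return "unverified"  # no provenance at all: suspect by default
    fields = {k: v for k, v in manifest.items() if k != "signature"}
    expected = hmac.new(key, _canonical(fields), hashlib.sha256).hexdigest()
    claimed = str(manifest.get("signature", ""))
    if not hmac.compare_digest(expected.encode(), claimed.encode()):
        return "tampered"  # the claim itself was altered after signing
    if fields.get("content_sha256") != hashlib.sha256(content).hexdigest():
        return "tampered"  # the content bytes changed after signing
    return "ai-generated" if fields.get("ai_generated") else "captured"


if __name__ == "__main__":
    photo = b"raw sensor bytes"
    m = make_manifest(photo, "ExampleCam", ai_generated=False, key=DEMO_KEY)
    print(classify(photo, m, DEMO_KEY))         # captured
    print(classify(photo + b"!", m, DEMO_KEY))  # tampered
    print(classify(photo, None, DEMO_KEY))      # unverified
    fake = b"diffusion output"
    m2 = make_manifest(fake, "ExampleGen", ai_generated=True, key=DEMO_KEY)
    print(classify(fake, {**m2, "ai_generated": False}, DEMO_KEY))  # tampered
```

With HMAC, anyone who can verify a tag can also forge one. That is why real provenance systems use public-key signatures: the capture device or generator keeps a private key, and everyone else verifies against a published certificate.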

Traditional identity methods such as government IDs, "web of trust" social graphs, and facial biometrics are inadequate for a global proof-of-human system. Each falls short on scalability or privacy, or is vulnerable to sophisticated AI that can mimic human behavior and fabricate trust networks.

The rise of convincing AI-generated deepfakes will soon make video and audio evidence unreliable. The solution will be the blockchain, a decentralized, unalterable ledger. Content will be "minted" on-chain to provide a verifiable, timestamped record of authenticity that no single entity can control or manipulate.

The rapid advancement of AI-generated video will soon make it impossible to distinguish real footage from deepfakes. This will cause a societal shift, eroding the concept of 'video proof,' which has been a cornerstone of trust for the past century.

To combat bots without compromising its core value of anonymity, Reddit is exploring human verification. CEO Steve Huffman identifies passkeys (typically unlocked with Face ID or Touch ID) as a key technology. They require a physical human presence to authenticate, proving a person is "in seat" without revealing their real-world identity.
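The property Huffman points to comes from the challenge-response design of passkeys, as defined in the WebAuthn standard: a key-holding person proves they are present without revealing who they are. The sketch below is a toy built on loudly stated assumptions:

- Textbook RSA with fixed, far-too-small Mersenne primes stands in for the elliptic-curve keys real authenticators typically use.
- The biometric check is modeled as a boolean.
- The `Authenticator` and `Server` classes are hypothetical.

The server stores only a public key under a pseudonymous username. It sends a fresh random challenge and accepts a signature that only the matching private key could produce.

```python
import hashlib
import secrets
from typing import Dict, Tuple

# TOY, NOT SECURE: textbook RSA over two fixed Mersenne primes (2**89 - 1 and
# 2**107 - 1). Every Authenticator below therefore shares one key pair; a real
# authenticator generates a fresh key pair per site and keeps it in hardware.
_P, _Q = 2**89 - 1, 2**107 - 1
N, E = _P * _Q, 65537


def _digest(challenge: bytes) -> int:
    # Hash the challenge and map it into the RSA group [0, N).
    return int.from_bytes(hashlib.sha256(challenge).digest(), "big") % N


class Authenticator:
    """Hypothetical device: the private exponent never leaves this object."""

    def __init__(self) -> None:
        self._d = pow(E, -1, (_P - 1) * (_Q - 1))  # modular inverse, Python 3.8+
        self.public_key: Tuple[int, int] = (N, E)

    def sign(self, challenge: bytes, biometric_ok: bool) -> int:
        # Models "user verification": no local biometric match, no signature.
        if not biometric_ok:
            raise PermissionError("user verification failed")
        return pow(_digest(challenge), self._d, N)


class Server:
    """Hypothetical relying party: stores public keys, never a real-world identity."""

    def __init__(self) -> None:
        self.accounts: Dict[str, Tuple[int, int]] = {}
        self.pending: Dict[str, bytes] = {}

    def register(self, username: str, public_key: Tuple[int, int]) -> None:
        self.accounts[username] = public_key

    def issue_challenge(self, username: str) -> bytes:
        challenge = secrets.token_bytes(32)  # fresh per login, so old signatures can't be replayed
        self.pending[username] = challenge
        return challenge

    def verify(self, username: str, signature: int) -> bool:
        challenge = self.pending.pop(username, None)  # each challenge is single-use
        if challenge is None or username not in self.accounts:
            return False
        n, e = self.accounts[username]
        return pow(signature, e, n) == _digest(challenge)


if __name__ == "__main__":
    device, server = Authenticator(), Server()
    server.register("anon_4821", device.public_key)
    sig = device.sign(server.issue_challenge("anon_4821"), biometric_ok=True)
    print(server.verify("anon_4821", sig))  # True
    print(server.verify("anon_4821", sig))  # False (challenge already used)
```

The server never sees a name, a face, or a biometric template. It learns only that the holder of the registered key approved this particular challenge after a local check on the device.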