The enormous compute budget for the original AlphaGo was not about finding the most efficient training method, but about proving a method could work at all. Once a breakthrough is made and the path is clear, subsequent efforts can focus on optimization and achieve similar results with far less compute.

Related Insights

A 10x increase in compute may only yield a one-tier improvement in model performance. This appears inefficient but can be the difference between a useless "6-year-old" intelligence and a highly valuable "16-year-old" intelligence, unlocking entirely new economic applications.

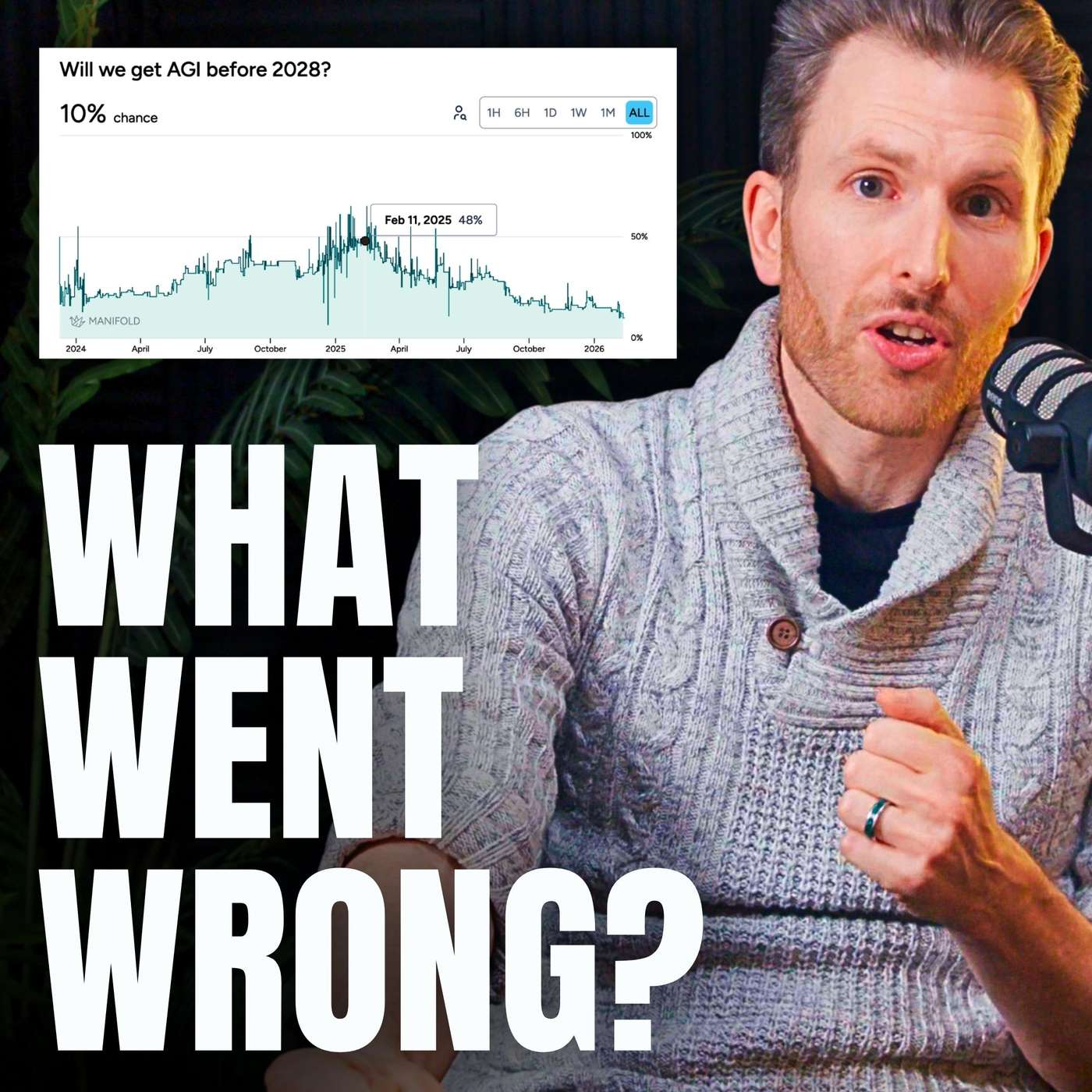

A slowdown in compute growth may have a compounding, roughly squared, negative effect on AI progress. It reduces the resources available for training larger models and also slows the discovery of new algorithms, since breakthroughs like the Transformer required immense compute for experimentation. This double impact could significantly delay major capability milestones.
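
One way to read "squared" here is through a toy model. This is an assumption made for illustration, not something the source states: suppose progress $P$ is the product of a training-scale term and an algorithmic-discovery term, and that each grows in proportion to available compute $C$.

$$
P \;\propto\; \underbrace{C}_{\text{training scale}} \times \underbrace{C}_{\text{algorithmic discovery}} \;=\; C^{2}
$$

Under that assumption, if compute reaches only a fraction $s$ of what was expected, progress falls to $s^{2}$ of the expected level. For example, $s = 0.5$ gives $s^{2} = 0.25$.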

The progression from early neural networks to today's massive models is fundamentally driven by the exponential increase in available computational power, from the initial move to GPUs to the roughly million-fold growth in the compute used to train a single model.

Breakthroughs like neural network "pruning" can reduce model size by 90% with little or no loss of accuracy, offering up to a 10x reduction in inference costs. This highlights that algorithmic innovation, not just acquiring more hardware, will be a key competitive vector in the AI race, enabling more output with less energy.
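
As a rough illustration of the idea, the sketch below applies magnitude pruning, one common pruning technique, to a flat list of weights: it keeps the 10% with the largest absolute value and zeroes the rest. The source does not say which pruning method it has in mind, so this choice is an assumption. The helper names `magnitude_prune` and `nonzero_count` are hypothetical, and the weights are random stand-ins rather than a real model.

```python
import random


def magnitude_prune(weights, keep_fraction=0.1):
    """Zero out all but the largest-magnitude weights (hypothetical helper)."""
    if not 0.0 < keep_fraction <= 1.0:
        raise ValueError("keep_fraction must be in (0, 1]")
    n_keep = max(1, round(len(weights) * keep_fraction))
    # Indices of the n_keep weights with the largest absolute value.
    ranked = sorted(range(len(weights)), key=lambda i: abs(weights[i]), reverse=True)
    keep = set(ranked[:n_keep])
    return [w if i in keep else 0.0 for i, w in enumerate(weights)]


def nonzero_count(weights):
    """Count the weights that would still need to be stored or multiplied."""
    return sum(1 for w in weights if w != 0.0)


# Illustrative stand-in for a layer's weights (assumption: Gaussian values).
rng = random.Random(0)
dense = [rng.gauss(0.0, 1.0) for _ in range(1000)]
sparse = magnitude_prune(dense, keep_fraction=0.1)

print("nonzero before:", nonzero_count(dense))
print("nonzero after: ", nonzero_count(sparse))
```

Keeping a tenth of the weights cuts the nonzero parameter count by 10x, which is where the cost figure comes from. Whether that becomes a real 10x saving at inference time depends on hardware and kernels that can exploit sparsity, and whether accuracy holds up usually depends on fine-tuning after pruning. This sketch models neither.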

Over two-thirds of reasoning models' performance gains came from massively increasing their "thinking time" (inference scaling). This was a one-time jump from a zero baseline. Further gains are prohibitively expensive due to compute limitations, meaning this is not a repeatable source of progress.

Today's AI boom is fueled by scaling computation, which is a known engineering challenge. The alternative, embedding nuanced, human-like inductive biases, is far harder because it requires a deep understanding of the problem space. This difficulty gap explains why massive models dominate AI development over more targeted, efficient ones: scaling is simply the more straightforward path.

The history of AI, such as the 2012 AlexNet breakthrough, demonstrates that scaling compute and data on simpler, older algorithms often yields greater advances than designing intricate new ones. This "bitter lesson" suggests prioritizing scalability over algorithmic complexity for future progress.

The "bitter lesson" in AI research posits that methods leveraging massive computation scale better and ultimately win out over approaches that rely on human-designed domain knowledge or clever shortcuts. In short, it favors scale over ingenuity.

The era of guaranteed progress by simply scaling up compute and data for pre-training is ending. With massive compute now available, the bottleneck is no longer resources but fundamental ideas. The AI field is re-entering a period where novel research, not just scaling existing recipes, will drive the next breakthroughs.

While costly, advanced AI models provide a return on investment by enabling teams to tackle previously unsolvable or prohibitively complex problems. The value isn't just in accelerating existing workflows but in fundamentally increasing the ambition and scope of what's technically achievable.

![Dylan Patel - Inside the Trillion-Dollar AI Buildout - [Invest Like the Best, EP.442] thumbnail](https://megaphone.imgix.net/podcasts/799253cc-9de9-11f0-8661-ab7b8e3cb4c1/image/d41d3a6f422989dc957ef10da7ad4551.jpg?ixlib=rails-4.3.1&max-w=3000&max-h=3000&fit=crop&auto=format,compress)