Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

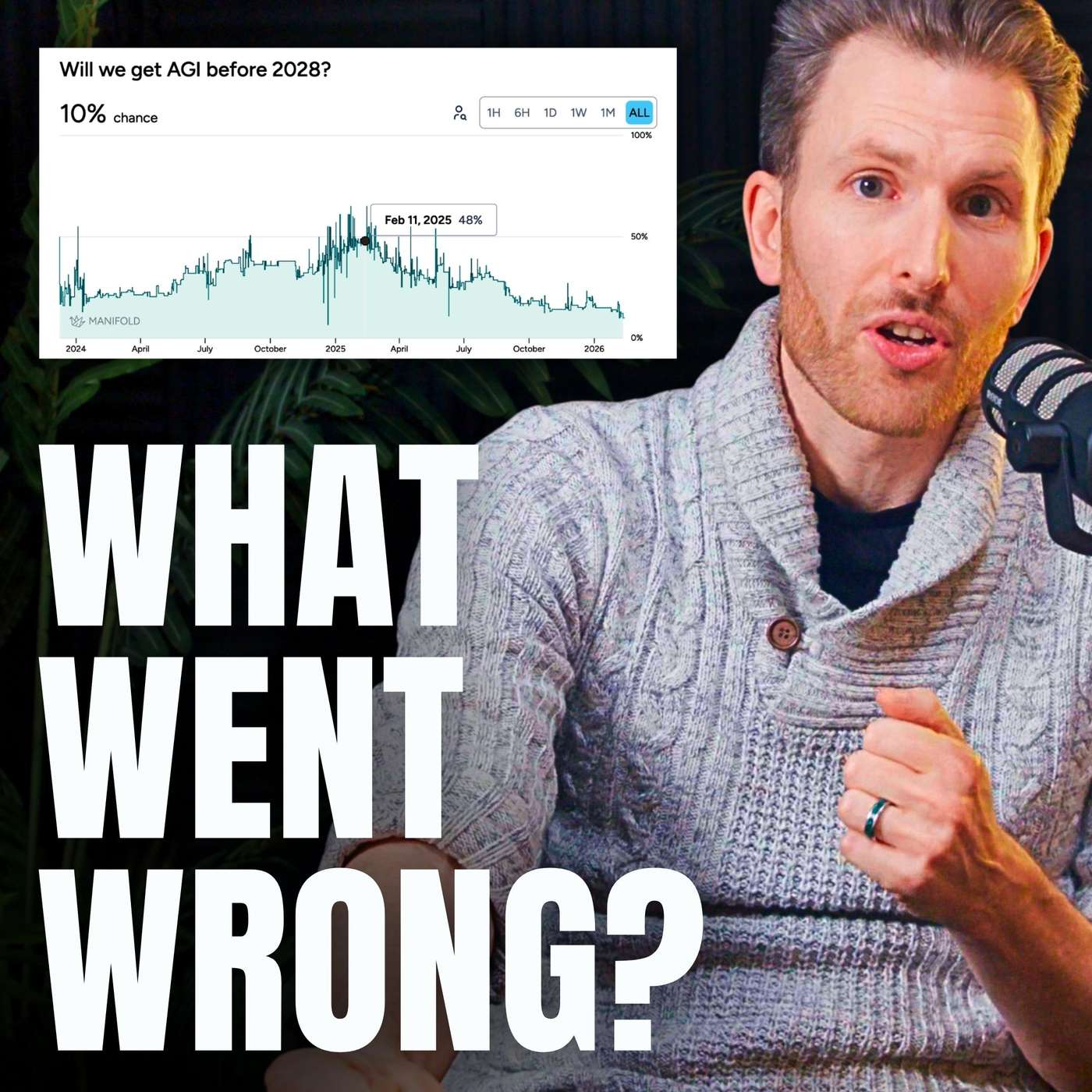

A genuine AI capabilities explosion won't happen just because models can write novel research papers. The bottleneck is the full automation of the R&D loop, which includes a long tail of "messy" real-world tasks like fixing failing GPUs in a data center or managing facility cooling. This physical and logistical grounding is often overlooked.

Related Insights

Even the most advanced AI model can't accelerate science without practical, real-world data. The current bottleneck is often logistical—knowing reagent lead times, lab inventory, and costs. Superior model intelligence is less critical than having access to this operational context.

Turing's CEO argues that frontier models are already capable of much more than enterprises are demanding. The bottleneck isn't the AI's ability, but the "first mile and last mile schlep" of integration. Massive productivity gains are possible even without further model improvements.

A "software-only singularity," where AI recursively improves itself, is unlikely. Progress is fundamentally tied to large-scale, costly physical experiments (i.e., compute). That labs spend far more on experimental compute than on researcher salaries indicates that physical experimentation, not just algorithms, remains the primary driver of breakthroughs.

The focus in AI has evolved from rapid software capability gains to the physical constraints of its adoption. The demand for compute power is expected to significantly outstrip supply, making infrastructure—not algorithms—the defining bottleneck for future growth.

The most critical feedback loop for an intelligence explosion isn't just AI automating AI R&D (software). It's AI automating the entire physical supply chain required to produce more of itself—from raw material extraction to building the factories that fabricate the chips it runs on. This 'full stack' automation is a key milestone for exponential growth.

While the world focused on GPU shortages, the real constraint on AI compute is now physical infrastructure. The bottleneck has moved to accessing power, building data centers, finding specialized labor such as electricians, and acquiring basic materials such as structural steel. Merely acquiring chips is no longer enough to scale.

While data was once a major constraint for training AI, models can now effectively create their own synthetic data. This has shifted the critical choke points in the AI supply chain to physical infrastructure like power grids and data center construction, which are now the primary limiters of growth.

Even if AI perfects software engineering, automating AI R&D will be limited by non-coding tasks, because AI companies do far more than software engineering. Furthermore, as the 'low-hanging fruit' disappears, AI assistance might only be enough to maintain the current rate of progress rather than accelerate it.

The ultimate goal for leading labs isn't just creating AGI, but automating the process of AI research itself. By replacing human researchers with millions of "AI researchers," they aim to trigger a "fast takeoff" or recursive self-improvement. This makes automating high-level programming a key strategic milestone.

The primary constraint on the AI boom is not chips or capital, but aging physical infrastructure. In Santa Clara, NVIDIA's hometown, fully constructed data centers have sat empty for years simply because the local utility cannot supply enough electricity. This highlights how the pace of AI development is ultimately tethered to the physical world's limitations.