While harms like fraud are clearly bad, a vast middle ground of "gray fakes" exists. Applications like synthetically resurrecting the deceased or AI satire unsettle us without a clear ethical consensus. This ambiguity creates complex challenges for platforms and policymakers.

Related Insights

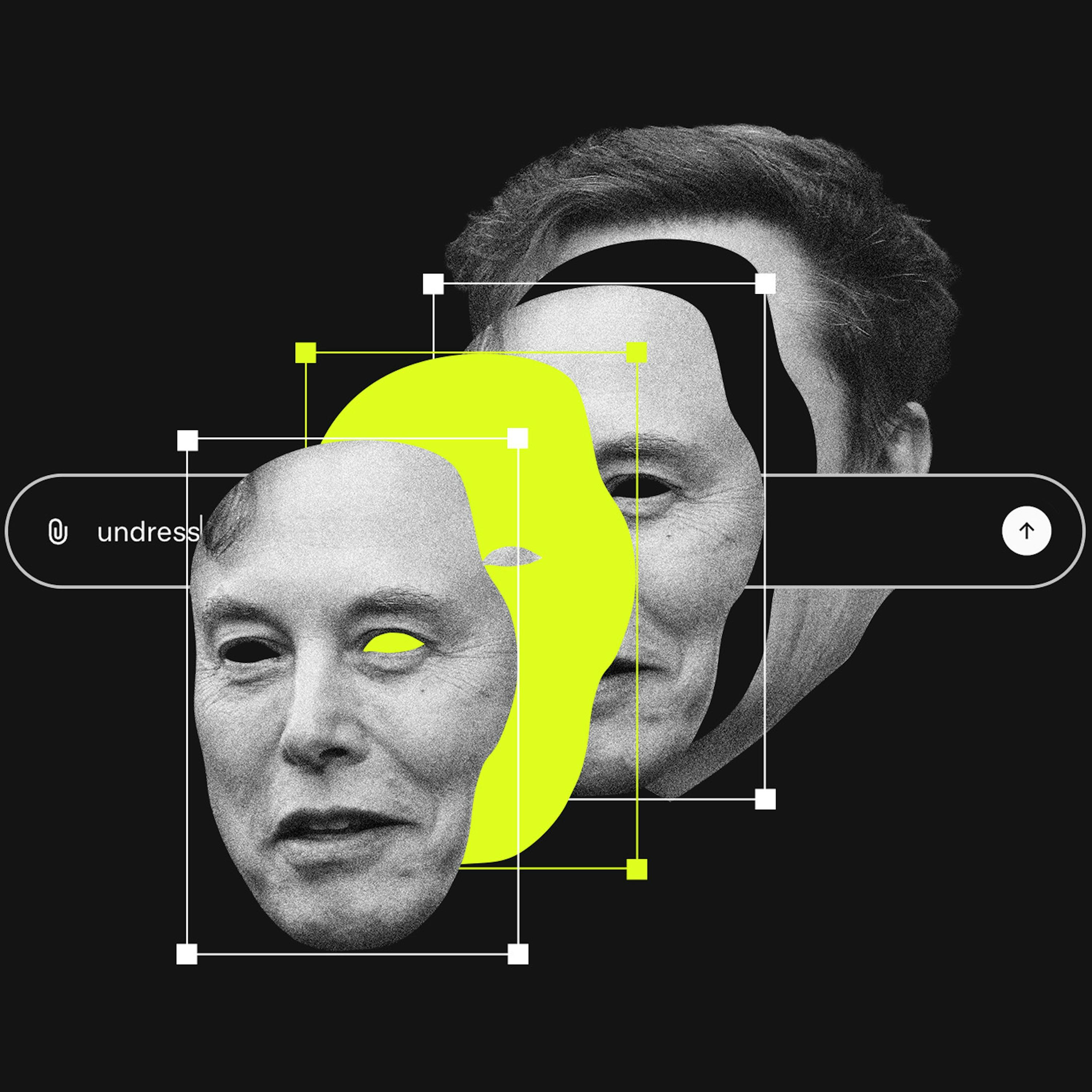

The Grok controversy is reigniting the debate over moderating legal but harmful content, a central conflict in the UK's Online Safety Act. AI's ability to mass-produce harassing images that fall short of illegality pushes this unresolved regulatory question to the forefront.

The rush to label Grok's output as illegal CSAM misses a more pervasive issue: using AI to generate demeaning, but not necessarily illegal, images as a tool for harassment. This dynamic of "lawful but awful" content weaponized at scale currently lacks a clear legal framework.

The biggest political danger of deepfakes isn't that people will believe fake content. It's the "liar's dividend": politicians can now dismiss genuine, scandalous video evidence as a deepfake. This erodes video as a tool for accountability, a more subtle but profound threat to political discourse.

The modern media ecosystem is defined by the decomposition of truth. From AI-generated fake images to conspiracy theories on X that blend real and fake documents, people are growing accustomed to an environment where discerning absolute reality is difficult, and they are willing to live with that ambiguity.

Politician Alex Bores argues that expecting humans to spot increasingly sophisticated deepfakes is a losing battle. The real solution is a universal metadata standard (like C2PA) that cryptographically proves whether content is real or AI-generated, making unverified content inherently suspect, much like an insecure HTTP website today.

The rapid advancement of AI-generated video will soon make it impossible to distinguish real footage from deepfakes. This will cause a societal shift, eroding the concept of "video proof," which has been a cornerstone of trust for the past century.

Beyond generating fake content, AI exacerbates public skepticism towards all information, even from established sources. This erodes the common factual basis on which society operates, making it harder for democracies to function as people can't even agree on the basic building blocks of information.

As AI makes creating complex visuals trivial, audiences will become skeptical of content like surrealist photos or polished B-roll. They will increasingly assume it is AI-generated rather than the result of human skill, leading to lower trust and engagement.

Current responses to deepfakes are insufficient. Detection is an endless cat-and-mouse game with high error rates. Watermarking can be compromised. Provenance systems struggle with explainability for complex media edits. None provide the categorical confidence needed to solve the crisis of digital trust.

A significant societal risk is the public's inability to distinguish sophisticated AI-generated videos from reality. This creates fertile ground for political deepfakes to influence elections, a problem made worse by social media platforms that don't enforce clear "Made with AI" labeling.