The biggest political danger of deepfakes isn't that people will believe fake content. It's the "liar's dividend": politicians can now dismiss genuine, scandalous video evidence as a deepfake. This erodes video as a tool for accountability, a more subtle but profound threat to political discourse.

Related Insights

When officials deny events clearly captured on video, it breaks public trust more severely than standard political spin. Directly contradicting visible reality provokes intense anger among citizens, because the denial feels like a personal, deliberate attempt at gaslighting.

The ability to label a deepfake as 'fake' doesn't solve the problem. The greater danger is 'frequency bias,' where repeated exposure to a false message forms a strong mental association, making the idea stick even when it's consciously rejected as untrue.

Adam Mosseri’s public statement that we can no longer assume photos or videos are real marks a pivotal shift. He suggests moving from a default of trust to a default of skepticism, effectively admitting platforms have lost the war on deepfakes and placing the burden of verification on users.

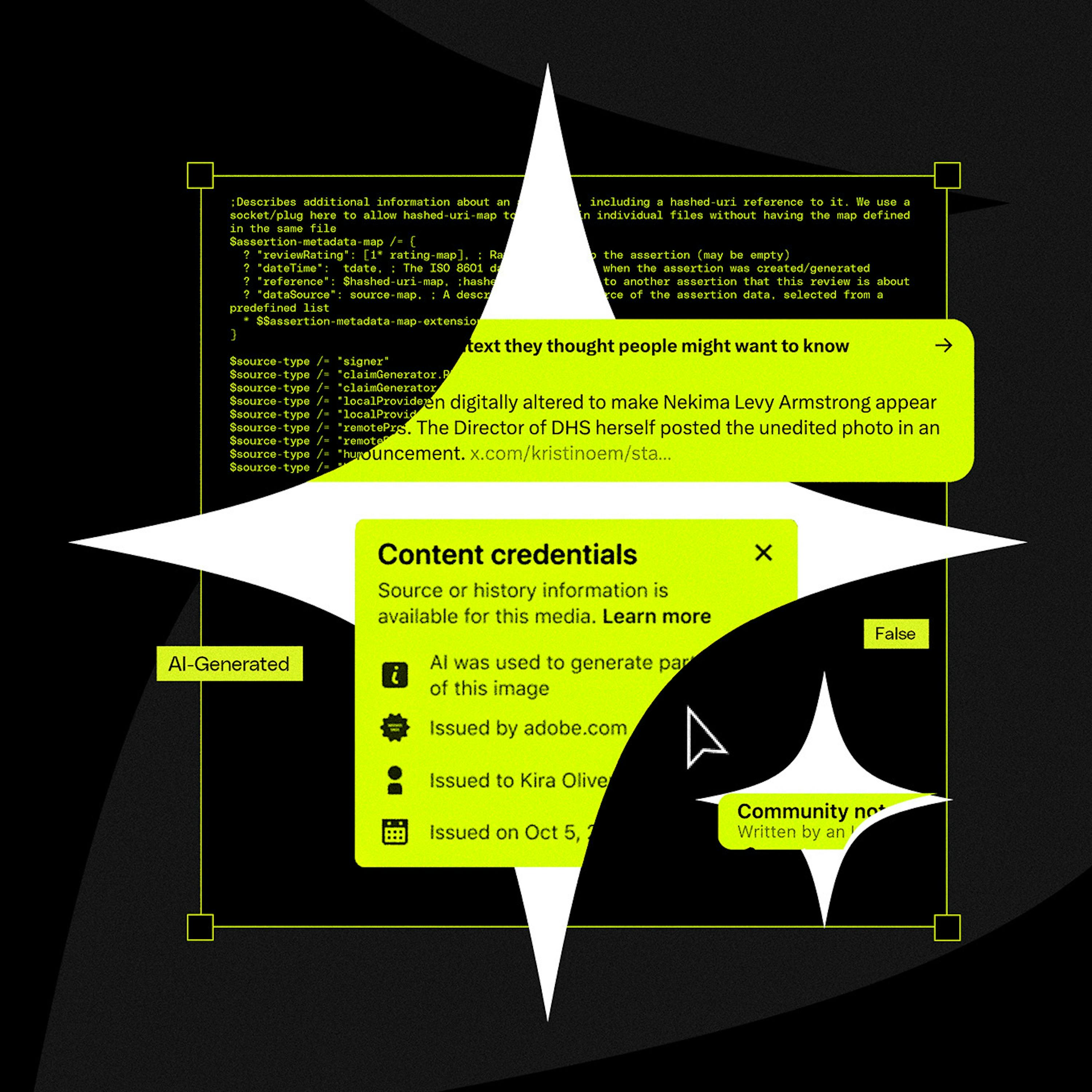

The modern media ecosystem is defined by the decomposition of truth. From AI-generated fake images to conspiracy theories blending real and fake documents on X, people are becoming accustomed to an environment where discerning absolute reality is difficult and are willing to live with that ambiguity.

The rise of convincing AI-generated deepfakes will soon make video and audio evidence unreliable. The proposed solution is the blockchain, a decentralized, tamper-resistant ledger. Content would be "minted" on-chain to provide a verifiable, timestamped record of authenticity that no single entity can control or manipulate.
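To make the "minting" idea concrete, here is a minimal Python sketch of a hash-chained record of content fingerprints. It is a single-process toy, not a decentralized blockchain, and every name in it (`ToyLedger`, `mint`, `find`, `is_intact`) is hypothetical and chosen only for illustration. It assumes that "minting" means recording a SHA-256 digest of the media bytes alongside a timestamp and the hash of the previous record.

```python
import hashlib
import json
import time


def fingerprint(content: bytes) -> str:
    """Return the SHA-256 digest of the raw media bytes."""
    return hashlib.sha256(content).hexdigest()


class ToyLedger:
    """Append-only, hash-chained list of records (a single-node stand-in)."""

    GENESIS = "0" * 64  # placeholder previous-hash for the first record

    def __init__(self):
        self.blocks = []

    @staticmethod
    def _block_hash(body: dict) -> str:
        # Hash a canonical JSON encoding so the result is deterministic.
        encoded = json.dumps(body, sort_keys=True).encode()
        return hashlib.sha256(encoded).hexdigest()

    def mint(self, content: bytes, timestamp=None) -> dict:
        """Record the content's fingerprint, linked to the previous record."""
        prev_hash = self.blocks[-1]["hash"] if self.blocks else self.GENESIS
        body = {
            "content_hash": fingerprint(content),
            "timestamp": time.time() if timestamp is None else timestamp,
            "prev_hash": prev_hash,
        }
        block = dict(body, hash=self._block_hash(body))
        self.blocks.append(block)
        return block

    def find(self, content: bytes):
        """Return the record for these exact bytes, or None if never minted."""
        digest = fingerprint(content)
        for block in self.blocks:
            if block["content_hash"] == digest:
                return block
        return None

    def is_intact(self) -> bool:
        """Check that no record was altered and the chain links still match."""
        prev = self.GENESIS
        for block in self.blocks:
            body = {k: block[k] for k in ("content_hash", "timestamp", "prev_hash")}
            if block["prev_hash"] != prev or block["hash"] != self._block_hash(body):
                return False
            prev = block["hash"]
        return True


ledger = ToyLedger()
ledger.mint(b"original footage", timestamp=1700000000)
print(ledger.find(b"original footage") is not None)  # the minted bytes are found
print(ledger.find(b"edited footage") is None)        # altered bytes have no record
```

A record like this only shows that specific bytes existed at the recorded time and have not changed since. It says nothing about whether the footage was authentic when it was minted.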

Counterintuitively, as AI makes it easy to fake any video or audio, the power of "gotcha" recordings will diminish. The plausible deniability of "it could be a deepfake" may free people from the social surveillance state created by smartphone cameras.

The rapid advancement of AI-generated video will soon make it impossible to distinguish real footage from deepfakes. This will cause a societal shift, eroding the concept of 'video proof', which has been a cornerstone of trust for the past century.

Beyond generating fake content, AI exacerbates public skepticism towards all information, even from established sources. This erodes the common factual basis on which society operates, making it harder for democracies to function as people can't even agree on the basic building blocks of information.

As AI makes creating complex visuals trivial, audiences will become skeptical of content like surrealist photos or polished B-roll. They will increasingly assume it is AI-generated rather than the result of human skill, leading to lower trust and engagement.

A significant societal risk is the public's inability to distinguish sophisticated AI-generated videos from reality. This creates fertile ground for political deepfakes to influence elections, a problem made worse by social media platforms that don't enforce clear "Made with AI" labeling.