The rapid expansion promised by AI firms faces real-world bottlenecks: shortages of key commodities like copper, insufficient power grid capacity that will take years of new plant construction to fix, and a lack of skilled construction labor. Together, these make the promised timelines highly unrealistic.

Related Insights

The primary bottleneck for scaling AI over the next decade may be the difficulty of bringing gigawatt-scale power online to support data centers. Smart money is already focused on this challenge, which is more complex than silicon supply.

The focus in AI has evolved from rapid software capability gains to the physical constraints of its adoption. The demand for compute power is expected to significantly outstrip supply, making infrastructure—not algorithms—the defining bottleneck for future growth.

Despite a massive contract with OpenAI, Oracle is pushing back data center completion dates due to labor and material shortages. This shows that the AI infrastructure boom is constrained by physical-world limitations, making hyper-aggressive timelines from tech giants challenging to execute in practice.

Despite staggering announcements for new AI data centers, a primary limiting factor will be the availability of electrical power. The current growth curve of the power infrastructure cannot support all the announced plans, creating a physical bottleneck that will likely lead to project failures and investment "carnage."

The true constraint on scaling AI is not silicon or power, but "time to compute"—the physical reality of construction. Sourcing thousands of tradespeople for remote sites and managing complex supply chains for building materials is the primary hurdle limiting the speed of AI infrastructure growth.

While the world focused on GPU shortages, the real constraint on AI compute is now physical infrastructure. The bottleneck has moved to accessing power, building data centers, finding specialized labor such as electricians, and acquiring basic materials such as structural steel. Merely acquiring chips is no longer enough to scale.

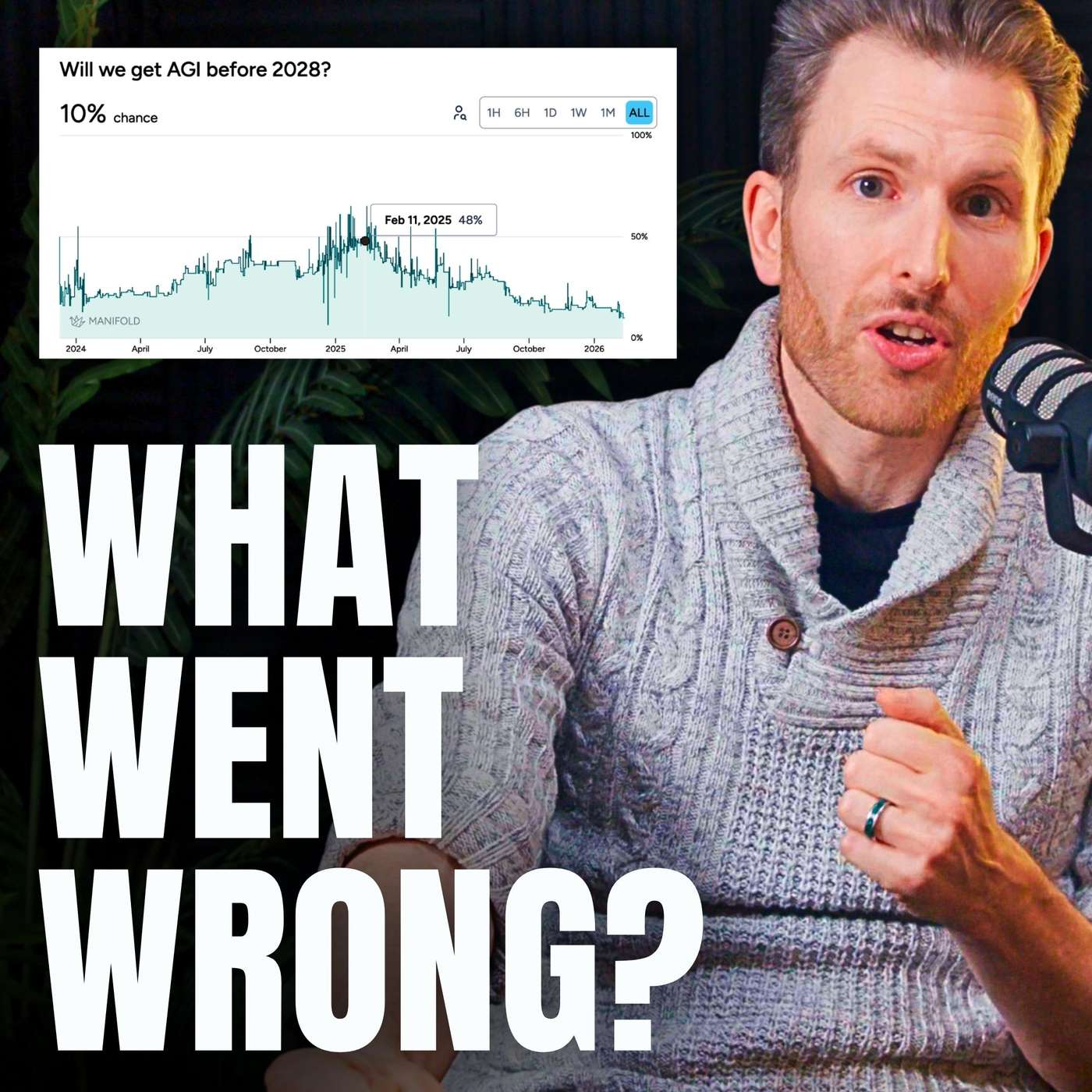

The AI industry's exponentially growing consumption of compute, electricity, and talent is unsustainable. By 2032, it will have absorbed most available slack from other industries. Further progress will require potentially unfundable trillion-dollar training runs, creating a critical period for AGI development.

Most of the world's energy capacity build-out over the next decade was planned using old models, completely omitting the exponential power demands of AI. This creates a looming, unpriced-in bottleneck for AI infrastructure development that will require significant new investment and planning.

According to Arista's CEO, the primary constraint on building AI infrastructure is the massive power consumption of GPUs and networks. Finding data center locations with gigawatts of available power can take 3-5 years, making energy access, not technology, the main limiting factor for industry growth.

The primary constraint on the AI boom is not chips or capital, but aging physical infrastructure. In Santa Clara, NVIDIA's hometown, fully constructed data centers are sitting empty for years simply because the local utility cannot supply enough electricity. This highlights how the pace of AI development is ultimately tethered to the physical world's limitations.