Russia's information warfare is less about creating new narratives and more about identifying and exacerbating existing societal fissures. By amplifying local opposition to a new military base, for instance, it reframes a legitimate debate as a conflict between citizens and a corrupt state, thereby eroding trust and national unity from within.

Related Insights

Russia's propaganda strategy has evolved from disseminating alternative narratives to promoting 'vibes-based' content. Viral media like the 'Sigma Boy' song are used to foster specific feelings and ideas, such as patriarchal dominance, and such content is difficult to distinguish from organic trends.

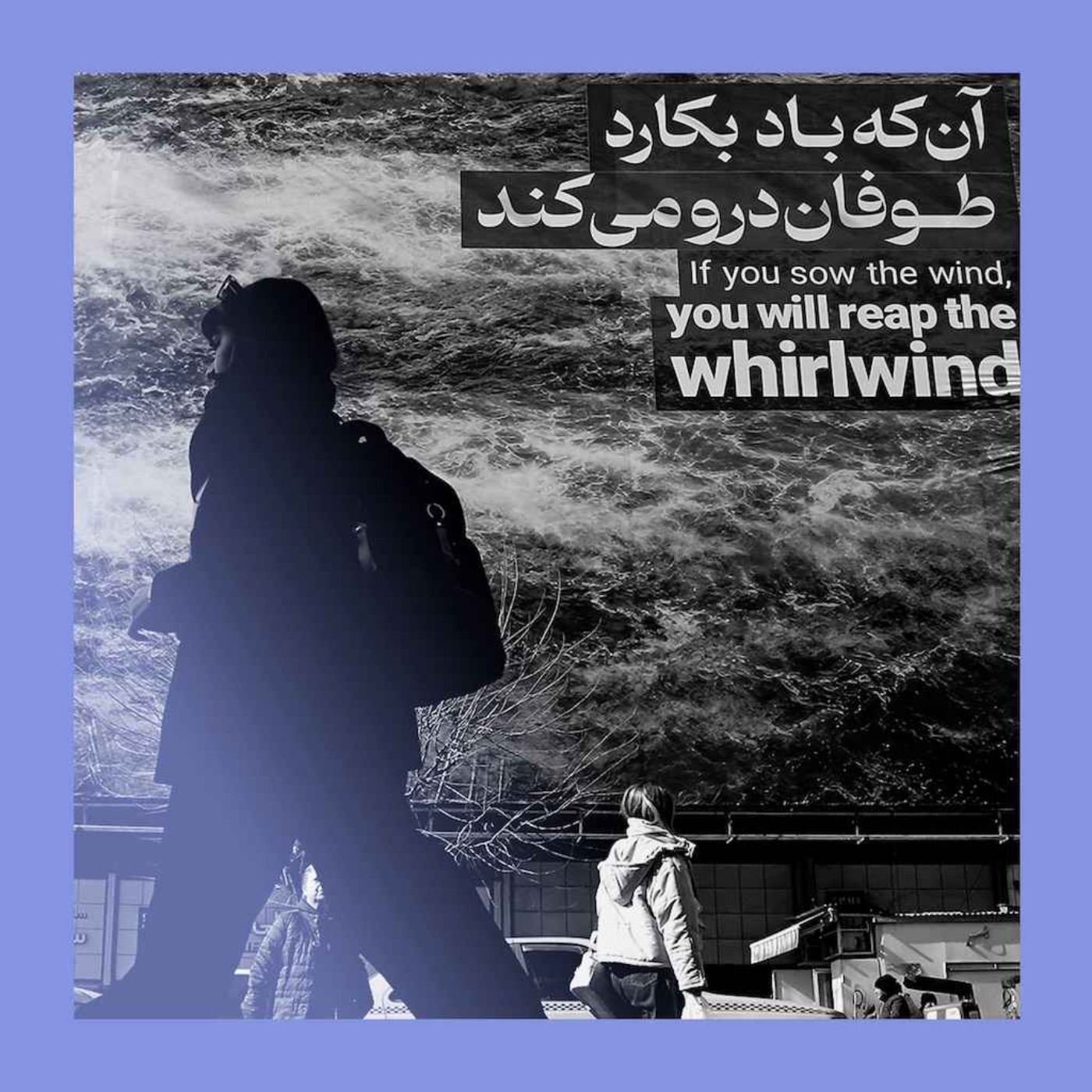

The modern information landscape is so saturated with noise, deepfakes, and propaganda that discerning the truth requires an enormous investment of time and energy. This high "cost" leads not to believing falsehoods, but to a general disbelief in everything and an inability to form trusted opinions.

Unlike historical propaganda which used centralized broadcasts, today's narrative control is decentralized and subtle. It operates through billions of micro-decisions and algorithmic nudges that shape individual perceptions daily, achieving macro-level control without any overt displays of power.

Social media's algorithms are a key threat to political movements. They are designed to find the 10% of issues on which allies disagree and amplify that discord. This manufactured infighting turns potential collaborators into enemies, fracturing coalitions and undermining collective action.

A content moderation failure revealed a sophisticated misuse tactic: campaigns used factually correct but emotionally charged information (e.g., school shooting statistics) not to misinform, but to intentionally polarize audiences and incite conflict. This challenges traditional definitions of harmful content.

Many of today's political and social conflicts stem from long-term KGB "psyops" designed to divide the West. These playbooks—which involve framing influential figures, backing separatist movements, and creating internal division—are still actively used by Russia and have been copied by other nations.

Effective political propaganda isn't about outright lies; it's about controlling the frame of reference. By providing a simple, powerful lens through which to view a complex situation, leaders can dictate the terms of the debate and trap audiences within their desired narrative, limiting alternative interpretations.

Problems like astroturfing (faking grassroots movements) and disinformation existed long before modern AI. AI acts as a powerful amplifier, making these tactics cheaper and more scalable, but it doesn't invent them. The solutions are often political and societal, not purely technological fixes.

The KGB's 20-year campaign to frame Pope Pius XII as a Nazi sympathizer succeeded only in the 1960s. It worked because it targeted a generation too young to have lived through WWII and witnessed the Pope's anti-Hitler actions firsthand, creating a "blank canvas" for the false narrative to take hold.

The era of limited information sources allowed for a controlled, shared narrative. The current media landscape, with its volume and velocity of information, fractures consensus and erodes trust, making it nearly impossible for society to move forward in lockstep.