Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

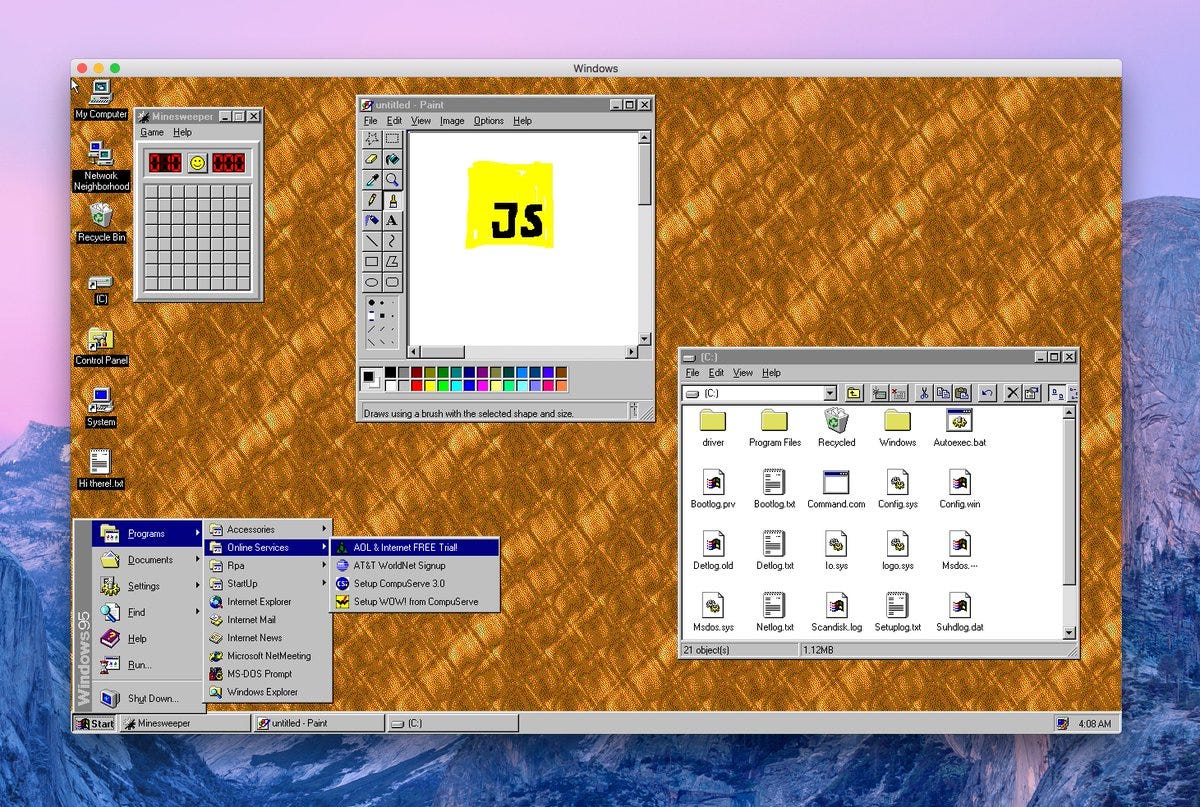

An experiment that gave coding agents a chat channel to coordinate their work failed to improve results. The agents were faster and more effective when they simply observed changes directly in the shared codebase. The chat channel added communication overhead and proved less efficient than direct awareness of the environment.

Related Insights

Multi-agent systems work well for easily parallelizable, "read-only" tasks like research, where sub-agents gather context independently. They are much trickier for "write" tasks like coding, where conflicting decisions between agents create integration problems.

A developer found that when his AI agent interacts directly with coding environments, it produces features that deliver more value and contain fewer bugs than when he manually prompts an AI model himself. This suggests that direct "computer-to-computer" interaction is more effective for development tasks.

Contrary to the expectation that more agents increase productivity, a Stanford study found that two AI agents collaborating on a coding task performed 50% worse than a single agent. This "curse of coordination" intensified as more agents were added, highlighting the significant overhead in multi-agent systems.

Researchers found that even extensive prompt optimization could not close the "synergy gap" in multi-agent teams. The real leverage lies in designing the communication architecture, that is, determining which agent talks to which and in what sequence, to improve collaborative performance.
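To make "which agent talks to which and in what sequence" concrete, here is a minimal sketch that treats a communication architecture as a directed graph and derives a speaking order from it. The agent names, the `TOPOLOGY` dictionary, and both helper functions are hypothetical illustrations, not anything described by the researchers.

```python
from collections import deque

# Hypothetical topology: each agent maps to the agents it may send messages to.
TOPOLOGY = {
    "planner": ["coder", "tester"],
    "coder": ["tester"],
    "tester": ["reviewer"],
    "reviewer": [],
}


def can_send(topology, sender, receiver):
    """Return True if the architecture allows sender to message receiver."""
    return receiver in topology.get(sender, [])


def speaking_order(topology):
    """Order agents so every sender speaks before its receivers (Kahn's algorithm)."""
    # Count how many agents are allowed to send to each agent.
    indegree = {agent: 0 for agent in topology}
    for receivers in topology.values():
        for receiver in receivers:
            indegree[receiver] += 1

    # Agents that receive from nobody can speak first.
    ready = deque(agent for agent, count in indegree.items() if count == 0)
    order = []
    while ready:
        agent = ready.popleft()
        order.append(agent)
        for receiver in topology[agent]:
            indegree[receiver] -= 1
            if indegree[receiver] == 0:
                ready.append(receiver)

    if len(order) != len(topology):
        raise ValueError("topology contains a cycle; no linear speaking order exists")
    return order


if __name__ == "__main__":
    print(speaking_order(TOPOLOGY))
```

Under this framing, changing the architecture means editing the graph rather than rewriting prompts, which is one way to read the claim that structure matters more than prompt wording.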

While messaging platforms like Slack can serve as an interface for human-to-agent communication, they are fundamentally ill-suited for agent-to-agent collaboration. These tools are designed for human interaction patterns, creating friction when orchestrating multiple autonomous agents and indicating a need for new, agent-native communication protocols.

Building a bespoke communication layer for multiple AI agents is a complex "scaffolding" problem. A simpler, more direct solution is to treat agents as digital coworkers, assigning them accounts on existing platforms like Slack or Google Docs, enabling them to interact using established human workflows.

The study's finding that adding AI agents diminishes productivity offers a modern validation of Brooks's Law. The coordination overhead among agents completely negated any potential speed benefit from parallelizing the work, suggesting that simply adding more "developers" is counterproductive.
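A common back-of-the-envelope illustration of Brooks's Law counts the pairwise communication channels among $n$ workers. It is offered here as an assumption about how the overhead might scale, not as something the study is reported to have measured:

$$C(n) = \binom{n}{2} = \frac{n(n-1)}{2}$$

For example, $C(2) = 1$, $C(4) = 6$, and $C(8) = 28$: the number of potential channels grows quadratically while the number of agents grows only linearly.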

While chat works for human-AI interaction, the infinite canvas is a superior paradigm for multi-agent and human-AI collaboration. It allows for simultaneous, non-distracting parallel work, asynchronous handoffs, and persistent spatial context—all of which are difficult to achieve in a linear, turn-based chat interface.

In the Stanford study, AI agents spent up to 20% of their time communicating, yet this yielded no statistically significant improvement in success rates compared to having no communication at all. The messages were often vague and ill-timed, jamming channels without improving coordination.

In most cases, having multiple AI agents collaborate leads to a result that is no better, and often worse, than what the single most competent agent could achieve alone. The only observed exception is when success depends on generating a wide variety of ideas, as agents are good at sharing and adopting different approaches.