Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

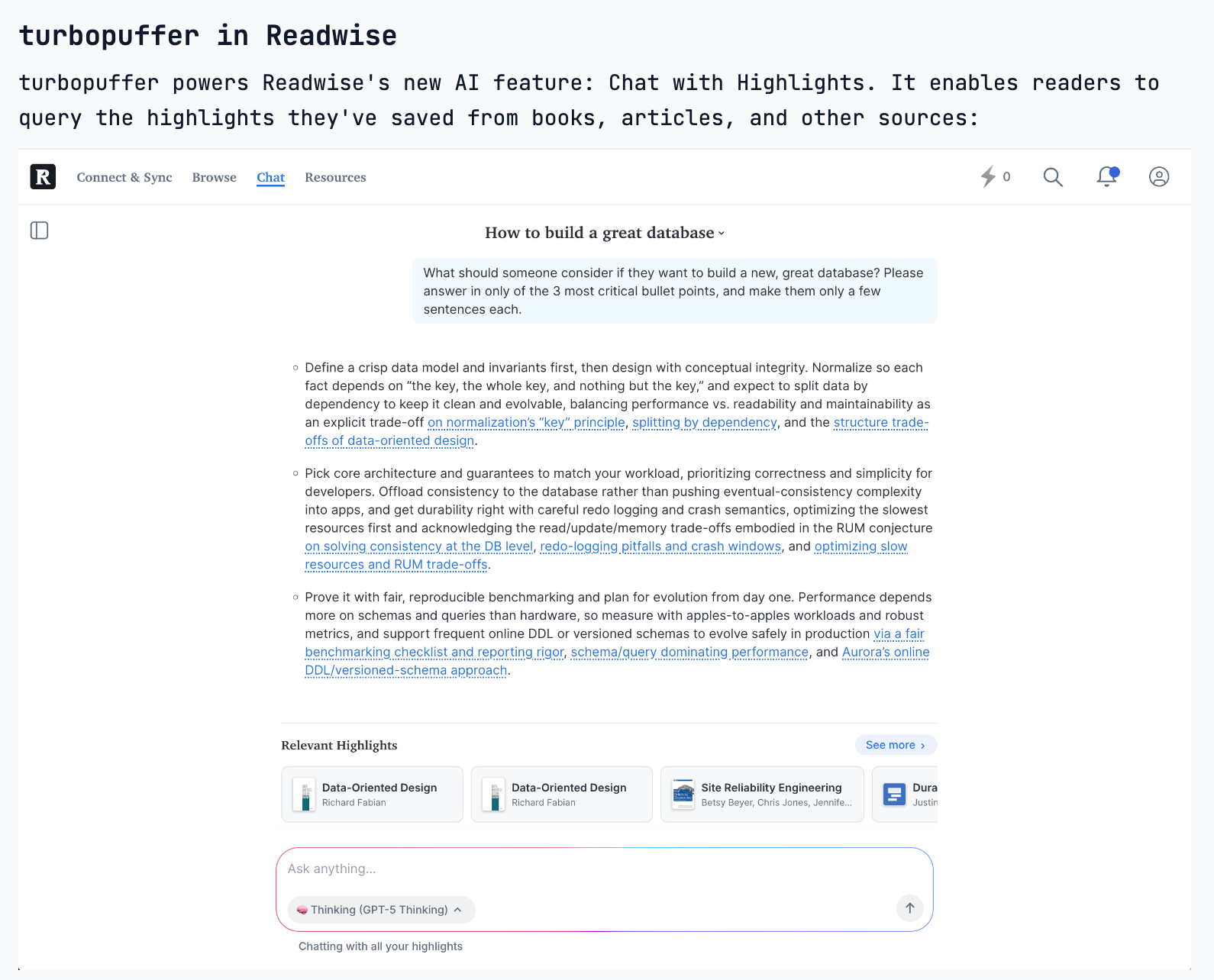

The idea for Turbopuffer originated when its founder calculated that adding an embedding-based feature to Readwise would cost $30k/month, a 6x increase in their total infra bill. This single data point revealed a clear market need for a drastically cheaper vector search solution.

Related Insights

TurboPuffer achieved its massive cost savings by building on slow S3 storage. While this increased write latency by 1000x—unacceptable for transactional systems—it was a perfectly acceptable trade-off for search and AI workloads, which prioritize fast reads over fast writes.

AI's hunger for context is making search a critical but expensive component. As illustrated by Turbo Puffer's origin, a single recommendation feature using vector embeddings can cost tens of thousands per month, forcing companies to find cheaper solutions to make AI features economically viable at scale.

Unlike traditional SaaS, achieving product-market fit in AI is not enough for survival. The high and variable costs of model inference mean that as usage grows, companies can scale directly into unprofitability. This makes developing cost-efficient infrastructure a critical moat and survival strategy, not just an optimization.

An engineer's struggle to run OpenClaw on cheap cloud VMs due to high RAM needs led him to build a solution for friends. This quickly validated the need for an affordable, managed hosting service, which he turned into a startup (Agent37.com) almost immediately.

To serve Notion on AWS while its core infra was on GCP, Turbopuffer bought dark fiber to reduce cross-cloud latency. This extreme measure was preferable to compromising their core architectural principle of avoiding a stateful consensus layer, showcasing deep product conviction.

While the long-term vision for a major database is to support every query plan, the only sustainable advantage for a startup is focus. The founder explicitly states their biggest risk is overeagerness and that they will regret trying to do too much, not too little.

OpenPipe's initial value was clear: GPT-4 was powerful but prohibitively expensive for production. They offered a managed flow to distill expensive workflows into cheaper, smaller models, resonating with early customers facing massive OpenAI bills and helping them reach $1M ARR in eight months.

The founder used a "Napkin Math" approach, analyzing fundamental computing metrics (disk speed, memory cost). This revealed a viable architecture using cheap S3 storage that incumbents overlooked, creating a 100x cost advantage for his database.

According to Ring's founder, the technology for ambitious AI features like "Dog Search Party" already exists. The real bottleneck is the cost of computation. Products that are technically possible today are often not launched because the processing expense makes them commercially unviable.

Early on, the founder ran Turbopuffer's cloud infrastructure on his personal credit card. When a large customer's usage bill skyrocketed, the immense financial pressure forced the team to optimize relentlessly, leading them to become profitable out of necessity rather than strategy.