Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

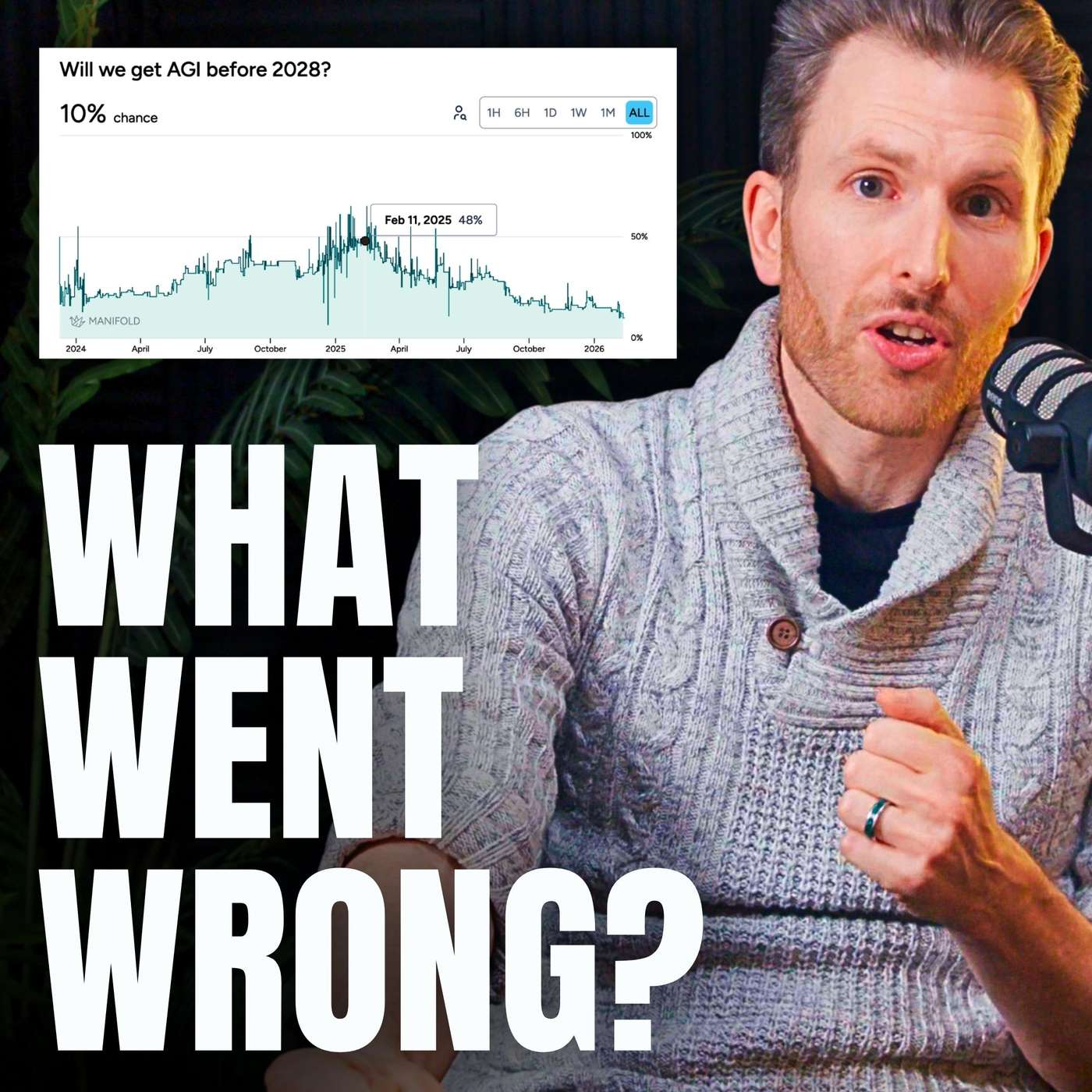

As AI achieves impressive milestones, such as helping to create a cancer vaccine, the public conversation immediately discounts the achievement. The goalposts shift from "AI helped solve a problem" to demanding a fully autonomous, one-shot solution. This pattern of escalating expectations obscures the real, incremental progress being made.

Related Insights

As AI models reach previously defined benchmarks for intelligence (e.g., reasoning), their failure to generate transformative economic value reveals that those benchmarks were insufficient. This justifies 'shifting the goalposts' for AGI: it is a rational response to realizing that our understanding of intelligence was too narrow. Progress in impressiveness doesn't equate to progress in usefulness.

When AI models achieve superhuman performance on specific benchmarks such as coding challenges, that performance doesn't translate into solving real-world problems. The reason is that we implicitly optimize for the benchmark itself, producing "peaky" performance rather than broad, generalizable intelligence.

Users frequently write off an AI's ability to perform a task after a single failure. However, with models improving dramatically every few months, what was impossible yesterday may be trivial today. This "capability blindness" prevents users from unlocking new value.

The pursuit of AGI may mirror the history of the Turing Test. Once ChatGPT clearly passed the test, the milestone was dismissed as unimportant. Similarly, as AI achieves what we now call AGI, society will likely move the goalposts and decide our original definition was never the true measure of intelligence.

A growing gap exists between AI's performance in demos and its actual impact on productivity. As podcaster Dwarkesh Patel noted, AI models improve at the rapid rate that short-term optimists predict but become useful only at the slower rate that long-term skeptics predict, which explains the widespread disillusionment.

AI models will produce a few stunning, one-off results in fields like materials science. These isolated successes will trigger an overstated hype cycle proclaiming 'science is solved,' masking the longer, more understated trend of AI's true, profound, and incremental impact on scientific discovery.

A paradox of rapid AI progress is the widening "expectation gap." As users become accustomed to AI's power, their expectations for its capabilities grow even faster than the technology itself. This leads to a persistent feeling of frustration, even though the tools are objectively better than they were a year ago.

Criticizing AI developers for being a few months off on their predictions is a distraction from the underlying trend of exponential growth. Much like criticizing Elon Musk's Mars timeline while ignoring his historic rocket launches, it reflects a failure to grasp the scale and direction of a technological shift that is already happening.

The media portrays AI development as volatile, with huge breakthroughs and sudden plateaus. The reality inside labs like OpenAI is a steady, continuous process of experimentation, stacking small wins, and consistent scaling. The internal experience is one of "chugging along."

Sequoia highlights the "AI effect": once an AI capability becomes mainstream, we stop calling it AI and give it a specific name, thereby moving the goalposts for "true" AI. This historical pattern of downplaying achievements is a key reason they are explicitly declaring the arrival of AGI.

![[State of RL/Reasoning] IMO/IOI Gold, OpenAI o3/GPT-5, and Cursor Composer — Ashvin Nair, Cursor thumbnail](https://assets.flightcast.com/V2Uploads/nvaja2542wefzb8rjg5f519m/01K4D8FB4MNA071BM5ZDSMH34N/square.jpg)