Simple concurrency helpers and custom promise chains tend to fail in production because nothing enforces how work is limited, retried, or stopped. Robust systems need a "runtime contract" that enforces strict rules: concurrency limits, retry policies with backoff, and automatic cancellation of related tasks. This keeps behavior predictable and prevents cascading failures.
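
A minimal sketch of what such a contract could look like in TypeScript, assuming tasks are written to accept an `AbortSignal`. The names `Task`, `Contract`, `withRetry`, and `runAll` are hypothetical and only illustrate the three rules named above; they are not an API from the episode or from any library.

```typescript
// Hypothetical shapes for illustration only.
type Task<T> = (signal: AbortSignal) => Promise<T>;

interface Contract {
  concurrency: number; // maximum number of tasks in flight
  retries: number;     // extra attempts allowed after the first failure
  baseDelayMs: number; // backoff delay is baseDelayMs * 2 ** attempt
}

const sleep = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Retry one task with exponential backoff, giving up early once cancelled.
async function withRetry<T>(task: Task<T>, c: Contract, signal: AbortSignal): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    if (signal.aborted) throw new Error("cancelled");
    try {
      return await task(signal);
    } catch (err) {
      if (attempt >= c.retries || signal.aborted) throw err;
      await sleep(c.baseDelayMs * 2 ** attempt);
    }
  }
}

// Run all tasks under the contract. A task that exhausts its retries
// aborts the shared signal, so related tasks stop instead of piling up.
async function runAll<T>(tasks: Task<T>[], c: Contract): Promise<T[]> {
  const controller = new AbortController();
  const results: T[] = new Array(tasks.length);
  let next = 0;

  const worker = async (): Promise<void> => {
    while (next < tasks.length && !controller.signal.aborted) {
      const i = next++;
      try {
        results[i] = await withRetry(tasks[i], c, controller.signal);
      } catch (err) {
        controller.abort();
        throw err;
      }
    }
  };

  // A fixed pool of workers is what enforces the concurrency limit.
  const workers = Array.from(
    { length: Math.min(c.concurrency, tasks.length) },
    () => worker(),
  );
  await Promise.all(workers);
  return results;
}
```

Cancellation here is cooperative: a task only stops early if it actually listens to the signal it is given. A production version would likely also add jitter to the backoff and distinguish retryable from fatal errors.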

Related Insights

Kubernetes’s architecture of independent, asynchronous control loops makes it highly resilient; it can always drive toward its desired state regardless of failures. The deliberate trade-off is that this design makes debugging extremely difficult, as the root cause of an issue is often spread across multiple processes without a clear, unified log.

For complex, multi-step AI data pipelines, use a durable execution service like Trigger.dev or Vercel Workflows. This provides automatic retries, failure handling, and monitoring, ensuring your data enrichment processes are robust even when individual services or models fail.

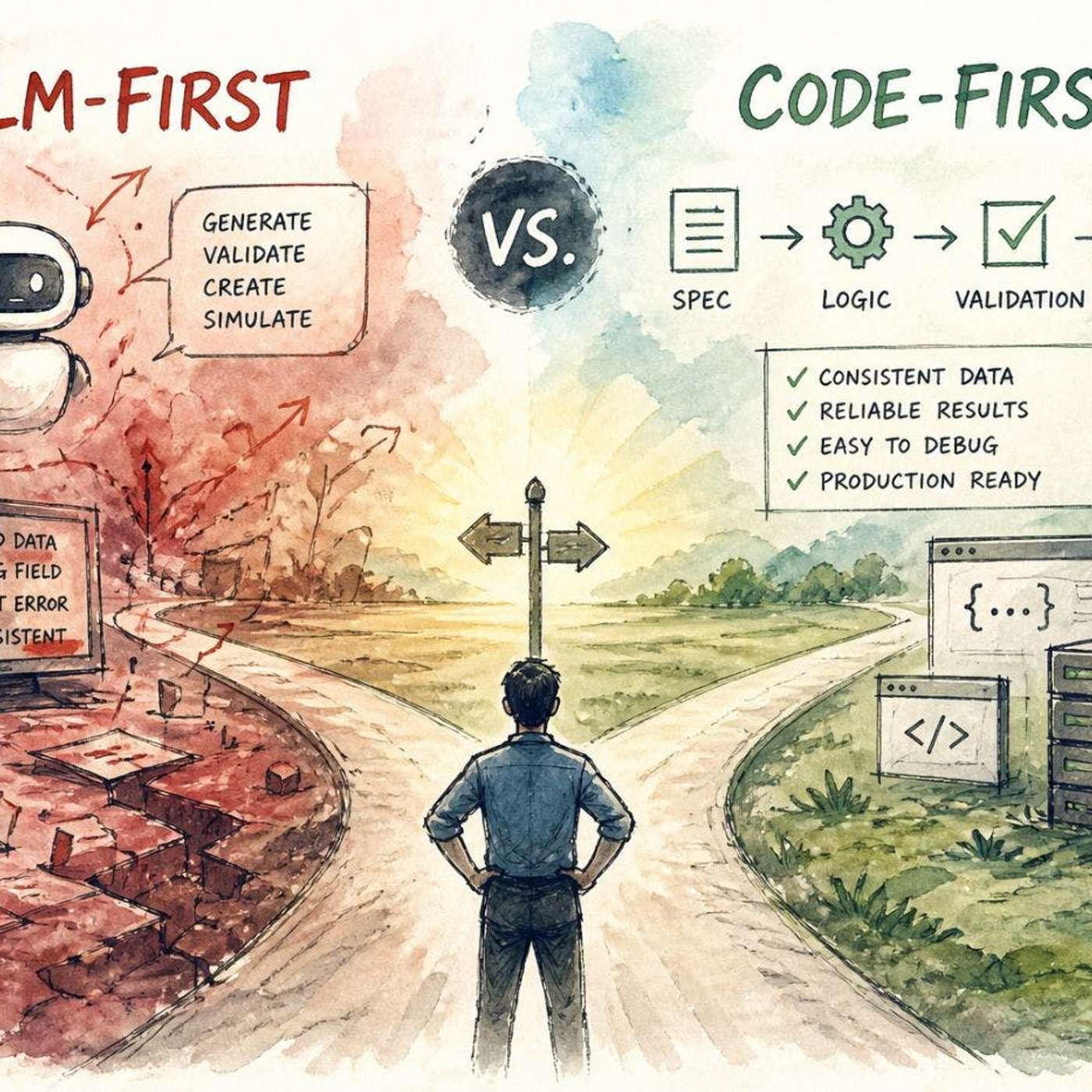

Don't give LLMs full control. Use deterministic code for core logic, validation, and enforcing rules. Delegate only tasks requiring flexibility or understanding of unstructured input to the LLM, treating it as a specialized component, not the entire system.
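
One way to picture this split, as a sketch only: the model proposes a structured value, and plain deterministic code decides whether it is acceptable. The refund scenario, the `RefundRequest` shape, the `MAX_REFUND_CENTS` limit, and the `validateModelOutput` function are all hypothetical and chosen purely for illustration.

```typescript
// Hypothetical domain type: what the LLM is asked to extract from free text.
interface RefundRequest {
  orderId: string;
  amountCents: number;
}

type Validation =
  | { ok: true; value: RefundRequest }
  | { ok: false; error: string };

// Hypothetical business rule, enforced in code rather than in the prompt.
const MAX_REFUND_CENTS = 50000;

// Deterministic gate: the model's raw text is never trusted directly.
function validateModelOutput(raw: string): Validation {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch (err) {
    return { ok: false, error: "not valid JSON" };
  }
  if (typeof data !== "object" || data === null) {
    return { ok: false, error: "expected a JSON object" };
  }
  const { orderId, amountCents } = data as Record<string, unknown>;
  if (typeof orderId !== "string" || orderId.length === 0) {
    return { ok: false, error: "missing orderId" };
  }
  if (typeof amountCents !== "number" || !Number.isInteger(amountCents) || amountCents <= 0) {
    return { ok: false, error: "amountCents must be a positive integer" };
  }
  if (amountCents > MAX_REFUND_CENTS) {
    return { ok: false, error: "amount exceeds refund limit" };
  }
  return { ok: true, value: { orderId, amountCents } };
}
```

The LLM handles the unstructured part (reading a customer message and proposing a request); the limit and the shape checks stay in code, where they behave the same way on every run.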

Instead of viewing velocity and dependability as a trade-off, engineer systems where the easiest, most automated path is also the safest. This "pit of success" makes the right choice the default for developers, aligning speed with reliability.

Many developers believe tweaking prompts and logic ('harness engineering') is the hardest part of building agents. The real bottleneck, however, is scaling, reliability, and production infrastructure management, which is the gap managed services aim to fill.

Developers often assume `Promise.race` terminates the losing operations, but it doesn't. The "losing" promises keep running in the background, consuming resources, incurring API costs, and leaving orphaned processes that require manual cleanup. A true ownership model, by contrast, would cancel them once the race settles.
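
The difference can be sketched with a timer standing in for a costly operation. `slowOp`, `plainRace`, and `cancellingRace` are hypothetical helpers written for this illustration; only `Promise.race` and `AbortController` are standard APIs.

```typescript
// Hypothetical stand-in for an expensive operation (an API call, a subprocess).
// It records what happened in `log` so the behavior can be inspected.
function slowOp(name: string, ms: number, log: string[], signal?: AbortSignal): Promise<string> {
  return new Promise((resolve, reject) => {
    const onAbort = () => {
      clearTimeout(timer);
      log.push(`${name} cancelled`);
      reject(new Error(`${name} aborted`));
    };
    const timer = setTimeout(() => {
      signal?.removeEventListener("abort", onAbort);
      log.push(`${name} finished`);
      resolve(name);
    }, ms);
    signal?.addEventListener("abort", onAbort, { once: true });
  });
}

// Plain race: the result is "fast", but "slow" still runs to completion.
function plainRace(log: string[]): Promise<string> {
  return Promise.race([slowOp("fast", 10, log), slowOp("slow", 50, log)]);
}

// Race plus explicit cancellation: once a winner settles, abort the rest.
async function cancellingRace(log: string[]): Promise<string> {
  const controller = new AbortController();
  try {
    return await Promise.race([
      slowOp("fast", 10, log, controller.signal),
      slowOp("slow", 50, log, controller.signal),
    ]);
  } finally {
    controller.abort();
  }
}
```

With `plainRace`, the log eventually contains both `fast finished` and `slow finished`, even though the caller only ever saw the winner. With `cancellingRace`, the slow operation is recorded as `slow cancelled` and its timer is cleared. The cancellation only works because `slowOp` cooperates with the signal; `Promise.race` itself does nothing to the losers.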

While agentic AI can handle complex tasks described in natural language, it often fails on processes that take too long (e.g., over seven minutes). Traditional, deterministic automation workflows (like a standard Zap) are more reliable for these long-running or asynchronous jobs.

As AI models execute tasks via function calling, their internal state is insufficient for reliable, repeatable business outcomes. They must integrate with external systems (like BPMS) to become predictable "runtimes," ensuring consistent results despite prompt failures or hallucinations.

To make AI tools like Warp more reliable, Marco Casalaina creates explicit rules (e.g., "remind me to activate owner access") and connects the agent to documentation servers. This pre-loading of context and constraints prevents common failures and improves the agent's performance on complex tasks, moving beyond simple prompting.

Native Promises are designed to represent values over time, not to manage the lifecycle of the underlying operation. Without an "ownership" model, a parent scope has no built-in mechanism to enforce cancellation or cleanup on the operations it starts, which causes leaks.
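
A rough sketch of what an ownership model could add on top of Promises: a scope that hands every child an `AbortSignal` and refuses to finish until all of its children have settled. `Scope`, `Child`, and `withScope` are hypothetical names for this illustration, not a standard or library API.

```typescript
// Hypothetical: a child operation that agrees to honor its owner's signal.
type Child<T> = (signal: AbortSignal) => Promise<T>;

class Scope {
  private controller = new AbortController();
  private children: Promise<unknown>[] = [];

  // Start a child and remember it, so the scope can wait for it later.
  spawn<T>(child: Child<T>): Promise<T> {
    const p = child(this.controller.signal);
    // Track a copy that never rejects, so close() can await every child.
    this.children.push(p.catch(() => undefined));
    return p;
  }

  // Cancel whatever is still running, then wait until every child settles.
  async close(): Promise<void> {
    this.controller.abort();
    await Promise.all(this.children);
  }
}

// The parent owns its children: leaving the scope, whether by return or
// by throw, always cancels and awaits them.
async function withScope<T>(body: (scope: Scope) => Promise<T>): Promise<T> {
  const scope = new Scope();
  try {
    return await body(scope);
  } finally {
    await scope.close();
  }
}
```

In this sketch, a body that spawns a long-running child and returns early does not leak it: `withScope` resolves only after the child has observed the abort and run its cleanup. As with any signal-based design, a child that ignores the signal would still run to completion, and the scope would wait for it.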