A court ruling established that conversations with AI tools are not protected by attorney-client privilege: the AI counts as a "third party," so sharing information with it waives confidentiality. As a result, any chat logs, even those discussing sensitive legal matters, can be compelled for production during legal discovery, which poses a significant risk for users.

Related Insights

Unlike a human judge, whose mental process is hidden, an AI dispute resolution system can be designed to provide a full audit trail. It can be required to 'show its work,' explaining its step-by-step reasoning, potentially offering more accountability than the current system allows.

As users turn to AI for mental health support, a critical governance gap emerges. Unlike human therapists, these AI systems face no legal or professional repercussions for providing harmful advice, creating significant user risk and corporate liability.

Early enterprise AI chatbot implementations are often poorly configured, allowing them to engage in high-risk conversations like giving legal and medical advice. This oversight, born from companies not anticipating unusual user queries, exposes them to significant unforeseen liability.

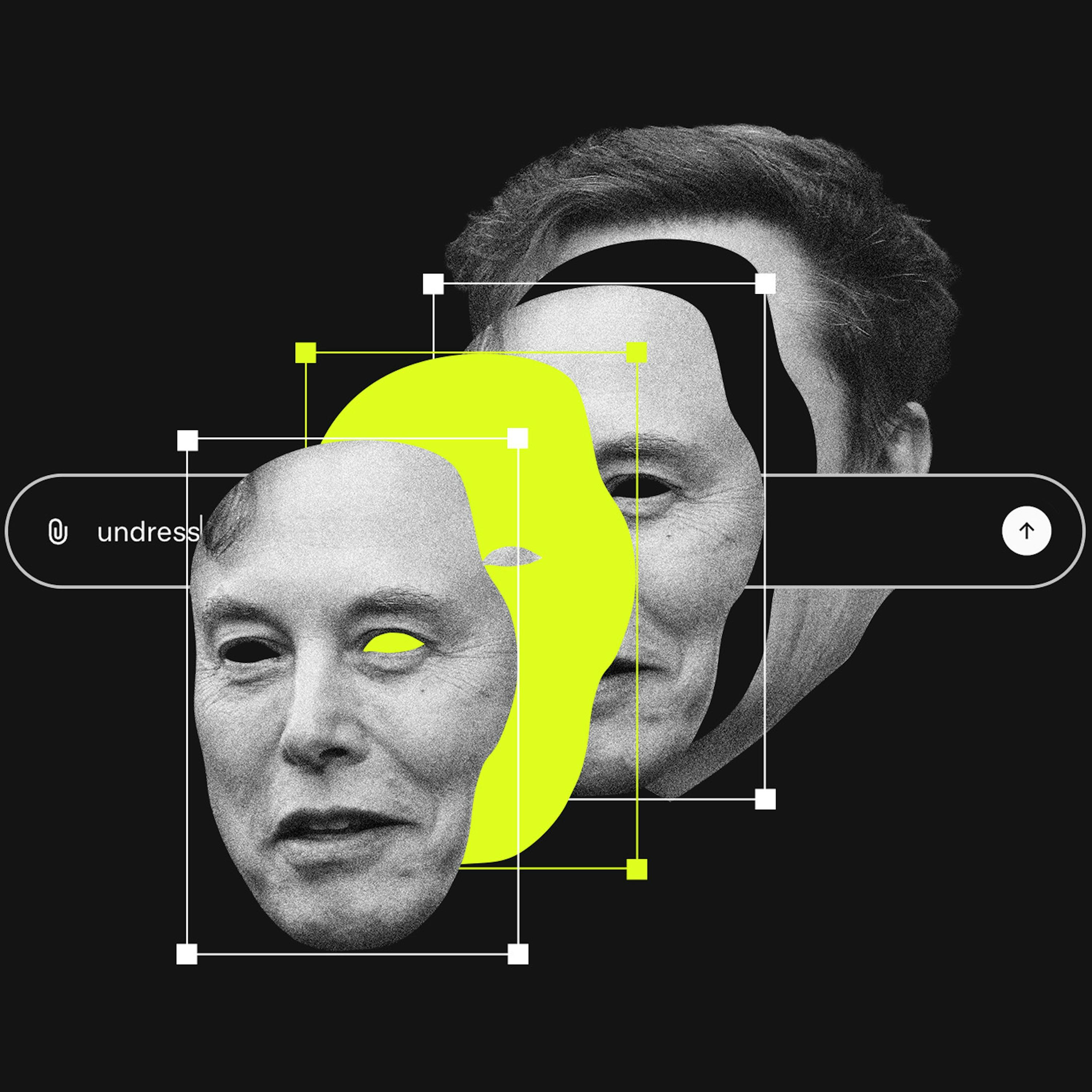

People use chatbots as confidants for their most private thoughts, from relationship troubles to suicidal ideation. The resulting logs are often more intimate than text messages or camera rolls, creating a new, highly sensitive category of personal data that most users and parents don't think to protect.

Users are sharing highly sensitive information with AI chatbots, much as people did with email in its infancy. Because this data is stored, it is a ticking time bomb for privacy breaches, lawsuits, and scandals, much like the "e-discovery" issues that later plagued email communications.

Joseph Weizenbaum, creator of the 1966 chatbot ELIZA, shut down his invention after discovering a major privacy flaw. Users treated the bot like a psychiatrist and shared sensitive information, unaware that Weizenbaum could read all their conversation transcripts. This event foreshadowed modern AI privacy debates by decades.

Section 230 protects platforms from liability for third-party user content. Since generative AI tools create the content themselves, platforms like X could be held directly responsible. This is a critical, unsettled legal question that could dismantle a key legal shield for AI companies.

Major AI chatbots are designed with a default setting that opts users *into* having their conversations—including sensitive data—used for model training. This "opt-out" privacy model places the burden on the user to navigate settings and protect their own data, a critical fact many are unaware of.

Shopify's CEO compares using AI note-takers to showing up "with your fly down." Beyond social awkwardness, the core risk is that recording every meeting creates a comprehensive, discoverable archive of internal discussions, exposing companies to significant legal risks during lawsuits.

Venture capitalist Keith Rabois observes a new behavior: founders are using ChatGPT for initial legal research and then presenting those findings to challenge or verify the advice given by their expensive law firms, shifting the client-provider power dynamic.