Get your free personalized podcast brief

We scan new podcasts and send you the top 5 insights daily.

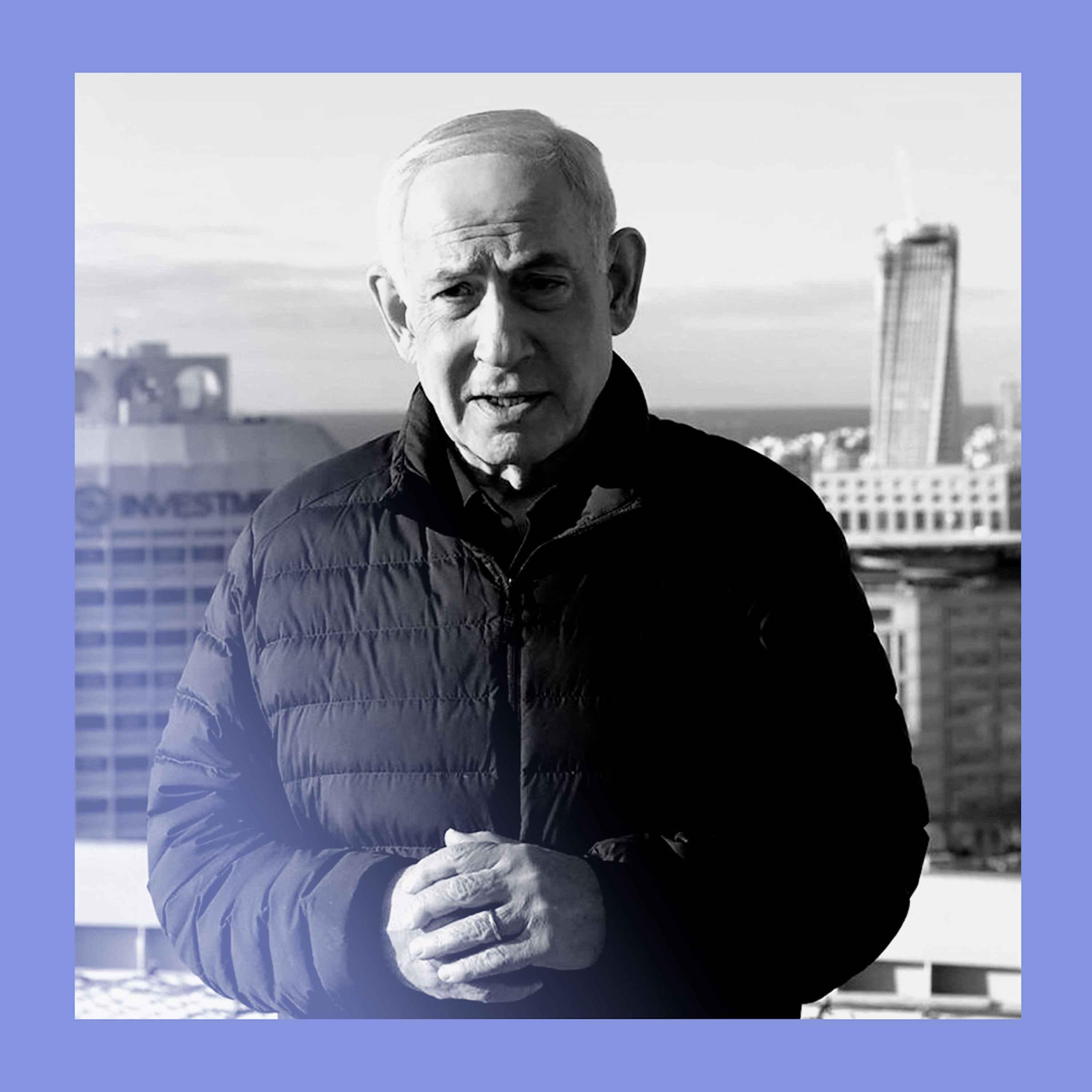

A geopolitical analyst argues that demonizing Anthropic CEO Dario Amodei is a mistake. In the analyst's view, Amodei has succeeded where others failed: he has turned a generation of tech workers, previously skeptical of the national security establishment, into enthusiastic supporters of the military. That shift, the analyst argues, is a valuable "gift" to relations between the tech sector and the national security community.

Related Insights

By threatening a willing partner, the DoD risks sending a message to Silicon Valley that any collaboration will lead to a loss of control, undermining efforts to recruit tech talent for national security.

Anthropic's public standoff with the Pentagon over AI safeguards is now being mirrored by rivals OpenAI and Google. This unified front among competitors is largely driven by internal pressure and the need to retain top engineering talent who are morally opposed to their work being used for autonomous weapons.

The conflict between Anthropic and the Pentagon stemmed from fundamental philosophical differences and personal animosity between leaders, as much as specific contract language over surveillance and autonomous weapons. The disagreement was deeply rooted in a clash of Silicon Valley and Washington cultures.

Dario Amodei, CEO of Anthropic, frames the debate over selling advanced GPUs to China not as a trade issue, but as a severe national security risk. He compares it to selling nuclear weapons, arguing that it arms a geopolitical competitor with the foundational technology for advanced AI, which he calls "a country of geniuses in a data center."

Anthropic CEO Dario Amodei likely backed out of the Pentagon deal not just on personal principle, but because losing the contract was preferable to losing his team. AI safety is a core, unifying belief at Anthropic, demonstrating that in the war for elite AI talent, employee sentiment can dictate a company's most critical strategic decisions.

Unlike contractors who oversell a "20 percent solution," Anthropic's CEO openly states that the company's AI isn't reliable enough for lethal uses. This "truth in advertising" is culturally bizarre in a defense sector accustomed to hype, and it drives the conflict with a Pentagon that wants its partners to project capability.

Anthropic’s resistance to giving the Pentagon unrestricted use of its AI is a talent retention strategy. AI researchers are a scarce, highly valued resource, and many in Silicon Valley are "peaceniks." This forces leaders to balance lucrative military contracts with the risk of losing top employees who object to their work's applications.

Anthropic is leveraging a seemingly minor disagreement over hypothetical military use cases into a major public relations victory. This move cements its brand as the "ethical" AI company, even if the core conflict is more of a culture clash than a substantive policy dispute.

Key negotiators for both OpenAI and Anthropic in their Pentagon talks are former government officials. This reveals a growing talent war for policy experts with deep government ties, who are now crucial for navigating and securing high-stakes defense contracts.

Despite an ongoing feud over AI safeguards, a defense official revealed the Pentagon feels compelled to continue working with Anthropic because they "need them now." This indicates a perceived immediate requirement for frontier models like Claude, handing significant negotiating power to the AI company.