A significant credibility gap is forming between AI executives' talk of "superintelligence" and the often buggy, frustrating reality of using current models. This disconnect devalues serious policy discussions and creates cynicism, with observers noting we are in an "extremely capable tool era," not a "new social contract era."

Related Insights

The public AI debate is a false dichotomy between 'hype folks' and 'doomers.' Both camps operate from the premise that AI is or will be supremely powerful. This shared assumption crowds out a more realistic critique that current AI is a flawed, over-sold product that isn't truly intelligent.

Cohere's co-founder argues that conversations about hypothetical 'digital gods' killing humanity are a distraction. They prevent more practical and urgent discussions about policy solutions for AI-driven wealth inequality and labor market disruption, which are the technology's most pressing societal challenges today.

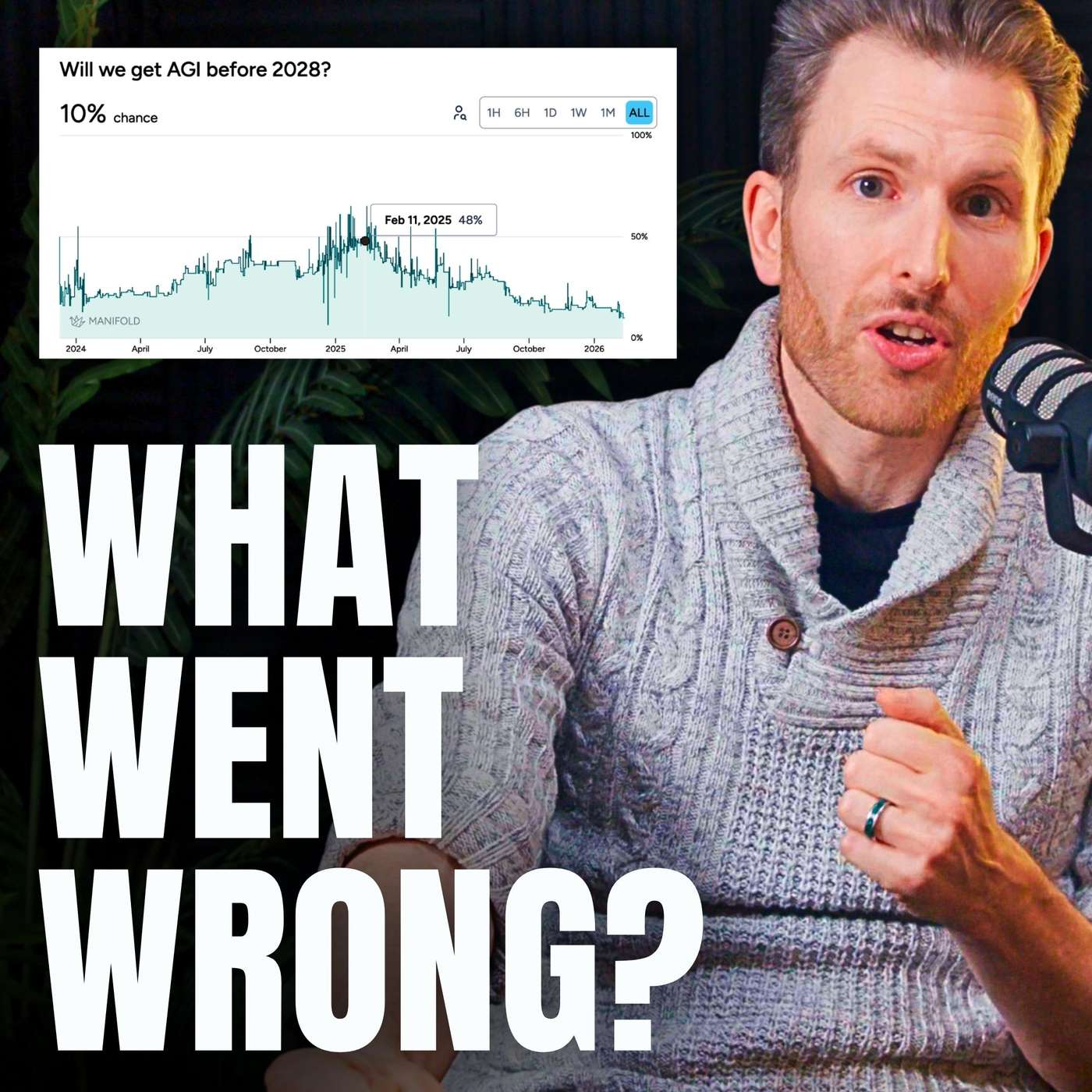

The hype around an imminent Artificial General Intelligence (AGI) event is fading among top AI practitioners. The consensus is shifting to a "Goldilocks scenario" where AI provides massive productivity gains as a synergistic tool, with true AGI still at least a decade away.

People deeply involved in AI perceive its current capabilities as world-changing, while the general public, using free or basic tools, remains largely unaware of the imminent, profound disruption to knowledge work.

There's a stark contrast in AGI timeline predictions. Newcomers and enthusiasts often predict AGI within months or a few years. However, the field's most influential figures, like Ilya Sutskever and Andrej Karpathy, are now signaling that true AGI is likely decades away, suggesting the current paradigm has limitations.

A growing gap exists between AI's performance in demos and its actual impact on productivity. As podcaster Dwarkesh Patel noted, AI models improve at the rapid rate that short-term optimists predict but become useful only at the slower rate that long-term skeptics predict, which helps explain the widespread disillusionment.

A paradox of rapid AI progress is the widening "expectation gap." As users become accustomed to AI's power, their expectations for its capabilities grow even faster than the technology itself. This leads to a persistent feeling of frustration, even though the tools are objectively better than they were a year ago.

The capabilities of free, consumer-grade AI tools are over a year behind those of paid frontier models. Basing your understanding of AI's potential on these limited versions leads to a dangerously inaccurate assessment of the technology's trajectory.

The discourse around AGI is caught in a paradox. Either it is already emerging, in which case it's less a cataclysmic event and more an incremental software improvement, or it remains a perpetually receding future goal. This captures the tension between the hype of superhuman intelligence and the reality of software development.

The perceived limits of today's AI are not inherent to the models themselves; they stem from our failure to build the right "agentic scaffold" around them. There is a "model capability overhang": much more potential can be unlocked with better prompting, context engineering, and tool integrations.