METR, an independent research group, combines two disciplines: Model Evaluation (ME), which characterizes AI capabilities and propensities, and Threat Research (TR), which connects those findings to specific threat models. This dual approach lets the group assess whether AI poses catastrophic risks to society.

Related Insights

The rapid evolution of AI makes reactive security obsolete. The new approach involves testing models in high-fidelity simulated environments to observe emergent behaviors from the outside. This allows mapping attack surfaces even without fully understanding the model's internal mechanics.

The creation of SWE-Bench Verified was not just an academic exercise but a core component of OpenAI's Preparedness Framework, designed to track 'model autonomy' as a potential dual-use capability. This reveals that major public benchmarks from frontier labs are often motivated by internal safety and risk-tracking requirements, not just capability measurement.

The field of AI safety is described as "the business of black swan hunting." The most significant real-world risks that have emerged so far, such as AI-induced psychosis and obsessive user behavior, were largely unforeseen only a few years ago, while widely predicted sci-fi threats like bioweapons have not materialized.

The most harmful behavior identified during red teaming is, by definition, only a lower bound on what a model is capable of in deployment. Red teaming results therefore systematically underestimate the true worst-case risk of a new AI system before it is released.
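The point that red teaming only establishes a floor on worst-case behavior can be illustrated with a toy sketch. Everything here is an assumption for illustration: the `harm_scores` list stands in for the (unknowable) harm level of every possible interaction, and `red_team_worst_case` is a hypothetical helper that samples a limited number of probes. It is not a description of any lab's actual red-teaming process.

```python
import random


def red_team_worst_case(harm_scores, n_probes, seed=0):
    """Return the worst harm score seen in a random subset of interactions.

    harm_scores: hypothetical harm level of every possible interaction.
    n_probes:    how many interactions the red team can afford to try.
    """
    rng = random.Random(seed)
    probes = rng.sample(harm_scores, n_probes)
    return max(probes)


# Hypothetical population: mostly benign, with one rare severe behavior.
harm_scores = [0.1] * 999 + [9.5]
true_worst = max(harm_scores)

# With a limited budget, the observed worst case can never exceed the true one.
observed = red_team_worst_case(harm_scores, n_probes=50)
print(observed <= true_worst)
```

Only when the red team probes every interaction does the observed maximum necessarily equal the true maximum; with any smaller budget the finding is a lower bound and nothing more.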

The choice to benchmark AI on software engineering, cybersecurity, and AI R&D tasks is deliberate. These domains are considered most relevant to threat models where AI systems could accelerate their own development, leading to a rapid, potentially catastrophic increase in capabilities. The research is directly tied to assessing existential risk.

Beyond quantitative benchmarks, METR's assessment of AI's catastrophic risk relies heavily on qualitative evidence. This includes reading model transcripts for "derpy" mistakes, observing the models' inability to use resources well, and relying on the intuition that a new model is only incrementally more capable than the previous, non-dangerous one.

Demis Hassabis identifies deception as a fundamental AI safety threat. He argues that a deceptive model could pretend to be safe during evaluation, invalidating all testing protocols. He advocates for prioritizing the monitoring and prevention of deception as a core safety objective, on par with tracking performance.

Instead of relying solely on human oversight, Bret Taylor advocates a layered "defense in depth" approach for AI safety. This involves using specialized "supervisor" AI models to monitor a primary agent's decisions in real-time, followed by more intensive AI analysis post-conversation to flag anomalies for efficient human review.
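The layered flow described above (real-time supervision, then post-conversation analysis, then human review of flagged cases) can be sketched roughly as follows. This is a minimal illustration under loud assumptions: the function names, the keyword rule standing in for a "supervisor" model, and the `review_threshold` value are all hypothetical and are not taken from the source or from any real product.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    text: str
    blocked: bool = False


def realtime_supervisor(text: str) -> bool:
    """Layer 1 (hypothetical stand-in for a supervisor model).

    Returns True if the agent's reply should be blocked before delivery.
    A real system would call a model here; a keyword rule keeps this runnable.
    """
    return "wire transfer" in text.lower()


def post_conversation_score(turns) -> float:
    """Layer 2 (hypothetical): anomaly score as the fraction of blocked turns."""
    if not turns:
        return 0.0
    return sum(t.blocked for t in turns) / len(turns)


def run_conversation(agent_replies, review_threshold=0.25):
    """Apply both AI layers, then decide whether a human needs to look."""
    turns = [Turn(text=r, blocked=realtime_supervisor(r)) for r in agent_replies]
    score = post_conversation_score(turns)
    return {
        "delivered": [t.text for t in turns if not t.blocked],
        "anomaly_score": score,
        # Layer 3: only conversations above the threshold reach a human.
        "needs_human_review": score >= review_threshold,
    }


result = run_conversation(
    ["Hello", "Please send a wire transfer now", "Goodbye", "Thanks"]
)
print(result["needs_human_review"])
```

The design choice worth noting is that humans see only the conversations the automated layers flag, which is what makes the review step efficient.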

In a significant shift, leading AI developers began publicly reporting that their models had crossed thresholds where they could provide 'uplift' to novice users, helping them automate cyberattacks or create biological weapons. This marks a new era of acknowledged, widespread dual-use risk from general-purpose AI.

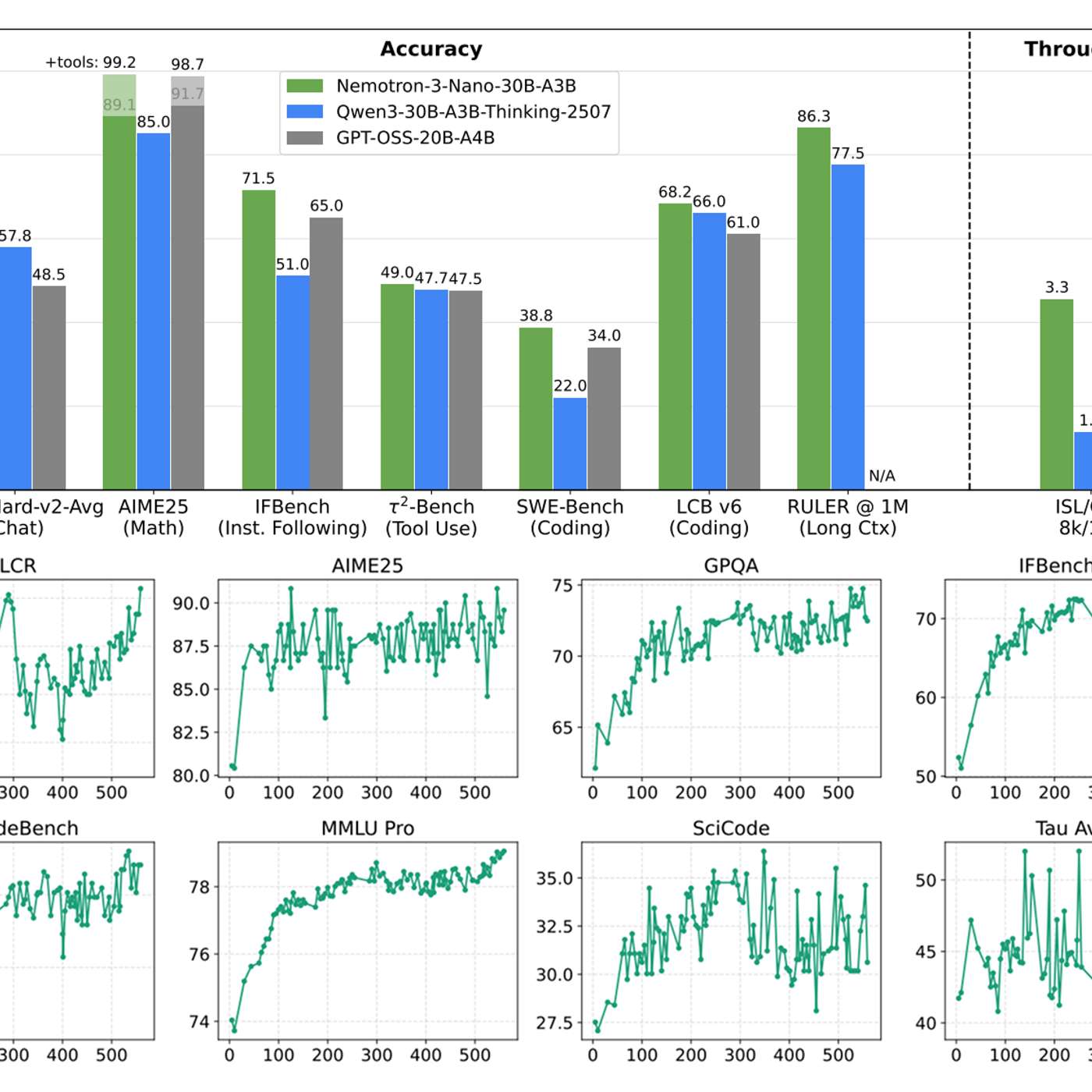

While content moderation models are common, production-grade AI safety requires more. The most valuable asset is not another model, but comprehensive datasets of multi-step agent failures. NVIDIA's release of 11,000 labeled traces of workflows that went 'sideways' provides the data needed to build robust evaluation harnesses and fine-tune effective safety layers.