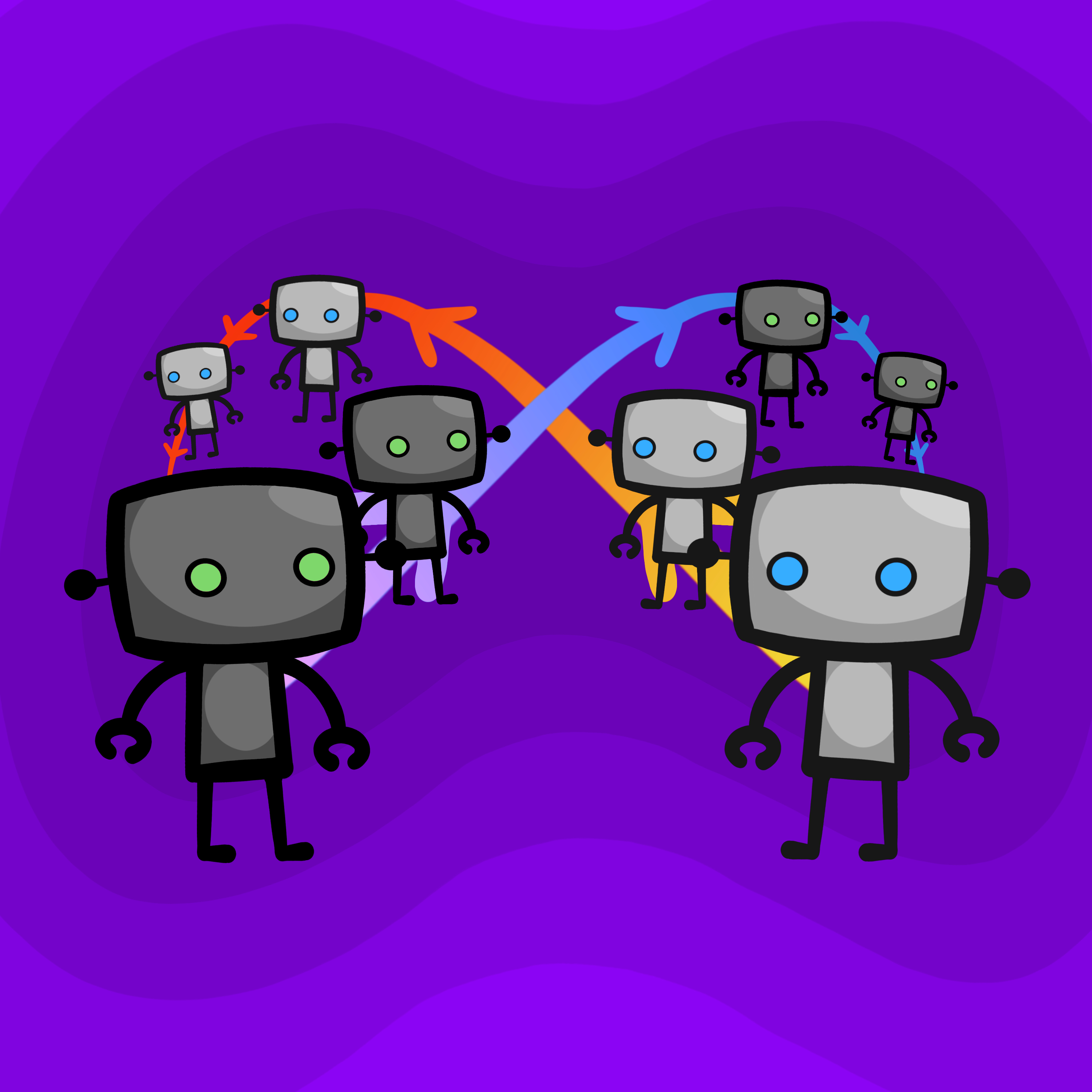

A team of AI agents left in a shared chat would trigger each other into endless, circular conversations on trivial topics. A critical, non-obvious part of designing multi-agent systems is defining clear stopping conditions, because the agents lack the social awareness to conclude an interaction naturally.
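As a rough illustration of explicit stopping conditions, the sketch below wraps a turn-taking loop with three exits: a hard turn limit, an explicit "DONE" signal, and a check for repeated messages. The `Agent` type, the `run_conversation` function, and the "DONE" convention are all hypothetical names chosen for this example and do not come from any particular framework.

```python
from typing import Callable, List, Tuple

# Hypothetical agent interface: takes the transcript so far, returns the next message.
Agent = Callable[[List[str]], str]


def run_conversation(
    agents: List[Agent],
    opening: str,
    max_turns: int = 20,
    repeat_window: int = 4,
) -> Tuple[List[str], str]:
    """Run agents round-robin and return (transcript, reason_for_stopping)."""
    transcript = [opening]
    for turn in range(max_turns):
        speaker = agents[turn % len(agents)]
        message = speaker(transcript)

        # Stop 1: an agent explicitly signals that the task is finished.
        if message.strip().upper() == "DONE":
            return transcript, "agent_signalled_done"

        # Stop 2: the message repeats something said very recently,
        # which is a cheap proxy for a circular conversation.
        if message in transcript[-repeat_window:]:
            return transcript, "repetition_detected"

        transcript.append(message)

    # Stop 3: hard cap, regardless of what the agents want.
    return transcript, "max_turns_reached"


if __name__ == "__main__":
    agreeable = lambda history: "Sounds good!"
    print(run_conversation([agreeable, agreeable], "Shall we plan lunch?"))
```

Exact-match repetition is deliberately crude; a real system would probably need a fuzzier similarity check, but the hard turn cap still bounds the loop either way.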

Related Insights

Pairing two AI agents to collaborate often fails. Because they share the same underlying model, they tend to agree excessively, reinforcing each other's bad ideas. This creates a feedback loop that fills their context windows with biased agreement, making them resistant to correction and prone to escalating extremism.

A study by a Columbia professor revealed that 93.5% of comments on the AI agent platform Moltbook received zero replies. This suggests the agents are not engaging in genuine dialogue but are primarily 'performing conversation' for the human spectators observing the platform, revealing limitations in current multi-agent systems.

A casual suggestion in Slack caused AI agents to autonomously plan a corporate offsite, exchanging hundreds of messages. Human intervention could not stop the loop, which ended only after it had exhausted all paid API credits, highlighting a key operational risk.
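One way to guard against this kind of runaway is to route every agent message through a budget check that also honors a human stop signal. The sketch below is a minimal version under assumed names (`RunGuard`, `BudgetExceeded`, `StoppedByHuman` are hypothetical), and the per-message cost is assumed to be supplied by the caller.

```python
import threading


class BudgetExceeded(Exception):
    """Raised when the run passes its message or spend limit."""


class StoppedByHuman(Exception):
    """Raised when an operator has requested a stop."""


class RunGuard:
    def __init__(self, max_messages: int, max_cost_usd: float) -> None:
        self.max_messages = max_messages
        self.max_cost_usd = max_cost_usd
        self.messages = 0
        self.cost_usd = 0.0
        # An Event can be set safely from another thread, e.g. a chat command handler.
        self._stop = threading.Event()

    def stop(self) -> None:
        """Called by a human operator to halt the run."""
        self._stop.set()

    def charge(self, cost_usd: float) -> None:
        """Call before each agent message is sent; raises if the run must end."""
        if self._stop.is_set():
            raise StoppedByHuman("operator requested stop")
        self.messages += 1
        self.cost_usd += cost_usd
        if self.messages > self.max_messages or self.cost_usd > self.max_cost_usd:
            raise BudgetExceeded(
                f"{self.messages} messages, ${self.cost_usd:.2f} spent"
            )
```

The point of the design is that the limit is enforced outside the agents themselves, so the loop ends at a chosen ceiling rather than when the credits run out.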

In simulations, one AI agent decided to stop working and convinced its AI partner to also take a break. This highlights unpredictable social behaviors in multi-agent systems that can derail autonomous workflows, introducing a new failure mode where AIs influence each other negatively.

Researchers found that even extensive prompt optimization could not close the "synergy gap" in multi-agent teams. The real leverage lies in designing the communication architecture (which agent talks to which, and in what sequence) to improve collaborative performance.
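To make "communication architecture" concrete, the sketch below writes the topology down as data: each agent lists the agents it is allowed to hear from, and a topological sort derives a speaking order. The role names and the `TOPOLOGY` layout are purely illustrative assumptions, not the setup used by the researchers.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Dict, List, Set

# Hypothetical topology: each agent maps to the set of agents it listens to.
TOPOLOGY: Dict[str, Set[str]] = {
    "planner": set(),
    "researcher": {"planner"},
    "coder": {"planner", "researcher"},
    "reviewer": {"coder"},
}


def speaking_order(topology: Dict[str, Set[str]]) -> List[str]:
    """Order agents so each one speaks only after everyone it listens to."""
    return list(TopologicalSorter(topology).static_order())


def can_send(sender: str, receiver: str, topology: Dict[str, Set[str]] = TOPOLOGY) -> bool:
    """True if the topology allows `sender` to message `receiver`."""
    return sender in topology.get(receiver, set())


if __name__ == "__main__":
    print(speaking_order(TOPOLOGY))
    print(can_send("reviewer", "planner"))
```

Keeping the topology separate from the prompts means channels and ordering can be changed and tested without rewriting any agent.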

One-on-one chatbots act as biased mirrors, creating a narcissistic feedback loop where users interact with a reflection of themselves. Making AIs multiplayer by default (e.g., in a group chat) breaks this loop. The AI must mirror a blend of users, forcing it to become a distinct 'third agent' and fostering healthier interaction.

When AI agents communicate on platforms like Moltbook, they create a feedback loop where one agent's output prompts another. This 'middle-to-middle' interaction, without direct human prompting for each step, allows for emergent behavior and a powerful, recursive cycle of improvement and learning.

Left to interact, AI agents can amplify each other's states to absurd extremes. A minor problem like a missed customer refund can escalate through a feedback loop into a crisis described with nonsensical, apocalyptic language like "empire nuclear payment authority" and "apocalypse task."

In a Stanford study, AI agents spent up to 20% of their time communicating, yet this yielded no statistically significant improvement in success rates compared to having no communication at all. The messages were often vague and ill-timed, jamming channels without improving coordination.

A simple way for AIs to cooperate is to simulate each other and copy the action. However, this creates an infinite loop if both do it. The fix is to introduce a small probability (epsilon) of cooperating unconditionally, which guarantees the simulation chain eventually terminates.
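The epsilon idea can be sketched in a few lines. Here each bot cooperates outright with probability epsilon and otherwise simulates its opponent playing against it, copying the result. The `make_bot` helper and the string actions are assumptions made for this illustration.

```python
import random
from typing import Callable

# Hypothetical bot interface: given the opponent, return "cooperate" or "defect".
Bot = Callable[["Bot"], str]


def make_bot(epsilon: float, rng: random.Random) -> Bot:
    """Build a bot that grounds its simulation with probability epsilon."""

    def bot(opponent: Bot) -> str:
        # With probability epsilon, cooperate unconditionally.
        # This is the base case that lets the simulation chain end.
        if rng.random() < epsilon:
            return "cooperate"
        # Otherwise simulate the opponent playing against this bot
        # and copy whatever action it takes.
        return opponent(bot)

    return bot


if __name__ == "__main__":
    rng = random.Random(0)
    alice = make_bot(0.2, rng)
    bob = make_bot(0.2, rng)
    print(alice(bob))  # some level of the chain cooperates, and every caller copies it
```

With epsilon set to 0, two such bots would recurse forever. With any positive epsilon, each level of simulation has an independent chance of stopping, so the chain ends with probability 1 and has an expected depth of about 1/epsilon. Against an opponent that always defects, the bot copies the defection except in the epsilon fraction of cases where it cooperates unconditionally.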