Unlike stealth technology developed in secret defense labs, AI is an imported commercial product. This fundamental difference means the military must contend with the values, ethical debates, and employee activism of the commercial tech sector, creating friction and power dynamics that are novel in the history of the military-industrial complex.

Related Insights

The Pentagon expects to buy AI with full control, just as it buys an F-35 jet from Lockheed, without the manufacturer dictating its use. AI firms like Anthropic see their product as an evolving service requiring ongoing involvement, creating a fundamental paradigm clash in government contracting.

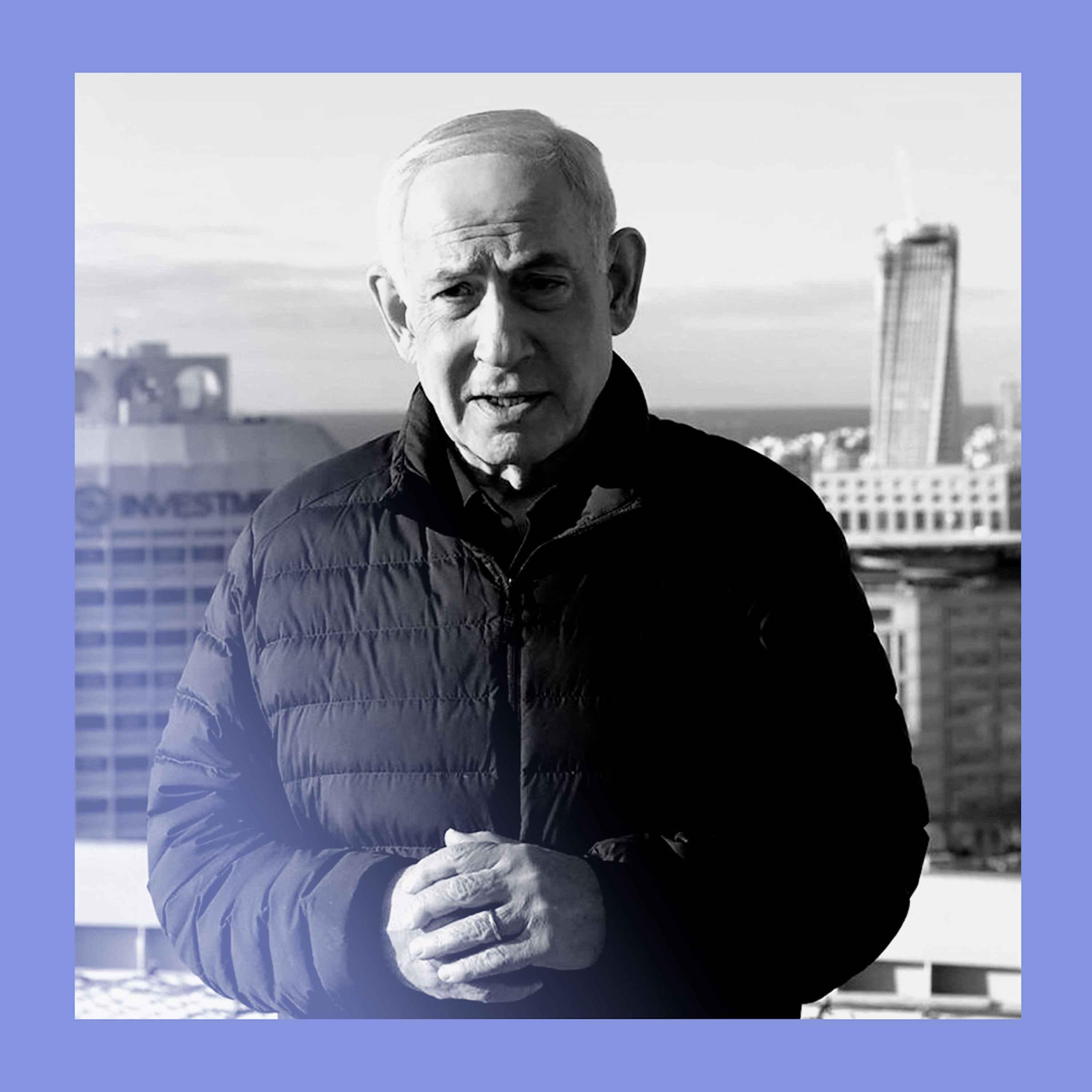

The conflict between Anthropic and the Pentagon stemmed as much from fundamental philosophical differences and personal animosity between leaders as from specific contract language over surveillance and autonomous weapons. The disagreement was deeply rooted in a clash between Silicon Valley and Washington cultures.

Unlike nuclear energy or the space race where government was the primary funder, AI development is almost exclusively led by the private sector. This creates a novel challenge for national security agencies trying to adopt and integrate the technology.

The standoff between Anthropic and the Pentagon marks the moment abstract discussions about AI ethics became concrete geopolitical conflicts. The power to define the ethical boundaries of AI is now synonymous with the power to shape societal norms and military doctrine, making it a highly contested and critical area of national power.

The conflict between Anthropic and the Pentagon isn't about the immediate creation of autonomous weapons. Instead, it's a fundamental disagreement over whether the military can use AI for any 'lawful use' or if the tech companies get to impose their own ethical restrictions and acceptable use policies, effectively setting the rules of engagement.

By refusing to allow its models to be used for lethal operations, Anthropic is challenging the U.S. government's authority. This dispute will set a precedent for whether AI companies act as neutral infrastructure or as political entities that can restrict a nation's military use of their technology.

The Pentagon labeled Anthropic a "supply chain risk" not due to a technical flaw, but because it dislikes the AI's embedded "constitution" and safety guardrails. This reveals a fundamental clash over who controls the values and behaviors of AI used in defense, turning a tech partnership into a political battle.

Anthropic’s resistance to giving the Pentagon unrestricted use of its AI is a talent retention strategy. AI researchers are a scarce, highly valued resource, and many in Silicon Valley are "peaceniks." This forces leaders to balance lucrative military contracts with the risk of losing top employees who object to their work's applications.

The core conflict is not a simple contract dispute, but a fundamental question of governance. Should unelected tech executives set moral boundaries on military technology, or should democratically elected leaders have full control over its lawful use? This highlights the challenge of integrating powerful, privately-developed AI into state functions.

Ben Thompson argues that if AI is as powerful as its creators claim, they must anticipate a forceful government response. Private companies unilaterally setting restrictions on dual-use technology will be seen as an intolerable challenge to state power, leading to direct conflict.