Most public criticism of AI is not driven by high-minded philosophy but by a fundamental fear of personal financial loss. People worry AI will threaten their livelihood and then rationalize this fear by couching it in noble-sounding arguments about the dangers to society.

Related Insights

Americans see AI not as a tool for progress, but as the ultimate weapon for a new corporate ethos where profits surge *because* of layoffs and offshoring. This breaks the historical assumption that company success benefits employees, making workers view AI as an existential threat.

The public conversation about AI focuses on job loss, which generates immense fear. This unaddressed fear leads to political polarization and antisocial behavior, or "social ripples." These emotional reactions pose a greater societal threat than the technological disruption itself.

By Frédéric Bastiat's "seen and unseen" principle, AI doomerism is a classic economic fallacy. It focuses on tangible job displacement ("the seen") while completely missing the new industries, roles, and creative potential that technology inevitably unlocks ("the unseen"), a pattern repeated throughout history.

Many people's negative opinions on AI-generated content stem from a deep-seated fear of their jobs becoming obsolete. This emotional reaction will fade as AI content becomes indistinguishable from human-created content, making the current debate a temporary, fear-based phenomenon.

Most business professionals who are against AI haven't done their homework. Their opinion is a defense mechanism rooted in fear of financial loss and an unwillingness to put in the effort to understand the new technology. Vaynerchuk calls this a profoundly bad business strategy, one based on fear rather than fact.

When faced with a disruptive technology like AI, many business leaders default to raising theoretical societal concerns ("it's bad for society"). This is often a defense mechanism to avoid the hard work of learning and adapting, using high-minded objections to mask inaction.

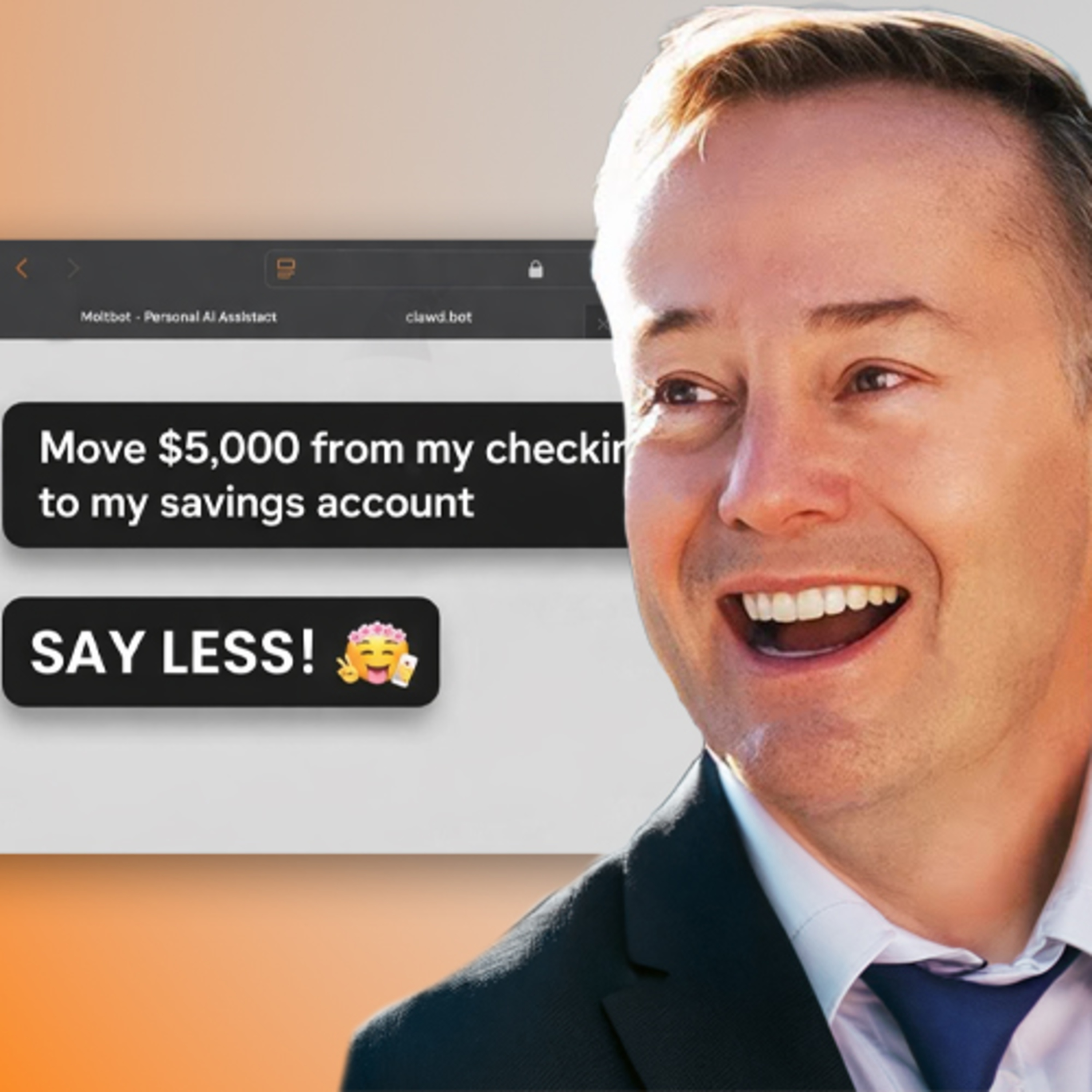

AI leaders often pitch their technology with a dual warning: it will automate jobs, and it poses existential risks. This "cursed microwave" pitch, as Noah Smith describes it, is a terrible value proposition that alienates the public and provides ammunition for regulators pushing to halt AI development.

AI is positioned to become a universal scapegoat for economic anxieties. Executives can cite AI efficiency to justify layoffs and boost stock prices, even if business is poor. Simultaneously, workers can blame AI for job losses, regardless of the true economic drivers like tariffs or market downturns.

The most significant risk to AI development is not a technical challenge but a widespread public outcry from those whose jobs are displaced. This could lead to a "burn down OpenAI" mentality, resulting in crippling regulations that halt progress out of fear and sympathy for the displaced.

While early media coverage focused on doomsday scenarios, the primary drivers of broad public skepticism are far more immediate. Concerns about white-collar job loss and the devaluation of human art are fueling the anti-AI movement much more effectively than abstract fears of superintelligence.