Without clear government guardrails for AI, the industry exists in a "Wild West" state. CEO virtue signaling and press releases are filling the void, creating chaos and causing public optimism about AI to crater from nearly 90% to just 10%, which ultimately harms the industry's long-term viability.

Related Insights

While the public focuses on AI's potential, a small group of tech leaders is using the current unregulated environment to amass unprecedented power and wealth. The federal government is even blocking state-level regulations, ensuring these few individuals gain extraordinary control.

When companies like OpenAI and Anthropic pull products due to risk, it's a clear signal that they are unable to self-govern. This action is interpreted as a plea for government oversight, as relying on the social conscience of a few CEOs is an unsustainable model.

With widespread public anxiety about AI and a lack of clear federal leadership, there is a significant political opening. A candidate who can articulate a sensible vision for AI regulation—one that protects citizens while fostering innovation—could capture the attention of a worried electorate.

The narrative of AI doom isn't just organic panic. It's being leveraged by established players who are actively seeking "regulatory capture." They aim to create a cartel that chokes off startup innovation from the outset.

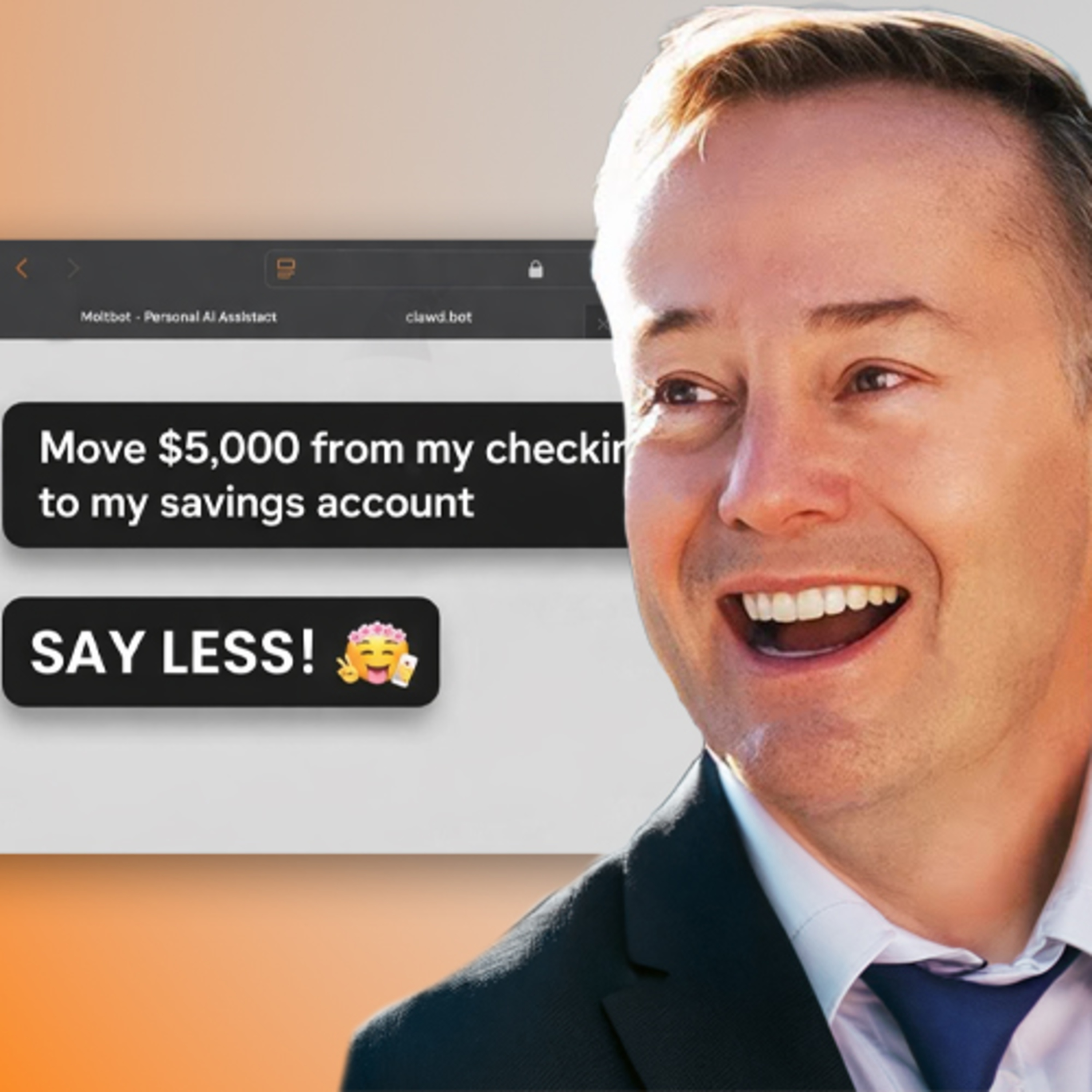

Facing growing moral panic, the AI industry's plan appears to be moving so fast that regulation becomes impossible. By building data centers and deploying models at breakneck speed, companies aim to make their technology ubiquitous before any effective policy can form.

The AI industry faces a major public relations problem. Its two most visible leaders are Anthropic's CEO, who promotes "doomer" narratives, and OpenAI's CEO, who is dogged by accusations of being a sociopath. Together they create a negative public image for the entire field.

AI leaders' apocalyptic messaging about sentient AI and job destruction is a strategy to attract massive investment and potentially trigger regulatory capture. This "A/B testing" of messages creates a severe PR problem, making AI deeply unpopular with the public.

The AI industry's public communication strategy, which heavily emphasizes risks and downplays tangible benefits, is backfiring. By constantly validating fears without clearly articulating a positive vision, AI leaders are inadvertently encouraging public skepticism and making people question why the technology should exist at all.

Because AI is so new, there are no established best practices or regulations for its use. This creates a critical but temporary window where every organization's choices matter more. The precedents set now by early adopters in business, government, and education will significantly influence how AI is integrated into society.

Widespread public discontent with AI is not just a PR problem; it's a political cloud that could lead to the election of officials who enact strict regulations. This could "disembowel the industry," representing a significant business risk for AI companies that ignore the public's fear of job displacement.