Copernicus's simpler heliocentric model was less accurate than the heavily tweaked Ptolemaic system. This shows that scientific progress isn't a steady climb in accuracy: a new, conceptually superior framework might perform worse at first. It needs further refinement, such as the refinement Kepler provided for Copernicus, before it can realize its full potential.

Related Insights

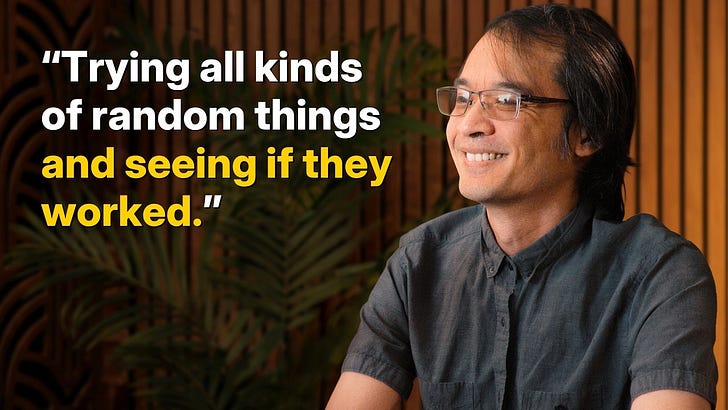

Kepler's method of testing numerous, often strange, hypotheses against Tycho Brahe's precise data mirrors how AIs can generate and verify countless ideas. This uncovers empirical regularities that can later fuel deeper theoretical understanding, much like Newton's laws explained Kepler's findings.

True scientific progress comes from being proven wrong. When an experiment falsifies a prediction, it definitively rules out a candidate model of reality, which advances knowledge. This mindset encourages researchers to treat incorrect hypotheses as learning opportunities rather than failures, each one bringing them closer to understanding the world.

Even Donald Hoffman, a proponent of the consciousness-first model, admits that his emotions and intuition resist his own theory. He relies solely on the logical force of mathematics to move forward, illustrating how groundbreaking ideas can feel profoundly wrong before they are proven.

A new scientific theory isn't valuable if it only recategorizes what we already know. Its true merit lies in suggesting an outrageous, unique, and testable experiment that no other existing theory could conceive of. Without this, it's just a reframing of old ideas.

Current AI can learn to predict complex patterns, like planetary orbits, from data. However, it struggles to abstract the underlying causal laws, such as Newton's second law (F = ma). This leap to a higher level of abstraction remains a fundamental challenge that goes beyond pattern recognition.

The strength of scientific progress comes from "individual humility": the constant process of questioning assumptions and actively searching for errors. Embracing the possibility of being wrong, and doubting one's own work, is not a weakness but a superpower that leads to breakthroughs.

AGI won't be achieved by pattern-matching existing knowledge. A real benchmark is whether a model can take anomalous data (like the unexplained precession of Mercury's orbit) and synthesize a fundamentally new representation of the universe, as Einstein did, moving beyond correlation to a new causal model.

Reflecting on his PhD, Terry Rosen emphasizes that failed experiments are often the most revealing. Instead of discarding negative results, scientists should analyze them deeply. Understanding *why* something didn't work provides critical insights that are essential for iteration and eventual success.

The powerful new telescopes of the late 19th century were not yet good enough to show Mars clearly, but were just powerful enough to reveal indistinct features. This intermediate level of technology produced optical illusions: astronomers' brains "connected the dots" and perceived canals where none existed.

Current LLMs fail at science because they lack the ability to iterate. True scientific inquiry is a loop: form a hypothesis, conduct an experiment, analyze the result (even when the hypothesis turns out to be wrong), and refine. AI needs this same iterative loop with the real world to make genuine discoveries.