Anthropic's "Managed Agents" is built on the premise that any specific "harness" is temporary, because its assumptions become outdated as models improve. Anthropic is building a "meta-harness": an underlying infrastructure designed to outlast any single implementation, so that individual harnesses are easily swappable and disposable.
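To make the "swappable harness" idea concrete, here is a minimal sketch in Python. It is not Anthropic's API: `MetaHarness`, `SingleShotHarness`, `PlanThenActHarness`, and the `run`/`swap` methods are hypothetical names invented for illustration. The assumption is that the long-lived layer owns durable state (here, a session log) while each harness only decides how a task is carried out.

```python
from typing import List, Protocol


class Harness(Protocol):
    """Hypothetical interface every disposable harness implements."""

    def run(self, task: str, log: List[str]) -> str: ...


class SingleShotHarness:
    """Illustrative harness: handles the task in one step."""

    def run(self, task: str, log: List[str]) -> str:
        log.append(f"single-shot: {task}")
        return f"answer({task})"


class PlanThenActHarness:
    """Illustrative harness: records a plan step before acting."""

    def run(self, task: str, log: List[str]) -> str:
        log.append(f"plan: {task}")
        log.append(f"act: {task}")
        return f"answer({task})"


class MetaHarness:
    """Long-lived layer (assumed design): keeps the session log and
    delegates each task to whichever harness is currently installed."""

    def __init__(self, harness: Harness) -> None:
        self.harness = harness
        self.log: List[str] = []

    def swap(self, harness: Harness) -> None:
        # Replacing the harness leaves the accumulated log untouched.
        self.harness = harness

    def run(self, task: str) -> str:
        return self.harness.run(task, self.log)
```

In this sketch, calling `swap` mid-session changes how later tasks are handled while the log written by the earlier harness survives, which is the property the paragraph above attributes to a meta-harness.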

Related Insights

Performance gains increasingly come from the "harness"—the surrounding system of tools, data connections, and agentic workflows—not the underlying model. Stanford's "meta-harness" concept shows a 6x performance gap on the same model, suggesting the product layer is where real innovation and competitive advantage now lie.

While building intricate frameworks (scaffolding) to correct model behavior is effective now, it may become obsolete. The speaker suggests it's better to focus on giving models more fundamental capabilities and trust that future, more generalized models will handle tasks without needing such hand-holding.

The real intellectual property and performance driver for advanced AI systems like Claude Code isn't the underlying model, but the surrounding orchestration layer. This "agent harness" manages memory, tools, and context, and has become the key competitive differentiator.

The true building block of an AI feature is the "agent"—a combination of the model, system prompts, tool descriptions, and feedback loops. Swapping an LLM is not a simple drop-in replacement; it breaks the agent's behavior and requires re-engineering the entire system around it.

An AI coding agent's performance is driven more by its "harness"—the system for prompting, tool access, and context management—than the underlying foundation model. This orchestration layer is where products create their unique value and where the most critical engineering work lies.

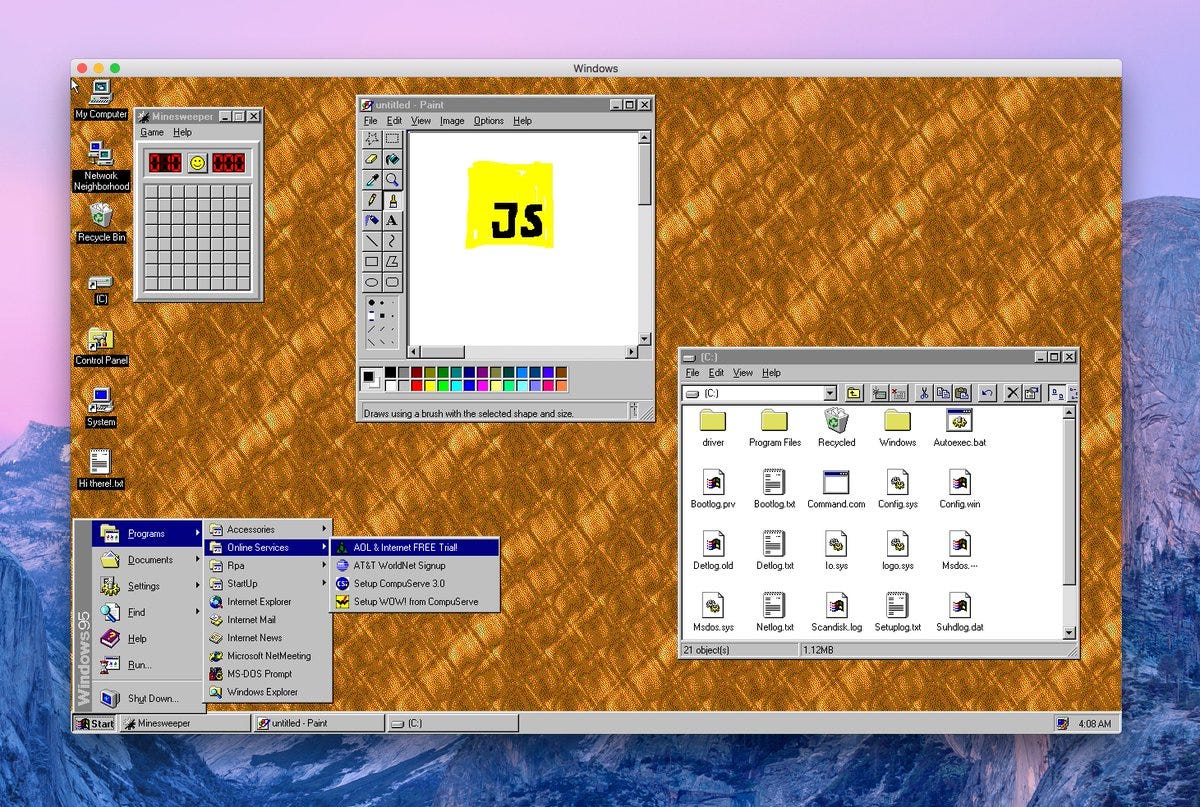

Early agent development used simple frameworks ("scaffolds") to structure model interactions. As LLMs grew more capable, the industry moved to "harnesses"—more opinionated, "batteries-included" systems that provide default tools (like planning and file systems) and handle complex tasks like context compaction automatically.

Platforms for running AI agents are called 'agent harnesses.' Their primary function is to provide the infrastructure for the agent's 'observe, think, act' loop, connecting the LLM 'brain' to external tools and context files, similar to how a car's chassis supports its engine.
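The 'observe, think, act' loop can be sketched in a few lines of Python. This is an illustrative toy under stated assumptions, not any real harness: `stub_model` stands in for an LLM call, `TOOLS` is a hypothetical registry with a single tool, and the `call <tool> <arg>` / `final <answer>` text protocol is made up for the example.

```python
from typing import Callable, Dict, List

# Hypothetical tool registry: tool names map to plain Python callables.
TOOLS: Dict[str, Callable[[str], str]] = {
    "upper": lambda text: text.upper(),
}

OBS_PREFIX = "observation: "


def stub_model(history: List[str]) -> str:
    """Stand-in for an LLM: asks for one tool call, then gives a final answer."""
    if not any(line.startswith(OBS_PREFIX) for line in history):
        return "call upper hello"
    return "final " + history[-1][len(OBS_PREFIX):]


def run_agent(task: str,
              model: Callable[[List[str]], str] = stub_model,
              max_steps: int = 5) -> str:
    """Run the loop until the model returns a final answer or the budget runs out."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        decision = model(history)                  # think
        kind, _, rest = decision.partition(" ")
        if kind == "final":
            return rest
        tool_name, _, arg = rest.partition(" ")
        result = TOOLS[tool_name](arg)             # act
        history.append(OBS_PREFIX + result)        # observe
    raise RuntimeError("step budget exhausted")
```

The harness here is everything except `stub_model`: the history it maintains, the tool registry, the step budget, and the parsing of the model's decisions. Swapping in a real model would leave that surrounding structure in place.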

Anthropic's new offering provides a managed 'harness' and production infrastructure, abstracting away the complex distributed systems engineering needed to run agents at scale. This allows companies to focus on their core business logic rather than DevOps, drastically reducing time-to-market for functional AI agents.

Beyond a technical concept for coding agents, "harness engineering" provides a powerful mental model for enterprise AI adoption. It reframes the challenge from simply deploying models to redesigning the entire organizational system—processes, data access, and feedback loops—to create an environment where AI capabilities can truly succeed.

Top-tier language models are converging in quality and becoming commoditized. The real differentiator in agent performance is now the 'harness': the specific context, tools, and skills you provide. A minimalist, well-crafted harness on a good model will outperform a bloated setup on a great one.