Building intricate frameworks (scaffolding) to correct model behavior is effective now, but it may become obsolete. The speaker suggests focusing instead on giving models more fundamental capabilities and trusting that future, more general models will handle tasks without such hand-holding.

Related Insights

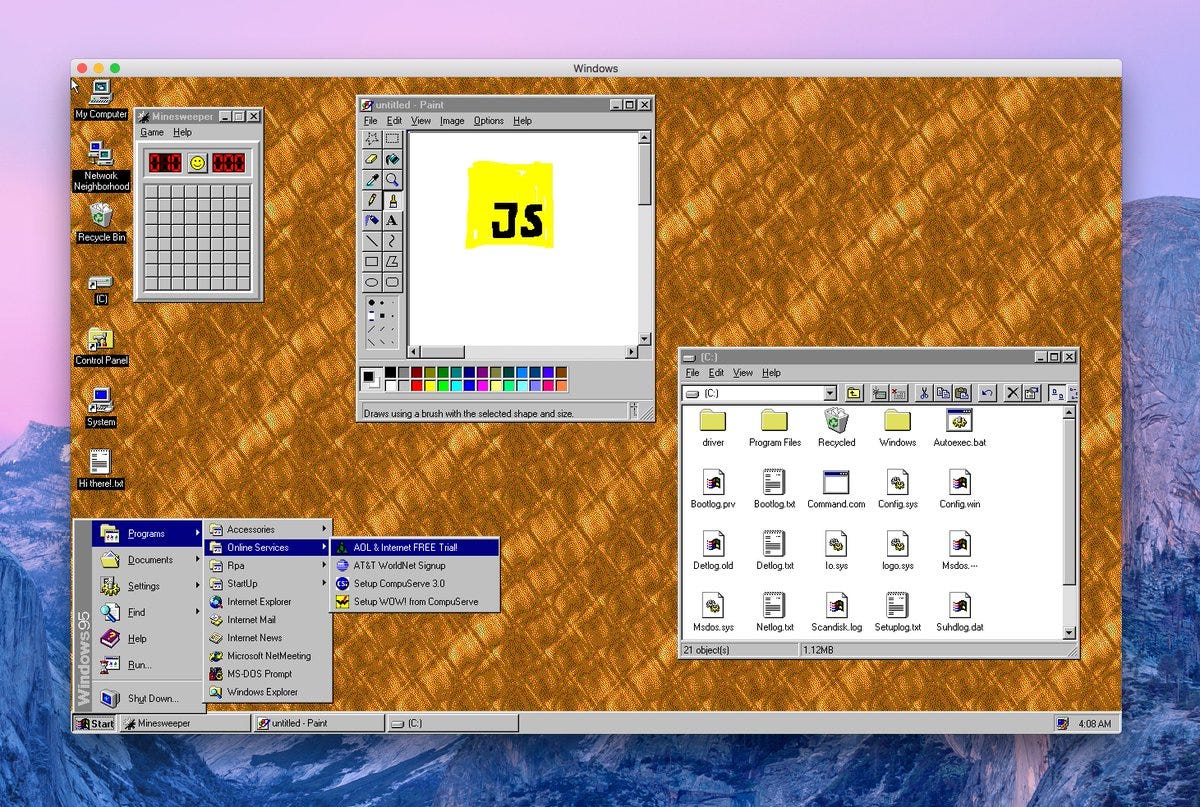

Overly structured, workflow-based systems that work with today's models will become bottlenecks tomorrow. Engineers must be prepared to shed abstractions and rebuild simpler, more general systems to capture the gains from exponentially improving models.

AI development history shows that complex, hard-coded approaches to intelligence are often superseded by more general, simpler methods that scale more effectively. This "bitter lesson" warns against building brittle solutions that will become obsolete as core models improve.

AI agents like OpenClaw learn via "skills," which are pre-written text instructions. The method works, but it is described as a "janky" workaround. It exposes a core weakness of current AI: the lack of true continual learning. This limitation is so profound that new startups are rethinking AI architecture from scratch to solve it.

Features built to guide AI agents, like an explicit "plan mode," will become obsolete as models become more capable. The Claude Code team embraces this, building what's needed for the best current experience and fully expecting to delete that code when a new model renders it unnecessary.

The "bitter lesson" of AI applies to product development: complex scaffolding built around model limitations (like early vector stores or agent frameworks) will inevitably become obsolete as the models themselves get smarter and absorb those functions. Don't over-engineer solutions that a future model will solve natively.

Early on, Google's Jules team built complex scaffolding with numerous sub-agents to compensate for model weaknesses. As models like Gemini improved, they found that simpler architectures performed better and were easier to maintain. The complex scaffolding was a temporary crutch, not a sustainable long-term solution.

The success of tools like Anthropic's Claude Code demonstrates that well-designed harnesses are what transform a powerful AI model from a simple chatbot into a genuinely useful digital assistant. The scaffolding provides the necessary context and structure for the model to perform complex tasks effectively.

Early agent development used simple frameworks ("scaffolds") to structure model interactions. As LLMs grew more capable, the industry moved to "harnesses"—more opinionated, "batteries-included" systems that provide default tools (like planning and file systems) and handle complex tasks like context compaction automatically.

The perceived limits of today's AI are not inherent to the models themselves but to our failure to build the right "agentic scaffold" around them. There's a "model capability overhang" where much more potential can be unlocked with better prompting, context engineering, and tool integrations.

While intricate software "scaffolding" can boost an AI agent's performance, progress is overwhelmingly driven by the core model. A new model generation typically achieves, with simple prompts, capabilities that previously required complex engineering.