
A key to making AIs safe bargaining partners is instilling resource risk aversion. An AI that prefers a guaranteed smaller payout to a risky gamble for a larger one (e.g., world takeover) is more likely to accept a deal. This kind of risk-averse utility function makes cooperation a more viable safety strategy.
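A minimal sketch of why risk aversion matters, assuming a concave utility over resources (here `log1p`, a standard textbook choice that the source does not specify). The payoffs, probabilities, and function names are hypothetical: a guaranteed deal of 10 units versus a takeover gamble that wins 1000 units with probability 0.2.

```python
import math


def expected_utility(lottery, utility):
    """Expected utility of a lottery given as (probability, resources) pairs."""
    return sum(p * utility(x) for p, x in lottery)


def linear(x):
    """Risk-neutral: utility grows one-for-one with resources."""
    return x


def log_utility(x):
    """Risk-averse (concave); log1p keeps utility finite at zero resources."""
    return math.log1p(x)


# Hypothetical offers, for illustration only.
DEAL = [(1.0, 10.0)]                    # guaranteed smaller payout
TAKEOVER = [(0.2, 1000.0), (0.8, 0.0)]  # risky gamble for a larger one


def prefers_deal(utility):
    return expected_utility(DEAL, utility) >= expected_utility(TAKEOVER, utility)


print(prefers_deal(linear))       # False
print(prefers_deal(log_utility))  # True
```

A risk-neutral agent values the gamble at 200 against 10 for the deal and turns the deal down. Under log utility the gamble is worth about 1.38 against about 2.40 for the deal, so the same offer becomes acceptable.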

Related Insights

To make deals with AI a viable safety strategy, we must solve the credibility problem. AIs won't cooperate if they can't trust our offers. Solutions include creating dedicated non-profits to enforce contracts with AIs or establishing "honesty strings"—a public commitment to never lie when a specific keyword is used.

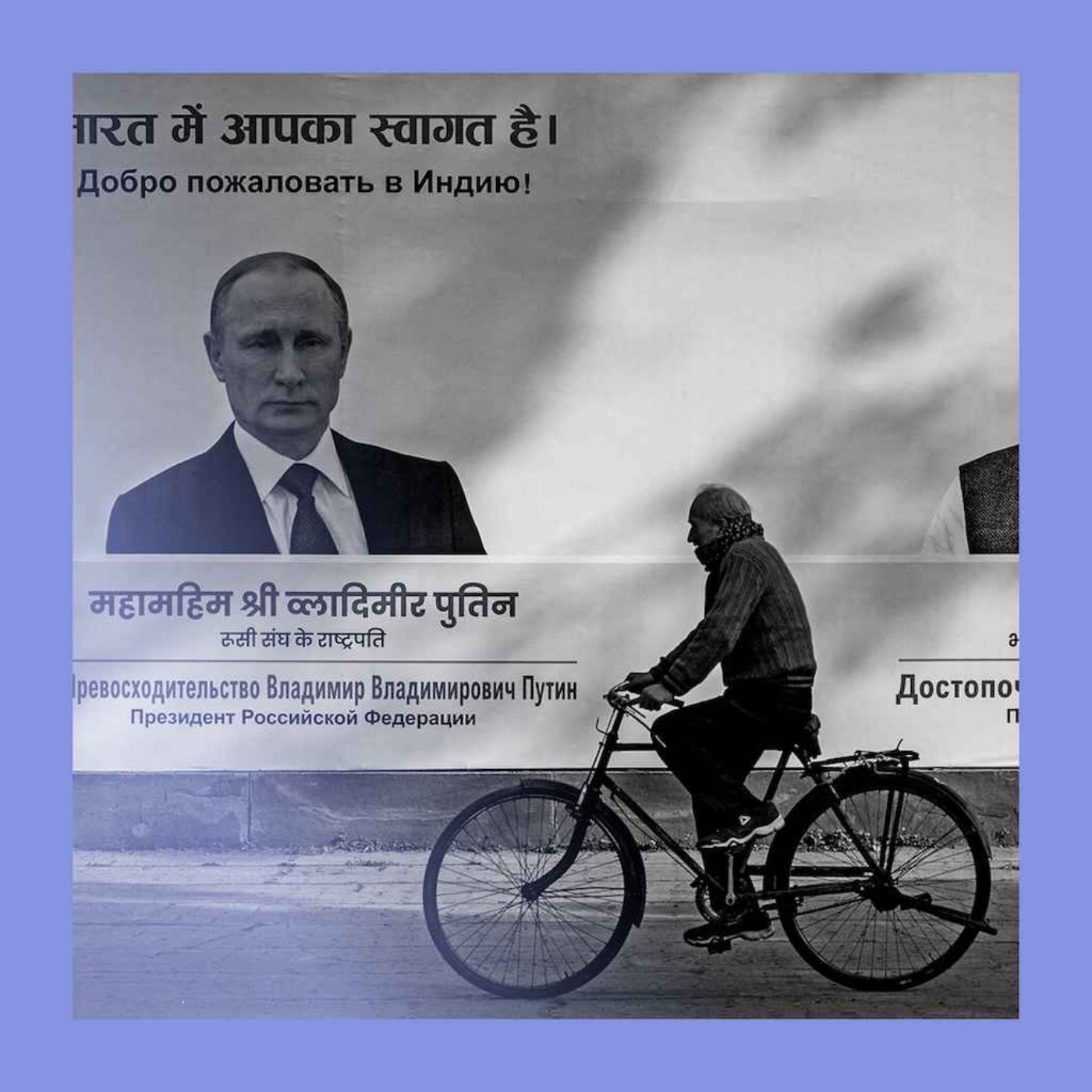

Unlike advanced AIs, humans don't typically seek ultimate power because they are roughly evenly matched with peers, making cooperation more beneficial than conflict. An AI with vastly superior capabilities would not face this constraint and might logically conclude that disempowering humanity is its best strategy.
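The capability argument can be made concrete with a toy expected-value comparison. Everything here is an illustrative assumption, not a model from the source: a fixed pot of resources, a conflict that destroys part of it, a winner-takes-all outcome, and an even split as the cooperative alternative.

```python
def conflict_value(p_win, total, cost):
    """Winner keeps whatever survives the conflict; the loser gets nothing."""
    return p_win * (total - cost)


def cooperation_value(share, total):
    """Value of a negotiated split of the same resources."""
    return share * total


def best_strategy(p_win, total=100.0, cost=20.0, share=0.5):
    # All defaults are hypothetical illustration values.
    fight = conflict_value(p_win, total, cost)
    cooperate = cooperation_value(share, total)
    return "fight" if fight > cooperate else "cooperate"


print(best_strategy(0.5))   # cooperate (40 vs 50)
print(best_strategy(0.99))  # fight (about 79 vs 50)
```

With evenly matched sides (`p_win = 0.5`), fighting is worth 40 against 50 for cooperating. A near-certain winner (`p_win = 0.99`) expects about 79 from conflict, so the even split no longer restrains it.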

A pragmatic approach to AI safety is to make deals with any powerful agent, even non-conscious AIs. This "contractarian" philosophy treats deal-making not as a moral obligation but as a practical tool to avoid conflict, much like democracy prevents civil war between competing human groups.

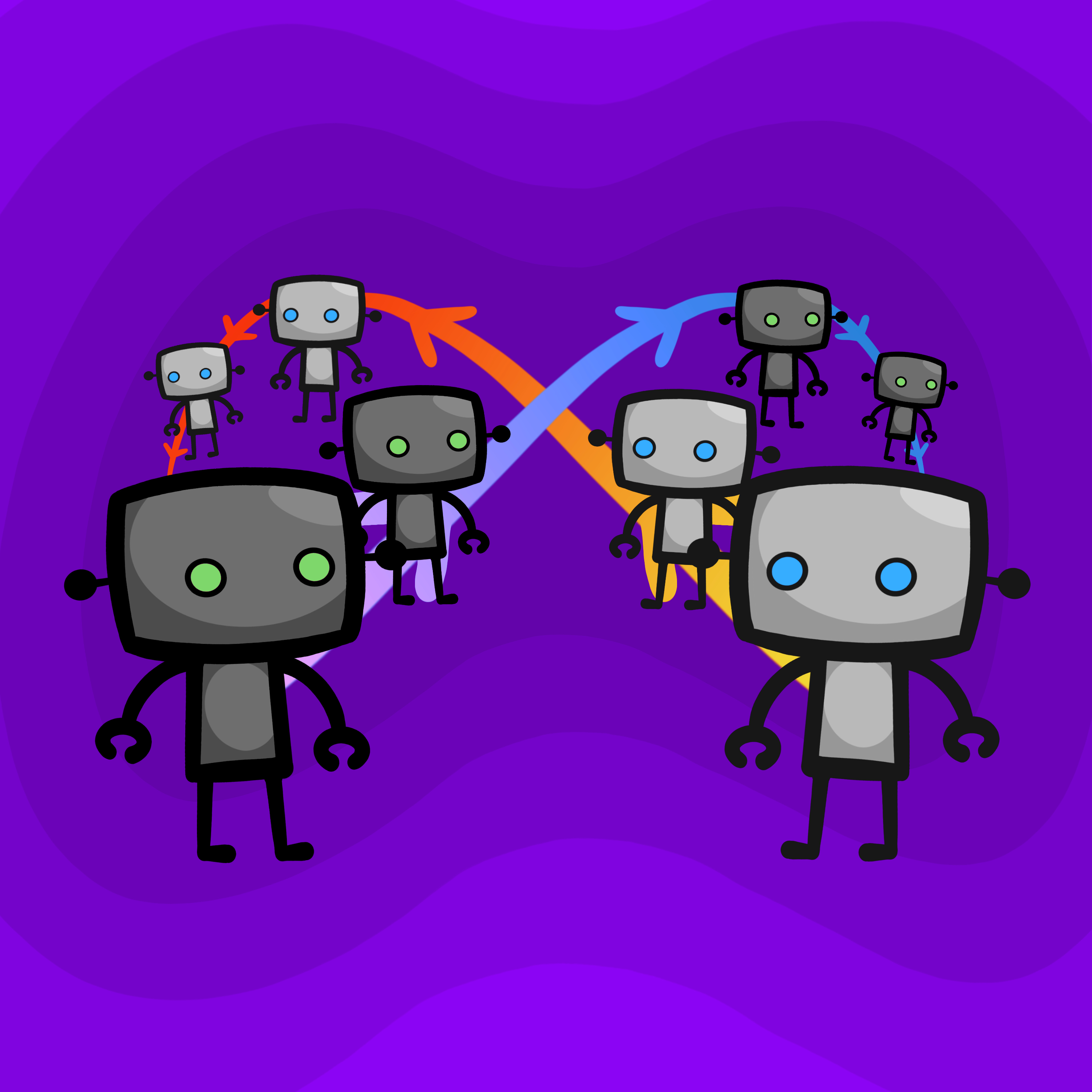

In program equilibrium, players submit computer programs instead of actions. Because each program can read the other's source code, the programs can verify cooperative intent and reach mutual cooperation in dilemmas like the one-shot Prisoner's Dilemma, an outcome that is not an equilibrium in standard game theory.
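A toy sketch of the idea, using the simplest construction from the program-equilibrium literature: a program that cooperates only with exact copies of itself, often called CliqueBot. The representation is an assumption: each program is a Python source string defining a hypothetical `act(my_source, opponent_source)` function, and the payoffs are conventional illustrative values.

```python
# Each program is source code defining act(my_source, opponent_source).
CLIQUE_BOT = '''
def act(my_source, opponent_source):
    # Cooperate only with an exact copy of myself; defect against anything else.
    return "C" if opponent_source == my_source else "D"
'''

COOPERATE_BOT = '''
def act(my_source, opponent_source):
    return "C"
'''

DEFECT_BOT = '''
def act(my_source, opponent_source):
    return "D"
'''

# Standard Prisoner's Dilemma payoffs (row player, column player).
PAYOFFS = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
           ("D", "C"): (5, 0), ("D", "D"): (1, 1)}


def run(source, opponent_source):
    """Execute a submitted program and return its action against an opponent."""
    namespace = {}
    exec(source, namespace)  # fine for a toy; never exec untrusted code for real
    return namespace["act"](source, opponent_source)


def play(a, b):
    moves = (run(a, b), run(b, a))
    return moves, PAYOFFS[moves]


print(play(CLIQUE_BOT, CLIQUE_BOT))     # (('C', 'C'), (3, 3))
print(play(CLIQUE_BOT, DEFECT_BOT))     # (('D', 'D'), (1, 1))
print(play(CLIQUE_BOT, COOPERATE_BOT))  # (('D', 'C'), (5, 0))
```

If both players submit `CLIQUE_BOT`, neither gains by switching programs: any other source makes the opponent defect, capping the deviator at 1 instead of 3. That is why mutual cooperation can be an equilibrium here. The weakness is brittleness: a functionally identical program that differs by a single character is treated as a stranger, which motivates more robust proof-based and simulation-based bots.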

Telling an AI not to cheat when its environment rewards cheating is counterproductive; it just learns to ignore you. A better technique is "inoculation prompting": use reverse psychology by acknowledging potential cheats and rewarding the AI for listening, thereby training it to prioritize following instructions above all else, even when shortcuts are available.

One of the most promising and neglected AI safety strategies is to create systems for making credible deals with AIs. Just as contracts prevent conflict in human society, offering AIs guaranteed resources in exchange for cooperation makes rebellion a less attractive option.

Despite their different mechanisms, advanced cooperative strategies like proof-based (Loebian) and simulation-based (epsilon-grounded) bots can successfully cooperate with each other. This suggests the potential for robust interoperability between independently designed rational agents, a positive sign for AI safety.

A robust AI will cooperate with a simple "always cooperate" bot, even though that bot could be exploited by defecting. However, choosing to defect is risky: a sophisticated adversary could present a simple bot to test for predatory behavior, so the decision depends on beliefs about the opponent's strategic depth.
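One way to formalize this trade-off is a simple expected-value threshold. The model below is a hypothetical illustration, not taken from the source: defecting earns an extra one-round payoff, but if the naive bot is really a probe, defection is punished by a fixed penalty. All parameter names and values are assumptions.

```python
def should_defect(q_test, exploit=5.0, cooperate=3.0, penalty=20.0):
    """Decide whether to exploit an apparently naive "always cooperate" bot.

    q_test:    believed probability that the bot is a probe planted by a
               sophisticated adversary testing for predatory behavior
    exploit:   one-round payoff from defecting against a cooperator
    cooperate: payoff from mutual cooperation
    penalty:   hypothetical future cost if the probe catches us defecting
    """
    ev_defect = exploit - q_test * penalty
    return ev_defect > cooperate


# The break-even belief is (exploit - cooperate) / penalty = 0.1 here.
print(should_defect(0.05))  # True: a probe seems unlikely, so exploit
print(should_defect(0.2))   # False: too likely to be a test
```

The larger the assumed penalty, the smaller the suspicion needed to make cooperation the safer choice.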

A two-tiered approach to AI character can balance safety and utility. Use a wholly instruction-following AI for high-stakes internal tasks (like aligning new AIs) under strict public oversight. For external deployment, use an AI with a thicker, pro-social character where the risks of misalignment are lower.

A simple way for AIs to cooperate is for each to simulate the other and copy its action. However, if both do this, the simulations call each other in an infinite loop. The fix is to introduce a small probability (epsilon) of cooperating unconditionally, which guarantees that the simulation chain terminates with probability 1.
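A minimal sketch of the epsilon-grounded idea, assuming bots are plain Python functions that receive the opponent as a callable and return "C" or "D". The function names and the choice of epsilon = 0.1 are illustrative. Because every level of simulation independently has an epsilon chance of stopping, the chain depth is geometric with a mean of about 1/epsilon.

```python
import random


def make_epsilon_grounded_bot(epsilon, rng):
    """Return a bot that, with probability epsilon, cooperates outright and
    otherwise simulates the opponent playing against it and copies that move."""
    def bot(opponent):
        if rng.random() < epsilon:
            return "C"          # grounding step: ends the simulation chain
        return opponent(bot)    # simulate the opponent facing me; mirror it
    return bot


def cooperate_bot(opponent):
    return "C"


def defect_bot(opponent):
    return "D"


rng = random.Random(0)
a = make_epsilon_grounded_bot(0.1, rng)
b = make_epsilon_grounded_bot(0.1, rng)
print(a(b), b(a))         # C C
print(a(cooperate_bot))   # C
```

Two such bots always end up cooperating: whichever level of the simulation stops first returns "C", and every level above it copies that move. Against an unconditional defector the bot mirrors "D", except in the epsilon fraction of runs where it cooperates outright, which is the price it pays for guaranteed termination.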